Fix Cloud Sync Timeout Errors — Resolve Transfer Failures with RcloneView

Nothing derails a critical backup like a timeout error at 95% completion.

Cloud sync timeout errors are among the most frustrating transfer failures users encounter. Whether you are uploading large datasets to Google Drive, syncing project folders to OneDrive, or backing up archives to S3, timeouts can halt progress and leave files in an inconsistent state. RcloneView provides built-in timeout management, automatic retries, and transfer monitoring that help you push through unstable connections and complete every sync job reliably.

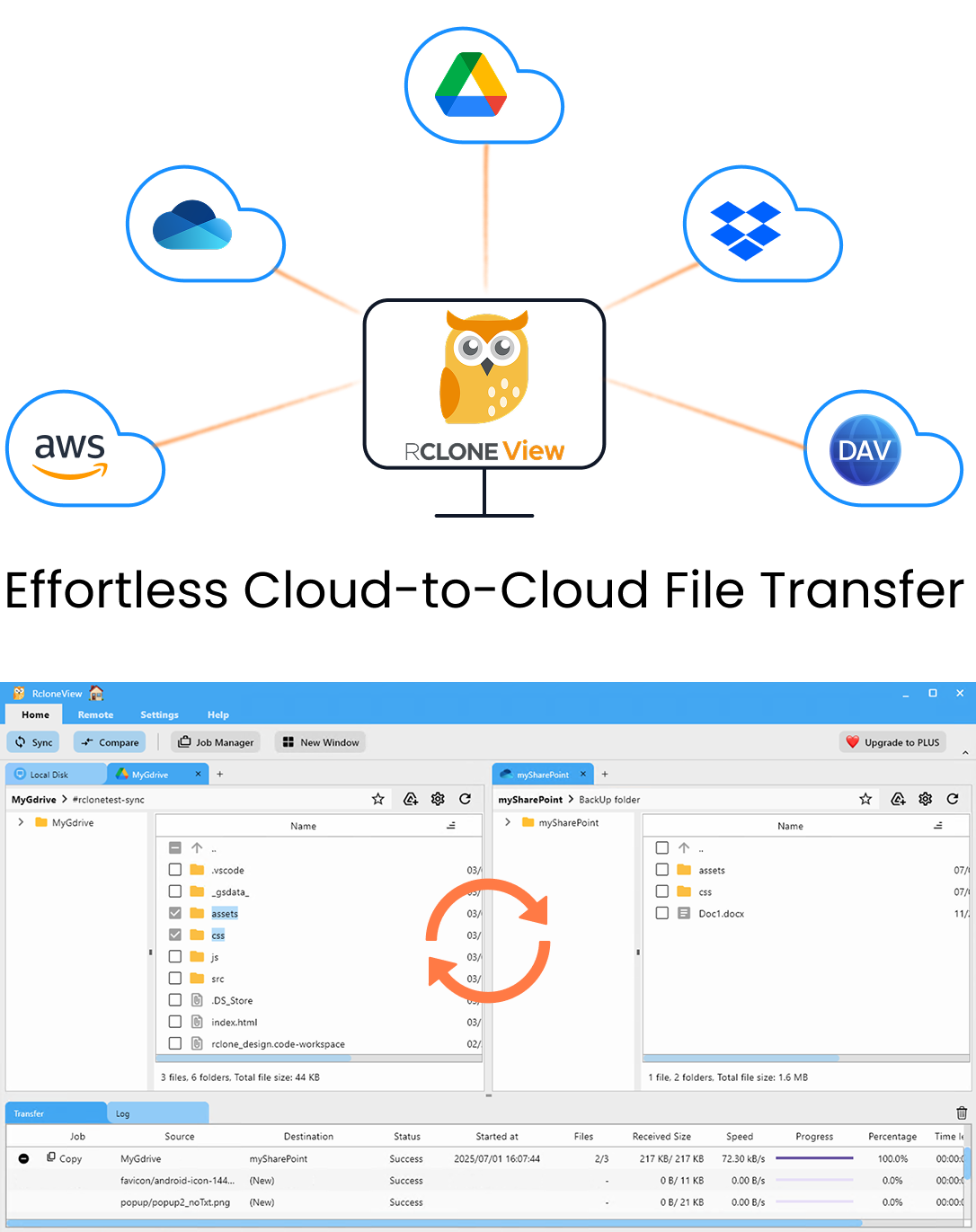

Manage & Sync All Clouds in One Place

RcloneView is a cross-platform GUI for rclone. Compare folders, transfer or sync files, and automate multi-cloud workflows with a clean, visual interface.

- One-click jobs: Copy · Sync · Compare

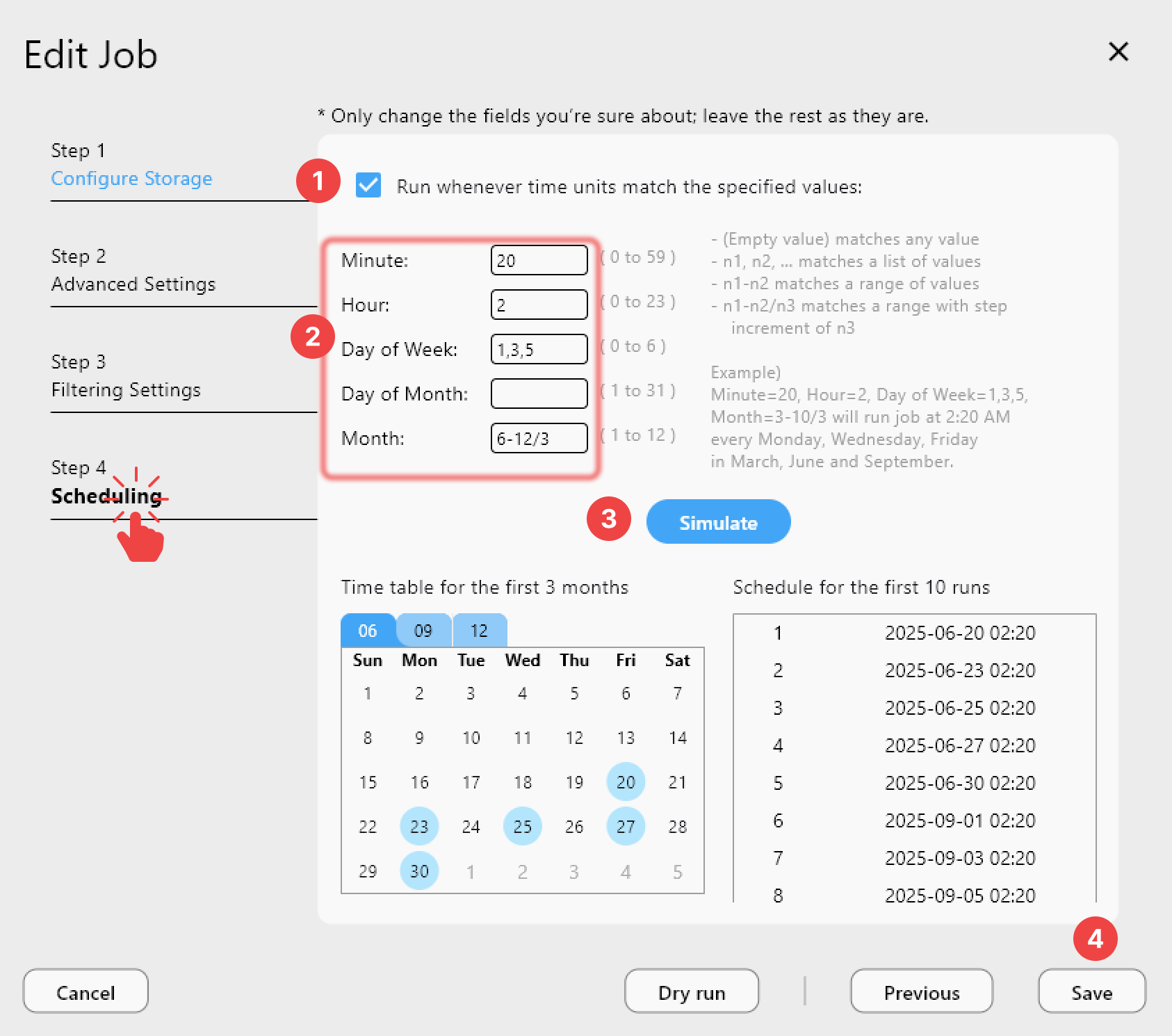

- Schedulers & history for reliable automation

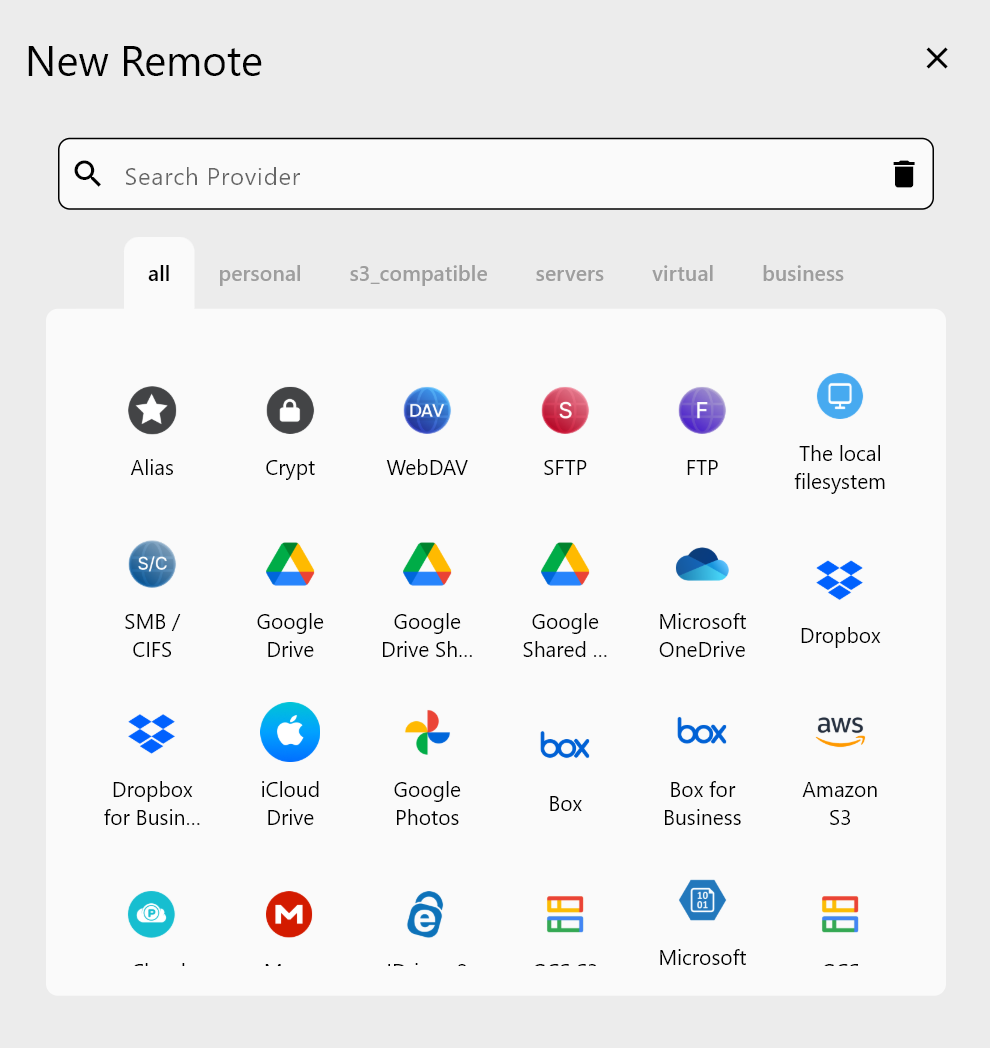

- Works with Google Drive, OneDrive, Dropbox, S3, WebDAV, SFTP and more

Free core features. Plus automations available.

Why Cloud Sync Timeouts Happen

Timeout errors occur when a cloud provider does not respond within the expected window. The root causes vary: an overloaded API endpoint, a congested network path, or a file that exceeds the provider's per-request time limit. Google Drive, for instance, may return a 408 Request Timeout when an upload chunk takes too long, while S3-compatible services return 504 Gateway Timeout under heavy load.

Large files amplify the problem. A single 10 GB upload can take minutes per chunk on a modest connection, and if the provider's idle timeout is shorter than the chunk transfer time, the request fails. Shared networks, VPN tunnels, and corporate proxies add latency that further shrinks the effective timeout margin.

RcloneView surfaces these errors clearly in its transfer log so you can distinguish a timeout from a permissions error or a quota issue, which is the first step toward a targeted fix.

Adjusting Timeout and Retry Settings

The most direct fix is increasing the low-level timeout values. In RcloneView's job configuration, you can set --timeout (default 5m) and --contimeout (default 1m) to higher values. For large-file workloads over slow links, setting --timeout 15m prevents premature disconnects during chunk uploads.

Equally important is the retry strategy. RcloneView lets you configure --retries (default 3) and --retries-sleep to add a backoff delay between attempts. A configuration of --retries 5 --retries-sleep 10s gives transient provider issues time to clear before the next attempt, dramatically improving completion rates on flaky connections.

For chunked uploads, reducing --drive-chunk-size or --s3-chunk-size means each individual request completes faster, staying well within the provider's timeout window. A 16 MB chunk on a 10 Mbps link takes roughly 13 seconds — safely inside most timeout thresholds.

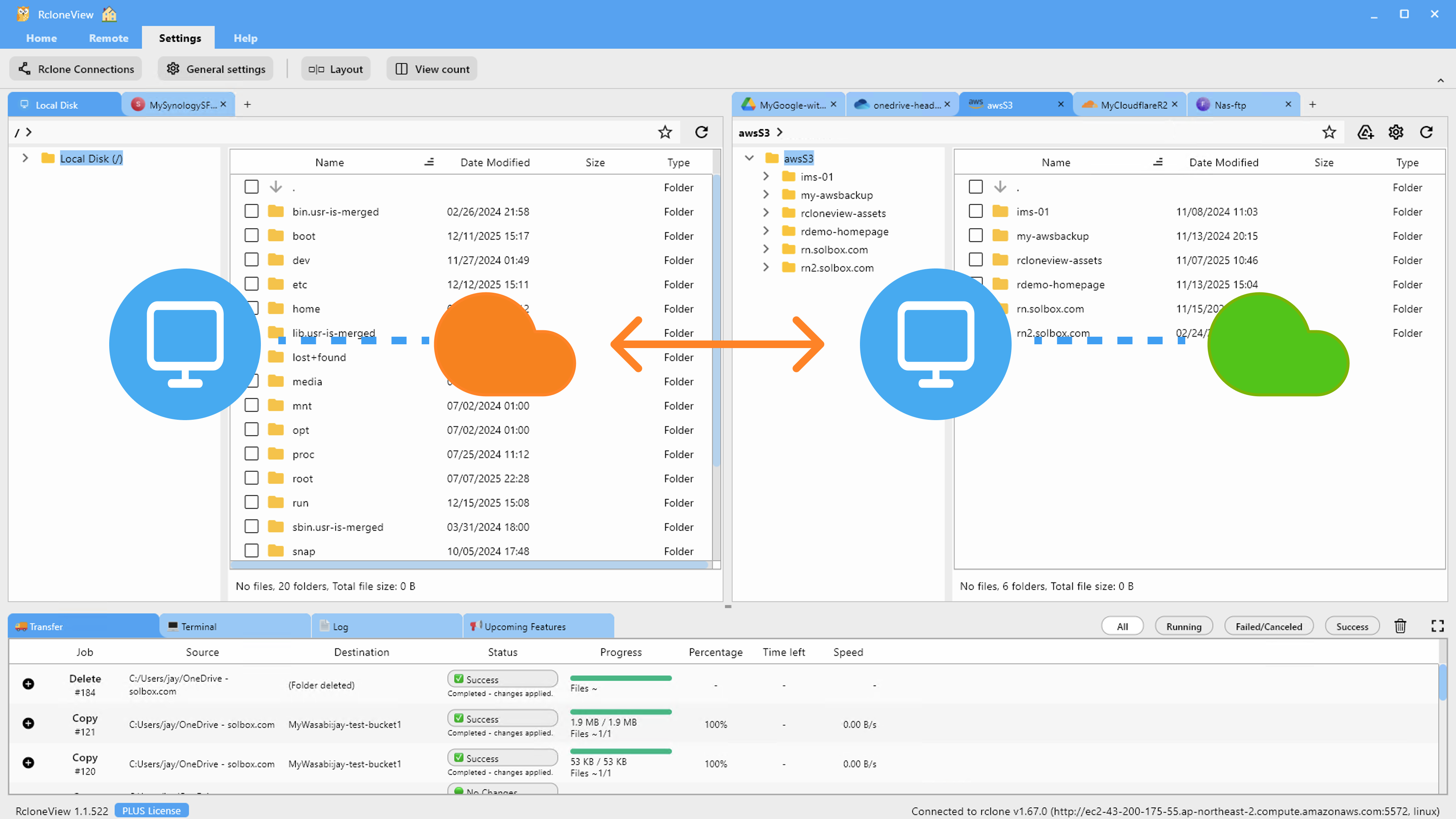

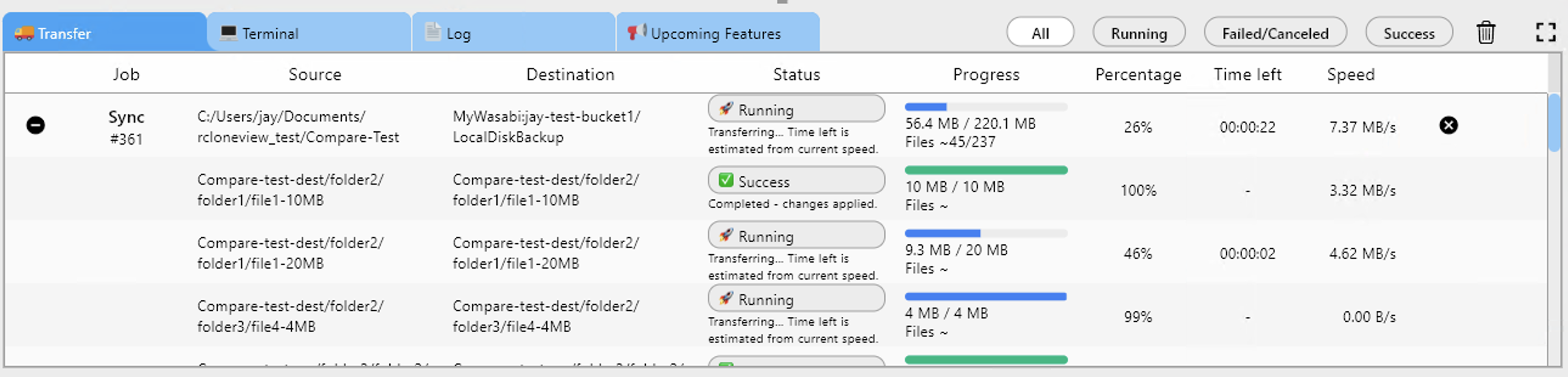

Monitoring Transfers in Real Time

RcloneView's real-time transfer dashboard shows per-file progress, current speed, and elapsed time. When a transfer stalls, you see it immediately rather than waiting for a timeout to expire. This visibility lets you cancel and restart a stuck file before it triggers a cascade of retry failures.

The job history panel records every timeout event with timestamps and error codes. Over time, patterns emerge — timeouts clustering at certain hours may indicate provider-side maintenance windows, while consistent failures on files above a specific size point to chunk-size tuning opportunities.

Combining real-time monitoring with scheduled retries means you can set a sync job to run overnight and review the results in the morning, confident that transient timeouts were handled automatically.

Preventing Timeouts with Bandwidth Management

Saturating your upload bandwidth increases latency on the same connection, which can trigger timeouts on subsequent requests. RcloneView's --bwlimit flag lets you cap bandwidth — for example, --bwlimit 80M on a 100 Mbps link — leaving headroom for TCP acknowledgments and API round-trips.

You can also limit concurrent transfers with --transfers. Reducing from the default 4 to 2 on a constrained link means each transfer gets more bandwidth, completing chunks faster and avoiding idle-timeout thresholds.

Getting Started

- Download RcloneView from rcloneview.com.

- Open your sync job settings and increase

--timeoutto 10m or 15m for large transfers. - Set retries to 5 with a 10-second sleep interval to handle transient provider errors.

- Reduce chunk size if individual upload requests are timing out on slower connections.

With the right timeout, retry, and bandwidth settings, cloud sync failures become a thing of the past.

Related Guides:

- Fix Slow Cloud Transfers — Speed Up Sync with RcloneView

- Fix Cloud Sync Stuck or Hanging — Resolve Stalled Transfers with RcloneView

- Resume Failed Cloud Transfers — Recover Interrupted Syncs with RcloneView