Fix Cloud Sync Creating Duplicate Files — How to Resolve with RcloneView

Cloud sync that produces duplicate files signals a specific configuration mistake — RcloneView's job type settings and Folder Compare make it straightforward to diagnose, clean up, and prevent recurrence.

Cloud sync operations that produce duplicate files — copies with the same name but different modification timestamps, or files with suffixes like -copy or (1) — compound storage costs with every run and signal an underlying configuration problem. RcloneView uses rclone's deterministic sync engine, and when duplicates appear, the root cause is almost always job type mismatch, conflicting operations, or bidirectional sync conflicts.

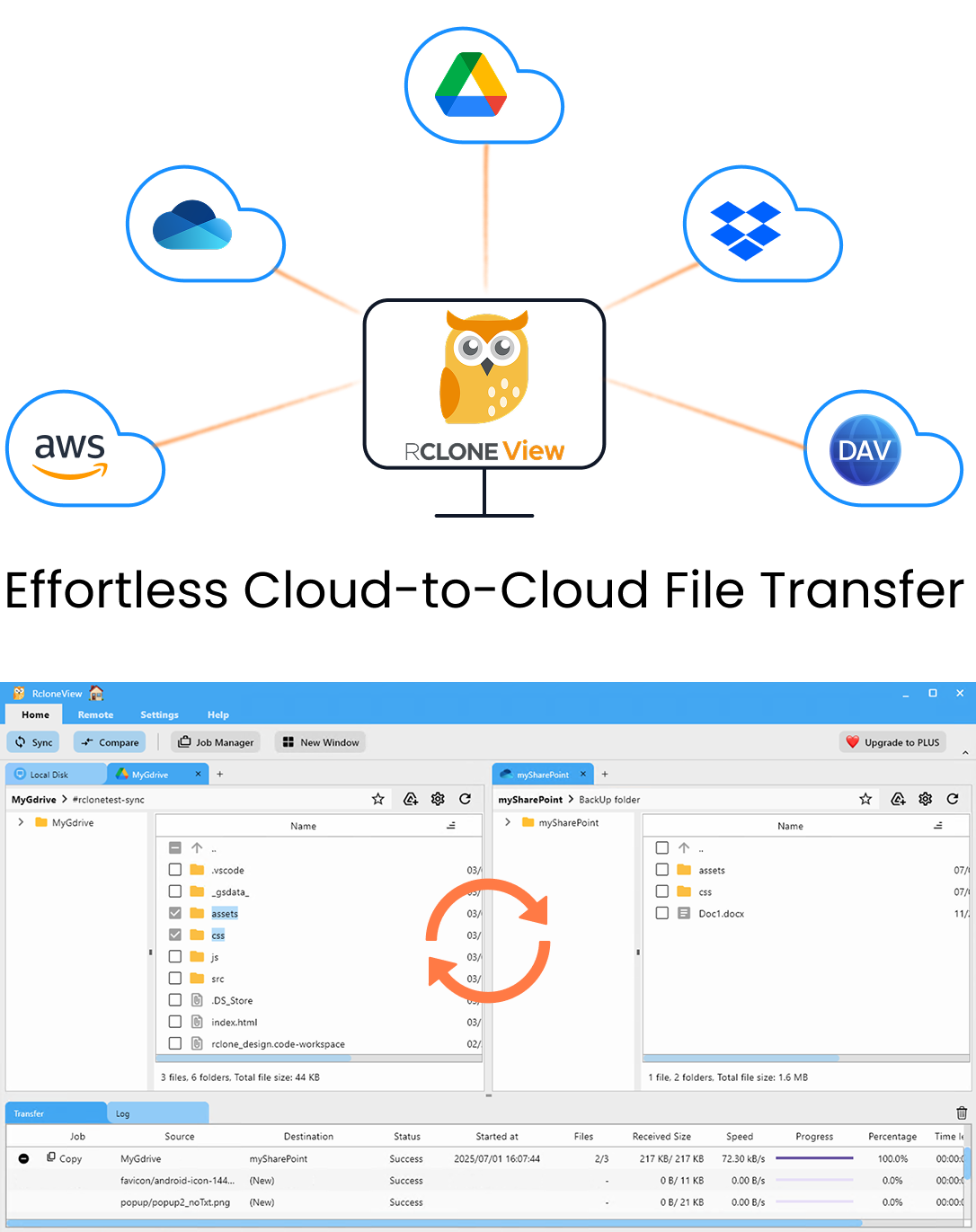

Manage & Sync All Clouds in One Place

RcloneView is a cross-platform GUI for rclone. Compare folders, transfer or sync files, and automate multi-cloud workflows with a clean, visual interface.

- One-click jobs: Copy · Sync · Compare

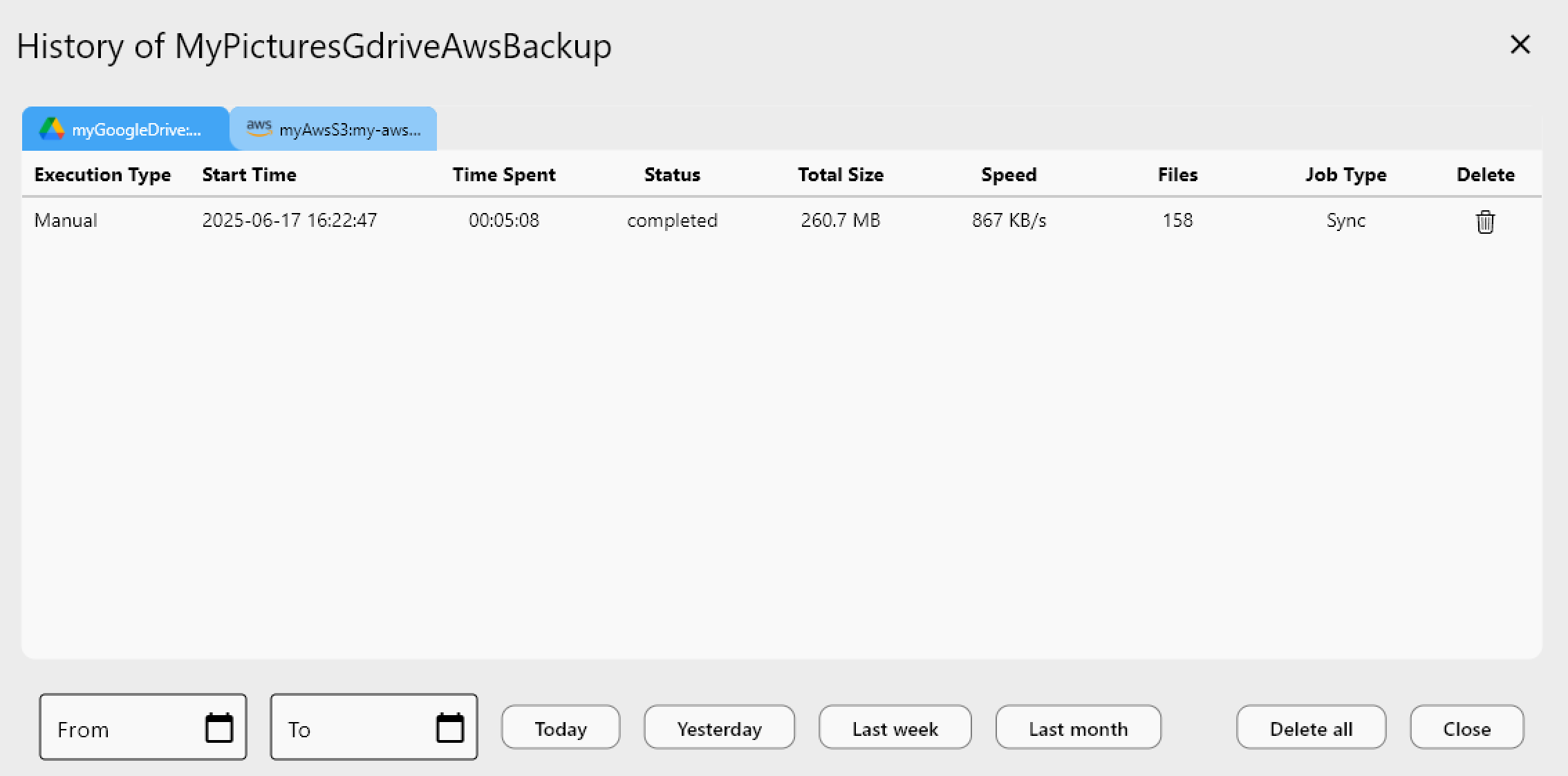

- Schedulers & history for reliable automation

- Works with Google Drive, OneDrive, Dropbox, S3, WebDAV, SFTP and more

Free core features. Plus automations available.

Why Sync Produces Duplicates

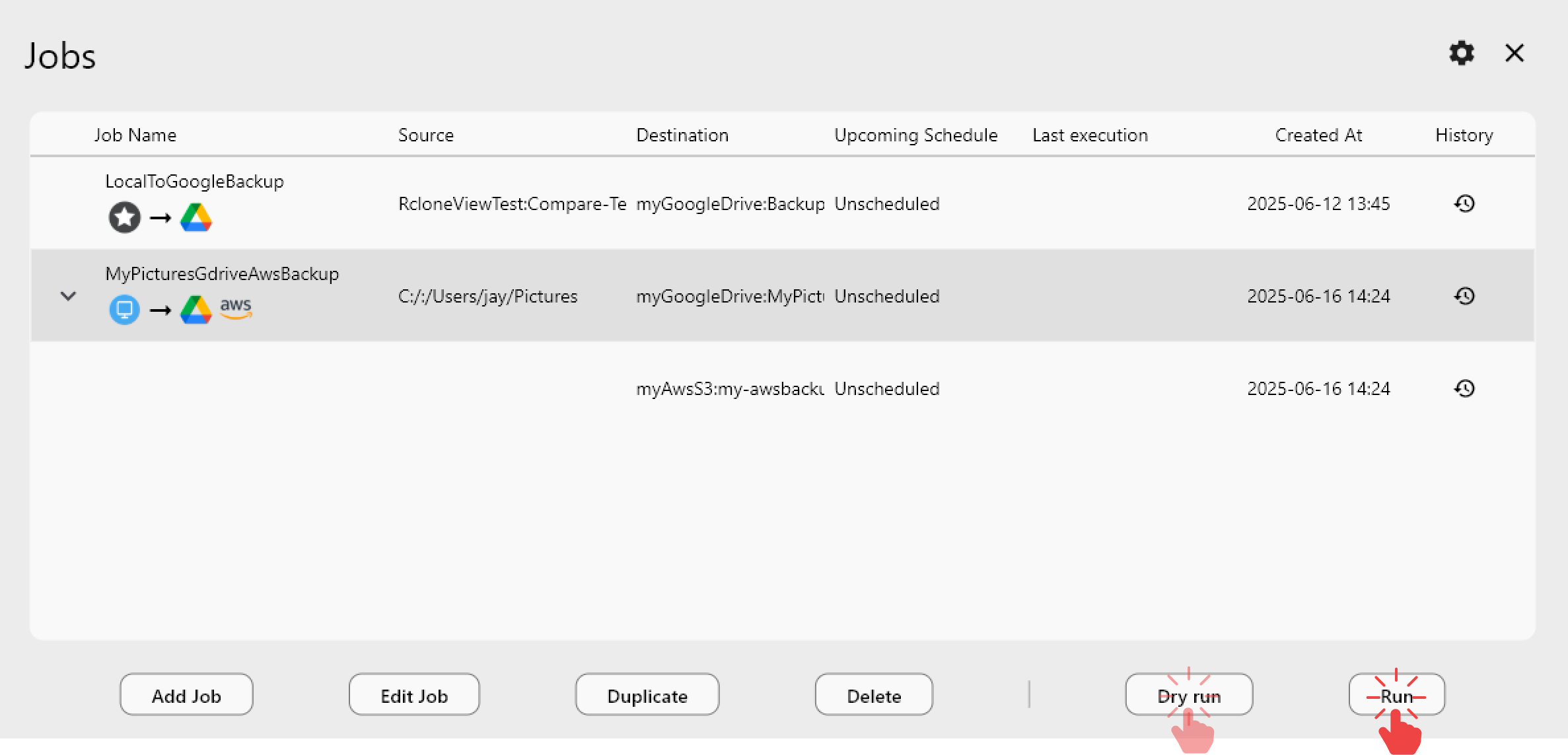

The most frequent cause: using Copy job type where Sync should be used. A Copy job always adds files at the destination — if the destination already has files from a previous run, a second copy creates duplicates with newer timestamps or appended suffixes. Switching to the Sync job type in Job Manager ensures the destination mirrors the source exactly: extra files at the destination are removed, and only differences are transferred.

RcloneView's Bidirection sync mode (currently Beta) can produce conflict copies when both sides modify the same file between sync runs. Rclone creates a conflict copy on one side to preserve both versions. For production workflows, one-way sync (the default "Modifying destination only" mode) prevents this entirely — use bidirectional only when genuinely needed.

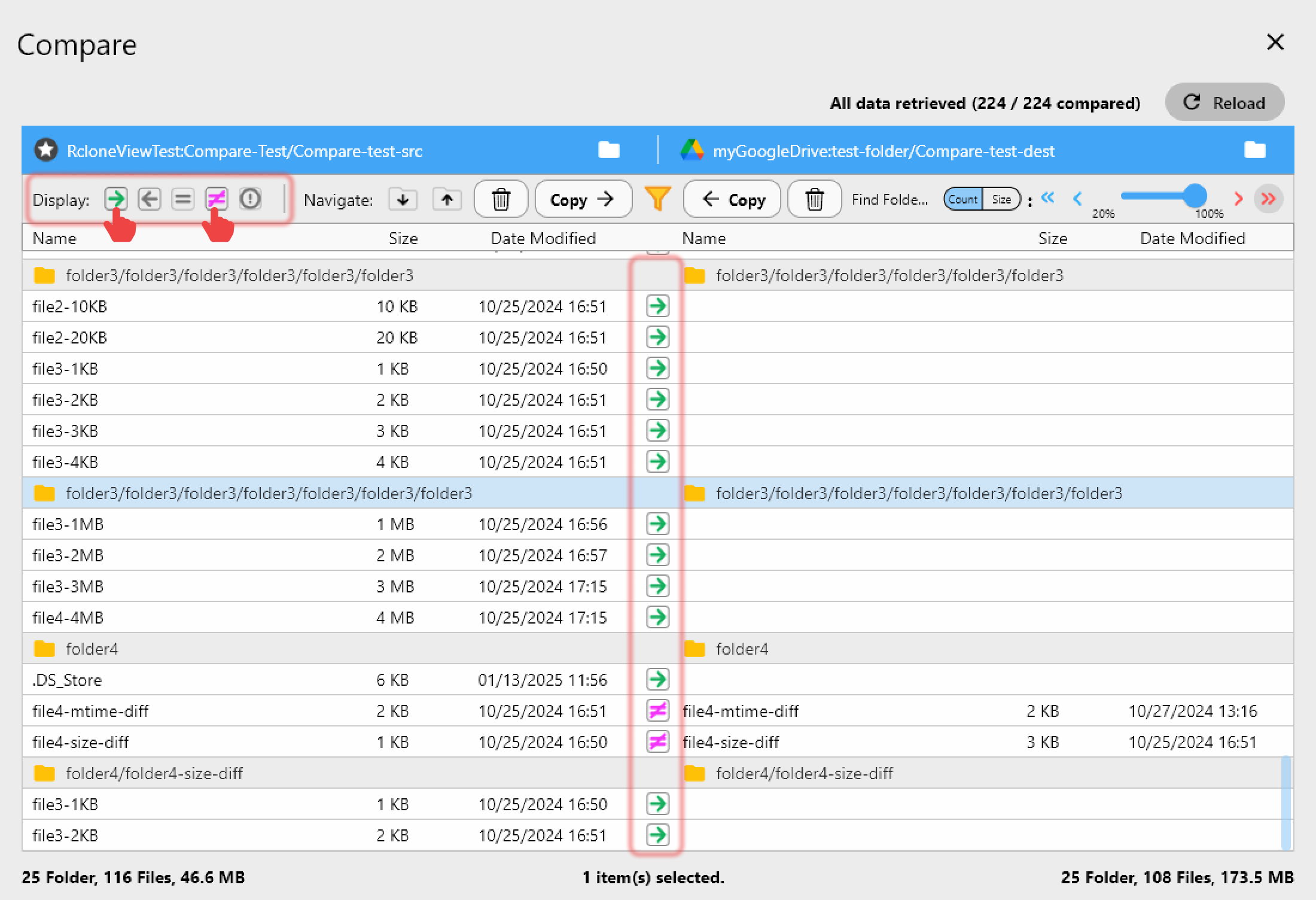

Finding and Removing Existing Duplicates

Use RcloneView's Folder Compare to identify files with identical names but different content existing across two locations. The "different files" filter highlights files where size doesn't match, while the "same files" filter confirms exact matches. Files that appear on both sides but shouldn't be duplicated can be removed from one side using Folder Compare's delete action.

For cleaning up large numbers of existing duplicates within a single cloud, RcloneView's built-in Terminal tab lets you run rclone dedupe with appropriate deduplication strategy flags — identifying files with identical content regardless of name and keeping only one copy. The terminal provides full rclone CLI access without leaving the RcloneView interface.

Configuring Sync to Prevent Recurrence

In Job Manager, verify these settings for clean, duplicate-free sync behavior:

- Use Sync job type for mirror operations (not Copy)

- Set sync direction to "Modifying destination only" (one-way) for stable behavior

- Enable Dry Run before the first run on a new destination to verify the operation list

Enabling checksum comparison in Advanced Settings makes the sync more precise — files are compared by both hash and size rather than just timestamp and size, preventing cases where files with identical sizes but different content are treated as equal.

Getting Started

- Download RcloneView from rcloneview.com.

- Identify duplicate-producing jobs in Job Manager — switch Copy jobs to Sync where appropriate.

- Use Folder Compare to find and remove existing duplicates between source and destination.

- Enable Dry Run before running jobs on new destinations to verify behavior before committing.

Duplicate files in cloud sync are a solvable configuration problem — the right job type and sync direction settings in RcloneView prevent them from appearing in the first place.

Related Guides:

- Find and Remove Duplicate Files in Cloud Storage with RcloneView

- Sync vs Copy vs Move — Difference Explained with RcloneView

- Dry Run — Preview Sync Before Transfer in RcloneView