Fix Connection Timeout on Large File Uploads — Solve with RcloneView

Large file uploads to cloud storage fail with timeout errors more often than small-file uploads. Here's how to diagnose the root cause and configure RcloneView to handle multi-GB transfers reliably.

Uploading a 20 GB video archive or a 50 GB database dump to cloud storage is fundamentally different from syncing a folder of documents. Large files stress connection stability, exhaust default timeout thresholds, and expose multipart chunking limitations that small-file transfers never encounter. RcloneView exposes the rclone flags you need to tune these parameters — through Global Rclone Flags and per-job settings — without requiring you to write shell scripts.

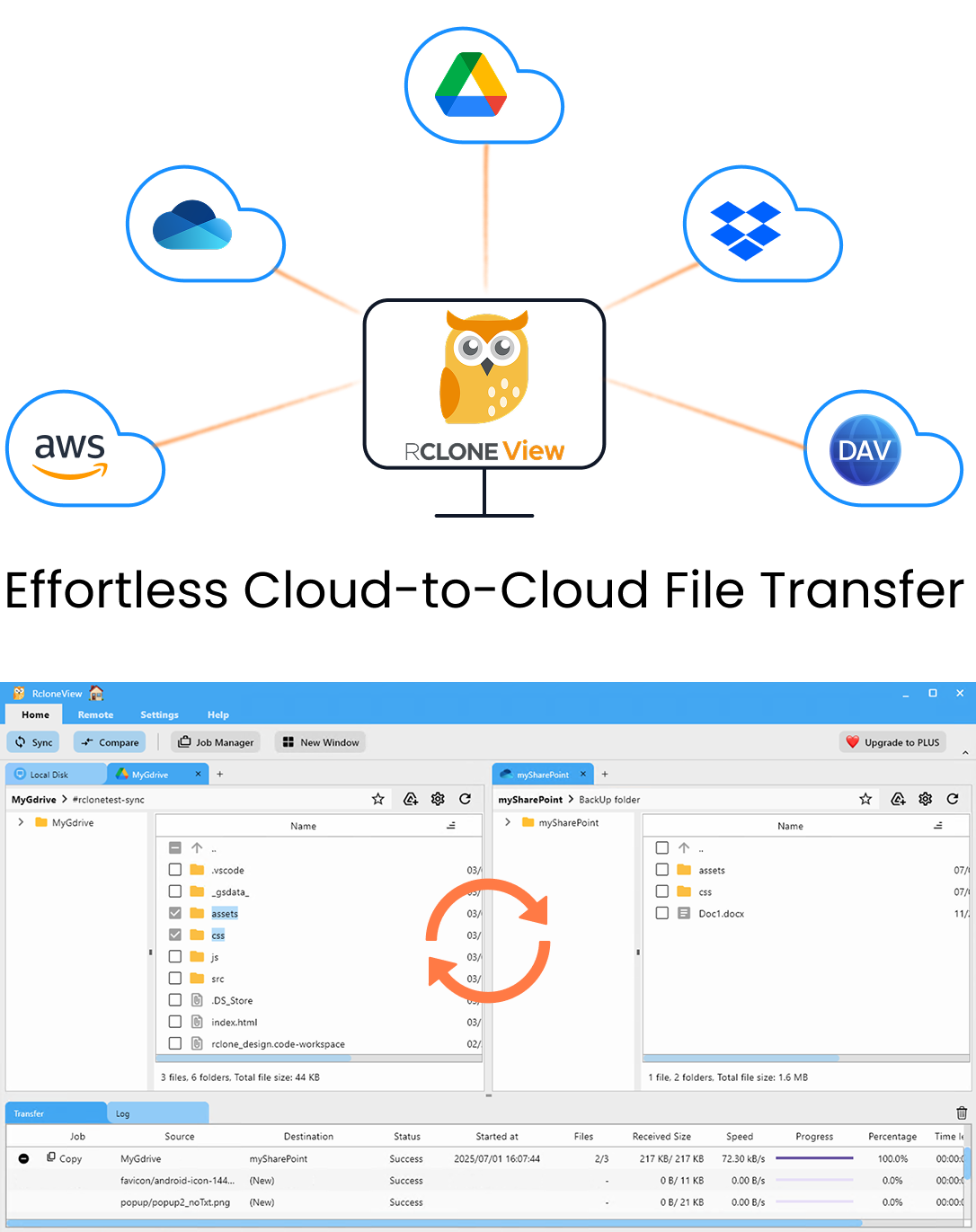

Manage & Sync All Clouds in One Place

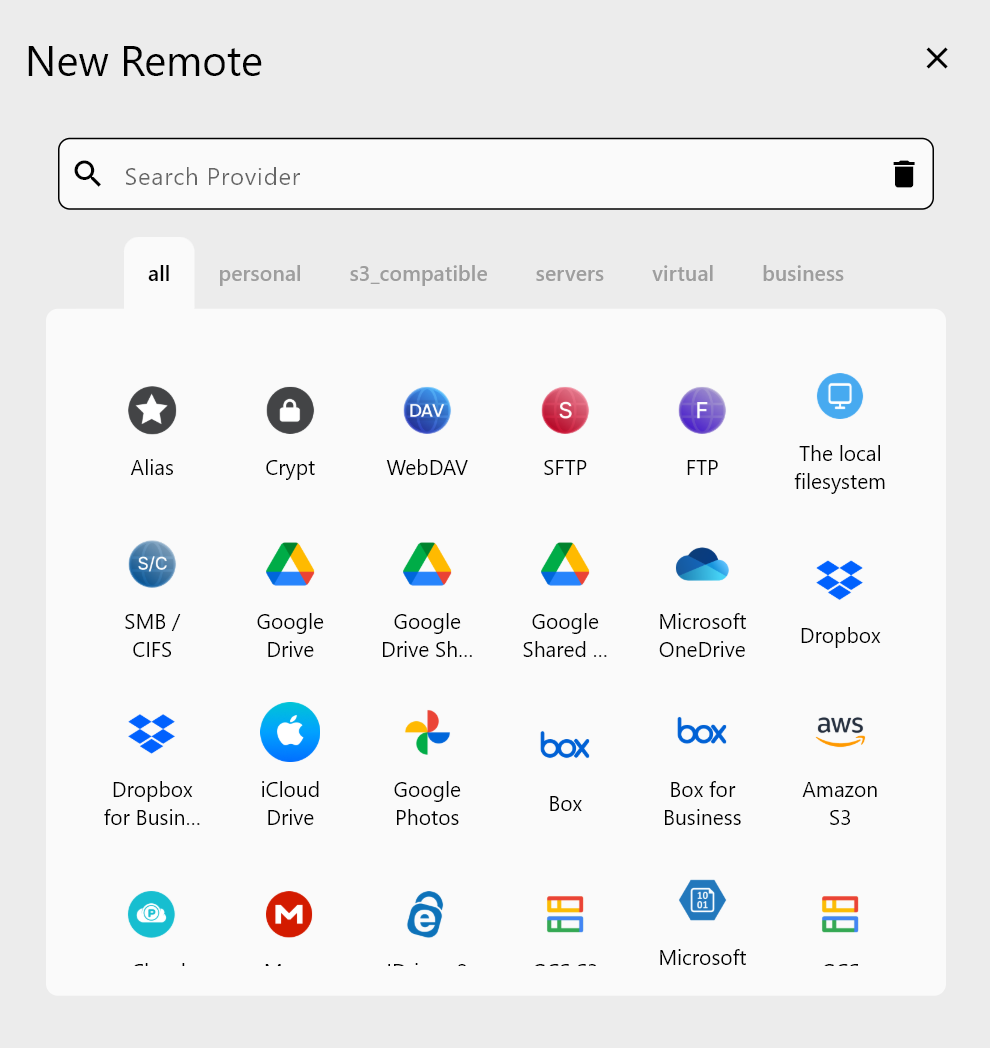

RcloneView is a cross-platform GUI for rclone. Compare folders, transfer or sync files, and automate multi-cloud workflows with a clean, visual interface.

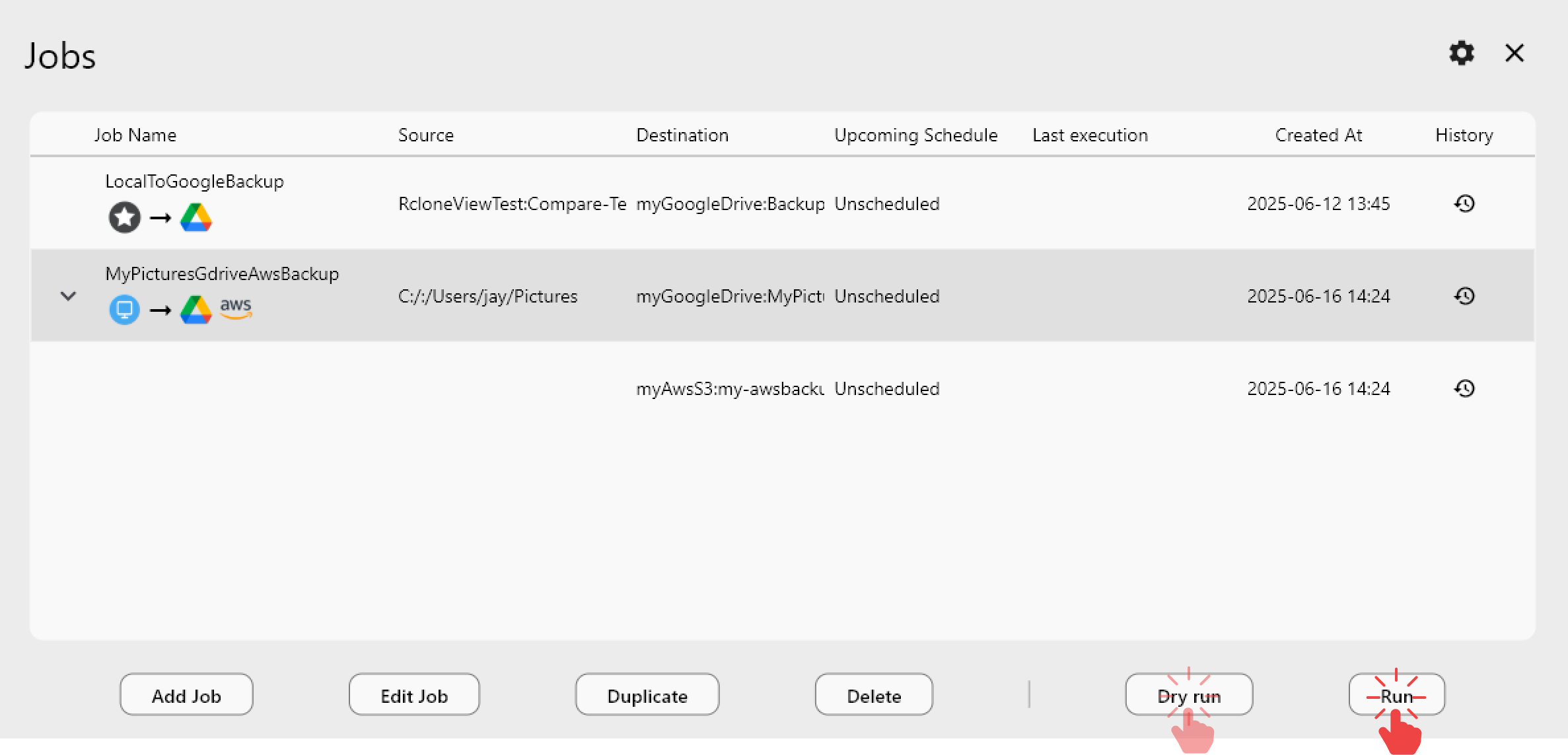

- One-click jobs: Copy · Sync · Compare

- Schedulers & history for reliable automation

- Works with Google Drive, OneDrive, Dropbox, S3, WebDAV, SFTP and more

Free core features. Plus automations available.

Recognizing Timeout Errors in RcloneView

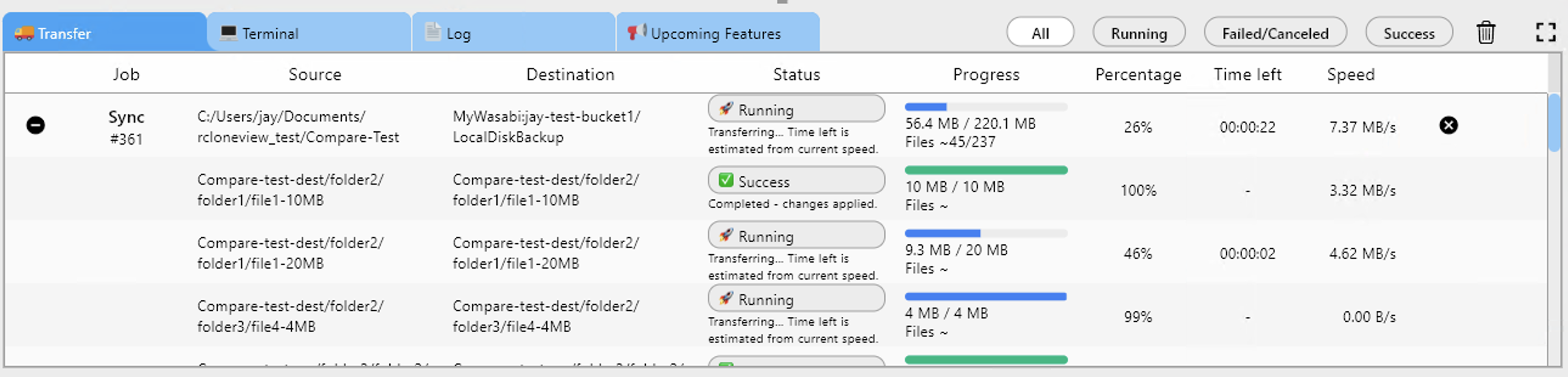

When a large file upload times out, RcloneView's Log tab shows entries like "Failed to copy: net/http: request canceled (Client.Timeout exceeded)" or "RequestTimeout: Your socket connection to the server was not read from or written to within the timeout period." The Transferring tab shows the affected file stalling at a partial percentage before the job reports an error.
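If you export or copy the log text, a small script can pull out the timeout entries for you. The sketch below is a minimal illustration, not part of RcloneView: it matches only the two message fragments quoted above, and the surrounding log line layout (the `INFO`/`ERROR` prefixes and file names) is a made-up sample.

```python
import re

# Substrings taken from the two example log entries quoted in the text.
TIMEOUT_PATTERNS = [
    re.compile(r"Client\.Timeout exceeded"),
    re.compile(r"RequestTimeout"),
]


def find_timeout_lines(log_text):
    """Return (line_number, line) pairs for lines that look like timeouts."""
    hits = []
    for number, line in enumerate(log_text.splitlines(), start=1):
        if any(pattern.search(line) for pattern in TIMEOUT_PATTERNS):
            hits.append((number, line.strip()))
    return hits


# Hypothetical log excerpt; real log lines may be laid out differently.
sample_log = """\
INFO  : docs/readme.txt: Copied (new)
ERROR : video/archive.mp4: Failed to copy: net/http: request canceled (Client.Timeout exceeded)
ERROR : db/dump.sql: Failed to copy: RequestTimeout: Your socket connection to the server was not read from or written to within the timeout period.
"""

for number, line in find_timeout_lines(sample_log):
    print(number, line)
```

If every hit points at the same few large files, the problem is likely chunk size or timeout settings rather than credentials or permissions.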

Connection timeouts during large uploads are most common on:

- S3-compatible providers with strict part upload time windows

- Cloud APIs that close idle connections after 30–60 seconds

- Network paths with intermittent packet loss inflating round-trip latency

Adjusting Chunk Size and Timeout Flags

The most effective fix for large-file timeout errors is adjusting the chunk size for multipart uploads. In RcloneView, go to Settings → Embedded Rclone → Global Rclone Flags and add:

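- --s3-chunk-size 128M: increases the S3 multipart chunk size from the 5 MiB default to 128 MiB, reducing the number of API round-trips per file

- --s3-upload-cutoff 200M: sets the file size threshold above which multipart uploads are used (200M is also rclone's default, so this flag only matters if you pick a different value)

- --timeout 10m: raises rclone's IO idle timeout, the time a connection may sit with no data flowing before it is closed, from the 5-minute default to 10 minutes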

For Google Drive, use --drive-chunk-size 128M instead of the S3 flag. For Backblaze B2, use --b2-chunk-size 128M.
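To see why chunk size matters, it helps to count the parts a multipart upload needs. The arithmetic below is a rough sketch that assumes the file sizes mentioned earlier are binary gigabytes (GiB) and that rclone's `M` suffix means MiB; `part_count` is a helper name made up for this example.

```python
MIB = 1024 ** 2
GIB = 1024 ** 3


def part_count(file_size, chunk_size):
    """Number of multipart parts needed, rounding the last part up."""
    return (file_size + chunk_size - 1) // chunk_size


for size_gib in (20, 50):
    for chunk_mib in (5, 128):
        parts = part_count(size_gib * GIB, chunk_mib * MIB)
        print(f"{size_gib} GiB file, {chunk_mib} MiB chunks: {parts} parts")
```

A 20 GiB file drops from 4096 parts to 160, and a 50 GiB file from 10240 parts to 400. The 10240 figure is above the 10,000-part limit that S3 places on a single multipart upload. Rclone's documentation says it raises the chunk size automatically for files of known size to stay under that limit, but setting a larger chunk size explicitly keeps the behavior predictable.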

Enabling Retries and Transfer Resumption

Enable "Retry entire sync if fails" in the sync wizard's Step 2 (set to 3 or 5 retries). Within a multipart upload, rclone retries failed low-level operations such as an individual chunk upload (governed by --low-level-retries, 10 by default) instead of restarting the whole file, which minimizes wasted transfer time on S3-compatible providers. If a file's upload fails outright, or the provider does not support chunked uploads (like basic WebDAV), the retry restarts that file, but the overall job continues without re-transferring already-completed files.
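The job-level retry behavior can be pictured with a toy simulation. This is a simplified sketch, not rclone's implementation: rclone decides what still needs transferring by comparing source and destination, whereas the example just remembers finished files in a set. The function names and file names are invented for illustration.

```python
def run_with_retries(files, upload, max_retries=3):
    """Run the job up to max_retries times, skipping files already done."""
    done = set()
    for attempt in range(1, max_retries + 1):
        for name in files:
            if name in done:
                continue  # transferred on an earlier pass, not sent again
            try:
                upload(name)
                done.add(name)
            except TimeoutError:
                pass  # leave the file for the next pass
        if len(done) == len(files):
            return attempt, done
    return max_retries, done


calls = []


def flaky_upload(name):
    """Simulated upload: the large file times out on its first attempt."""
    calls.append(name)
    if name == "big.bin" and calls.count("big.bin") == 1:
        raise TimeoutError("simulated timeout")


attempts, done = run_with_retries(["a.txt", "big.bin", "c.txt"], flaky_upload)
print(attempts, calls)
```

The job finishes on the second pass, and only `big.bin` is uploaded twice. The small files are not re-sent.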

Reduce concurrent transfers for large-file jobs. Setting "Number of file transfers" to 2–4 reduces peak bandwidth demand and connection slot contention, which are the underlying causes of many timeout errors on congested networks.
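Fewer concurrent transfers also keep memory use in check once chunk sizes grow. For S3, rclone's documentation notes that each transfer buffers `--s3-upload-concurrency` chunks in memory. The estimate below assumes that flag is left at its default of 4 and uses the 128M chunk size suggested earlier; `s3_buffer_bytes` is a helper name made up for this sketch, and actual memory use will vary.

```python
MIB = 1024 ** 2


def s3_buffer_bytes(transfers, chunk_size, upload_concurrency=4):
    """Rough upper bound on memory held in multipart chunk buffers."""
    return transfers * upload_concurrency * chunk_size


for transfers in (2, 4, 8):
    total = s3_buffer_bytes(transfers, 128 * MIB)
    print(f"{transfers} transfers: about {total // MIB} MiB of chunk buffers")
```

With these assumptions, 2 transfers need about 1 GiB of buffers, 4 need about 2 GiB, and 8 need about 4 GiB, which is one more reason to stay in the 2–4 range on machines with limited RAM.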

Getting Started

- Download RcloneView from rcloneview.com.

- Check the Log tab for timeout error messages after a failed large-file upload.

- Add --s3-chunk-size 128M and a longer --timeout to Global Rclone Flags in Settings.

- Set concurrent transfers to 2–4 and enable 3–5 retries in the sync job wizard.

With the right chunk size and retry configuration, RcloneView handles multi-GB uploads reliably — even on imperfect network connections.

Related Guides:

- Upload Large Files to Google Drive Sync with RcloneView

- Fix Slow Cloud Uploads — Speed Optimization with RcloneView

- Fix S3 Multipart Upload Failures with RcloneView