Migrate MEGA to AWS S3 with RcloneView: Step-by-Step Guide

Moving from MEGA to Amazon S3 means shifting from consumer-grade encrypted storage to enterprise-grade object storage — but MEGA's bandwidth limits make the migration tricky. RcloneView gives you a visual, manageable way to plan, execute, and verify the entire migration.

MEGA is a popular cloud storage service known for its generous free tier and built-in end-to-end encryption. However, as your storage needs grow, or as you move toward professional infrastructure, Amazon S3's scalability, durability (designed for 99.999999999%, or "eleven nines"), fine-grained access controls, and ecosystem integrations make it a compelling destination.

The catch is that MEGA imposes bandwidth limits on downloads, which means you cannot simply pull everything out at once. A successful migration requires planning, patience, and the right tooling. RcloneView provides the visual interface, scheduling, and monitoring capabilities to manage this process from start to finish without touching the command line.

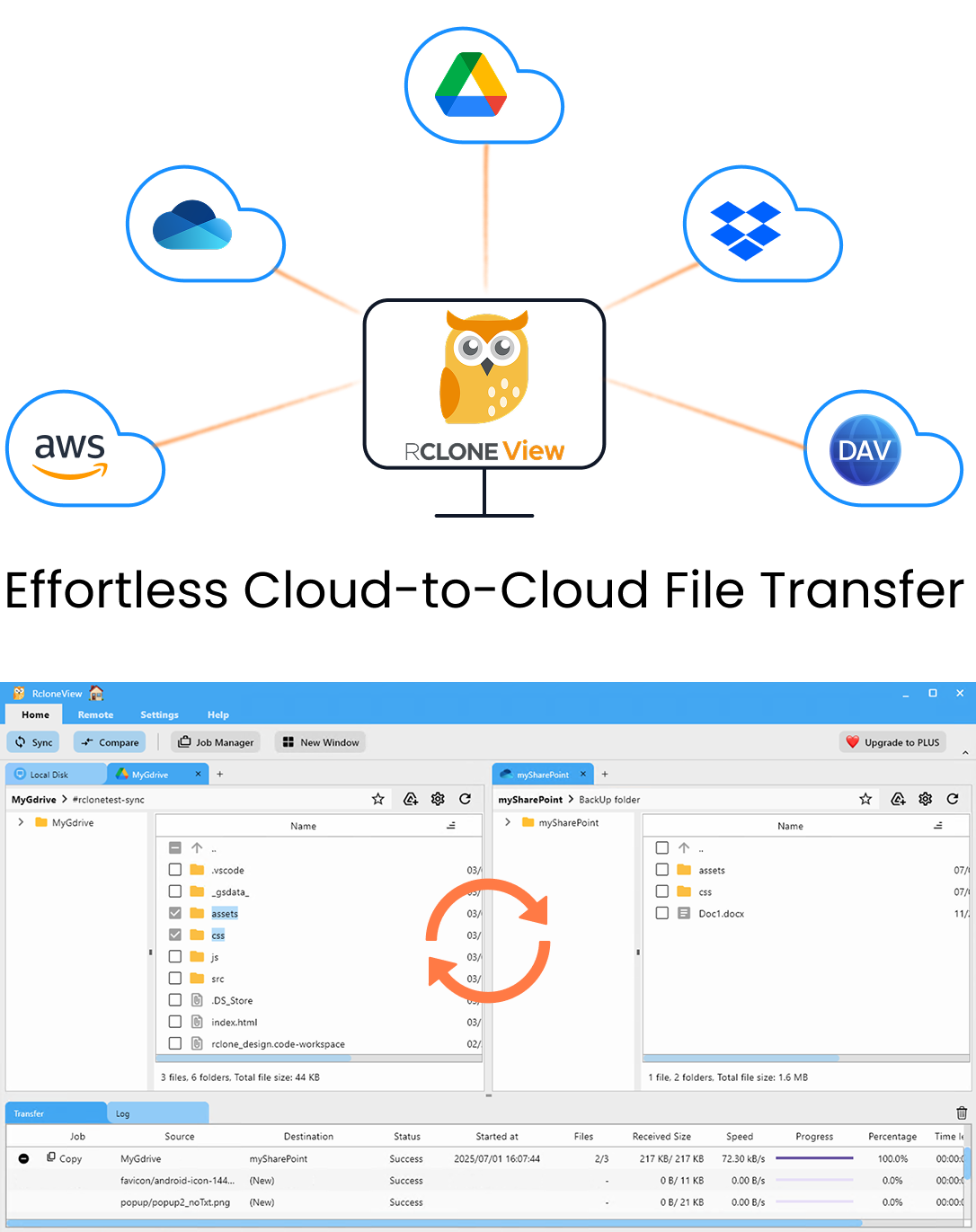

Why Migrate from MEGA to Amazon S3

Before diving into the how, it helps to understand the why. Common reasons for this migration include:

- Scalability: S3 handles petabytes without performance degradation. MEGA plans cap out at fixed storage tiers.

- Durability and availability: S3 is designed for 99.999999999% durability and offers configurable availability across regions and availability zones.

- Access controls: IAM policies, bucket policies, and presigned URLs give you granular control over who can access what.

- Ecosystem integration: S3 works natively with AWS Lambda, CloudFront, Athena, and thousands of third-party tools.

- Storage classes: S3 Glacier, Glacier Deep Archive, Intelligent-Tiering, and other classes let you optimize costs based on access patterns.

- Compliance: S3 is covered by AWS compliance programs (such as SOC and PCI DSS, plus HIPAA eligibility) that many organizations require.

Planning Your Migration

A successful MEGA-to-S3 migration starts with a plan. Here is what to consider:

Inventory Your MEGA Storage

Before transferring anything, understand what you have:

- Total storage used — know the volume of data you need to move.

- Folder structure — decide whether to replicate MEGA's directory layout on S3 or reorganize during migration.

- File types and sizes — large media files will take longer per file; millions of small files will take longer due to API overhead.

Understand MEGA's Bandwidth Limits

MEGA imposes download bandwidth limits that vary by account type:

- Free accounts have a limited transfer quota that resets periodically (typically every few hours).

- Pro accounts have higher quotas, but they are still finite per period.

This means you may not be able to download your entire library in one session. Plan for a migration that runs in stages over days or even weeks, depending on your data volume and account tier.
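To get a feel for how long a staged migration might take, a rough estimate like the one below can help. It is only a sketch: the quota size and reset window are hypothetical inputs you would replace with the figures for your own account, not published MEGA values.

```python
import math


def estimate_stages(total_gb: float, quota_gb_per_window: float,
                    window_hours: float) -> tuple[int, float]:
    """Return (number of runs, approximate elapsed days) for a staged migration."""
    if total_gb < 0 or quota_gb_per_window <= 0 or window_hours <= 0:
        raise ValueError("sizes must be non-negative and quota/window positive")
    runs = math.ceil(total_gb / quota_gb_per_window)
    # The first run starts immediately, so only the gaps between runs add waiting time.
    elapsed_hours = max(runs - 1, 0) * window_hours
    return runs, elapsed_hours / 24


# Hypothetical example: 200 GB to move, 5 GB usable per 6-hour window.
runs, days = estimate_stages(total_gb=200, quota_gb_per_window=5, window_hours=6)
print(runs, days)  # 40 9.75
```

The estimate ignores transfer speed and failed runs, so treat it as a lower bound when planning.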

Prepare Your S3 Bucket

On the AWS side, create and configure your target bucket before starting:

- Create an S3 bucket in your preferred AWS region.

- Configure access: create an IAM user or role with `s3:PutObject`, `s3:GetObject`, and `s3:ListBucket` permissions.

- Choose a storage class: Standard for frequently accessed files, Intelligent-Tiering for mixed access patterns, or Glacier for archival data.

- Enable versioning (optional but recommended) to protect against accidental overwrites during migration.
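The permissions listed above could be expressed as an IAM policy along the following lines. This is a minimal sketch: the bucket name `example-migration-bucket` and the `mega/` prefix are placeholders, and your organization may require tighter or additional statements.

```python
import json


def build_migration_policy(bucket: str, prefix: str = "") -> dict:
    """Build an IAM policy document granting the three permissions named above."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # s3:ListBucket is a bucket-level action, so it targets the bucket ARN.
                "Sid": "ListTargetBucket",
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": f"arn:aws:s3:::{bucket}",
            },
            {
                # Object-level actions target keys under the (optional) prefix.
                "Sid": "ReadWriteObjects",
                "Effect": "Allow",
                "Action": ["s3:PutObject", "s3:GetObject"],
                "Resource": f"arn:aws:s3:::{bucket}/{prefix}*",
            },
        ],
    }


# Placeholder bucket and prefix, for illustration only.
print(json.dumps(build_migration_policy("example-migration-bucket", "mega/"), indent=2))
```

Splitting the statements matters because `s3:ListBucket` applies to the bucket itself, while `s3:PutObject` and `s3:GetObject` apply to the objects inside it.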

Setting Up Both Remotes in RcloneView

With your plan in place, configure both cloud connections in RcloneView.

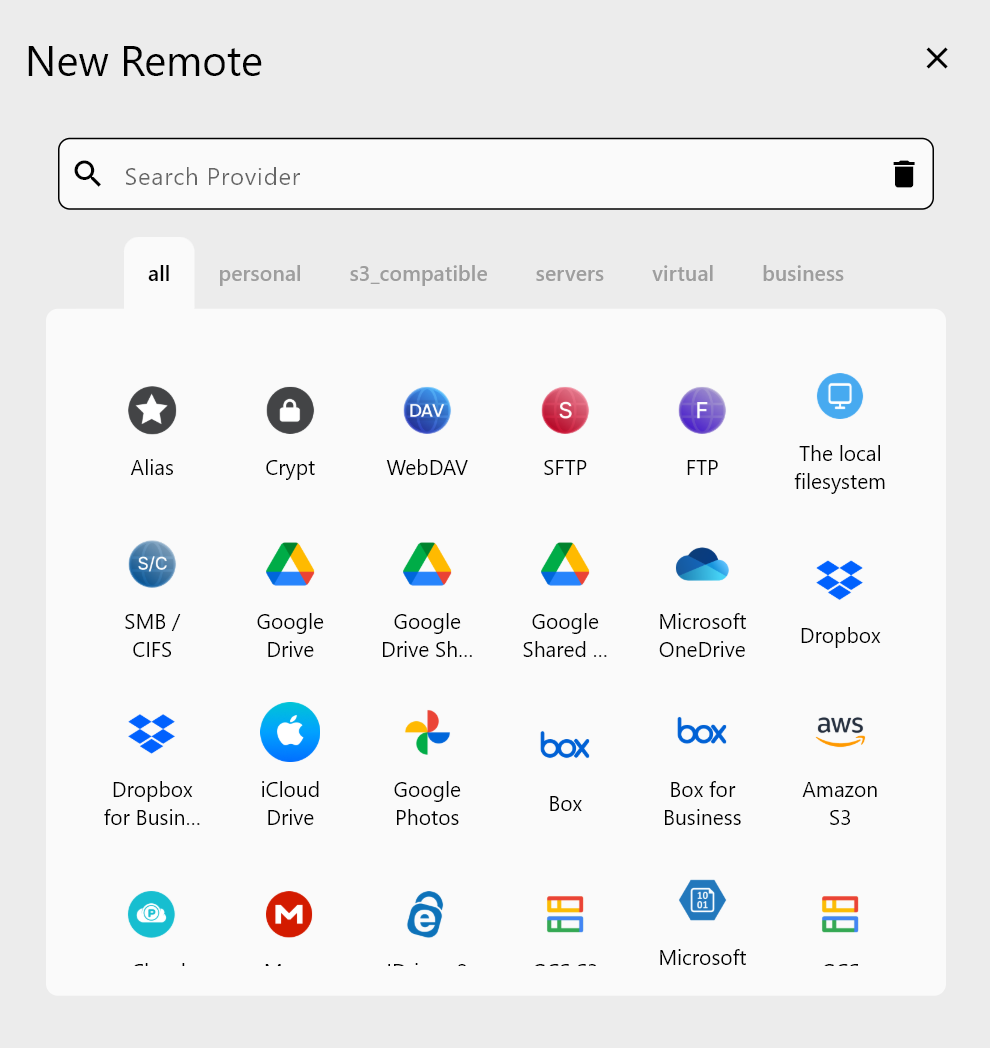

Adding the MEGA Remote

- Open RcloneView and click + New Remote.

- Select MEGA from the provider list.

- Enter your MEGA email address and password.

- Name the remote (e.g., `MyMEGA`) and save.

Adding the Amazon S3 Remote

- Click + New Remote again.

- Select Amazon S3 from the provider list.

- Enter your AWS Access Key ID and Secret Access Key.

- Specify the region and bucket name.

- Name the remote (e.g., `MyS3`) and save.

Both remotes will now appear in RcloneView's Explorer, ready for browsing and transfers.
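For readers curious what these two remotes amount to underneath, the sketch below renders them as rclone-style configuration sections. It assumes RcloneView's remotes map onto standard rclone config keys (`type`, `user`, `pass`, `provider`, `access_key_id`, `secret_access_key`, `region`); all values shown are placeholders, and rclone expects the MEGA password in obscured form rather than plain text.

```python
import configparser
import io


def render_remotes(mega_user: str, obscured_pass: str, access_key_id: str,
                   secret_access_key: str, region: str) -> str:
    """Render two remote definitions in rclone's INI-style config format."""
    # interpolation=None keeps '%' characters in secrets from being interpreted.
    config = configparser.ConfigParser(interpolation=None)
    config["MyMEGA"] = {
        "type": "mega",
        "user": mega_user,
        "pass": obscured_pass,  # must already be obscured, e.g. via `rclone obscure`
    }
    config["MyS3"] = {
        "type": "s3",
        "provider": "AWS",
        "access_key_id": access_key_id,
        "secret_access_key": secret_access_key,
        "region": region,
    }
    buffer = io.StringIO()
    config.write(buffer)
    return buffer.getvalue()


# Placeholder values only; never commit real credentials.
print(render_remotes("you@example.com", "OBSCURED_PASSWORD",
                     "AKIAEXAMPLE", "SECRETEXAMPLE", "us-east-1"))
```

In normal use the New Remote wizard handles all of this for you, so there is no need to write the configuration by hand.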

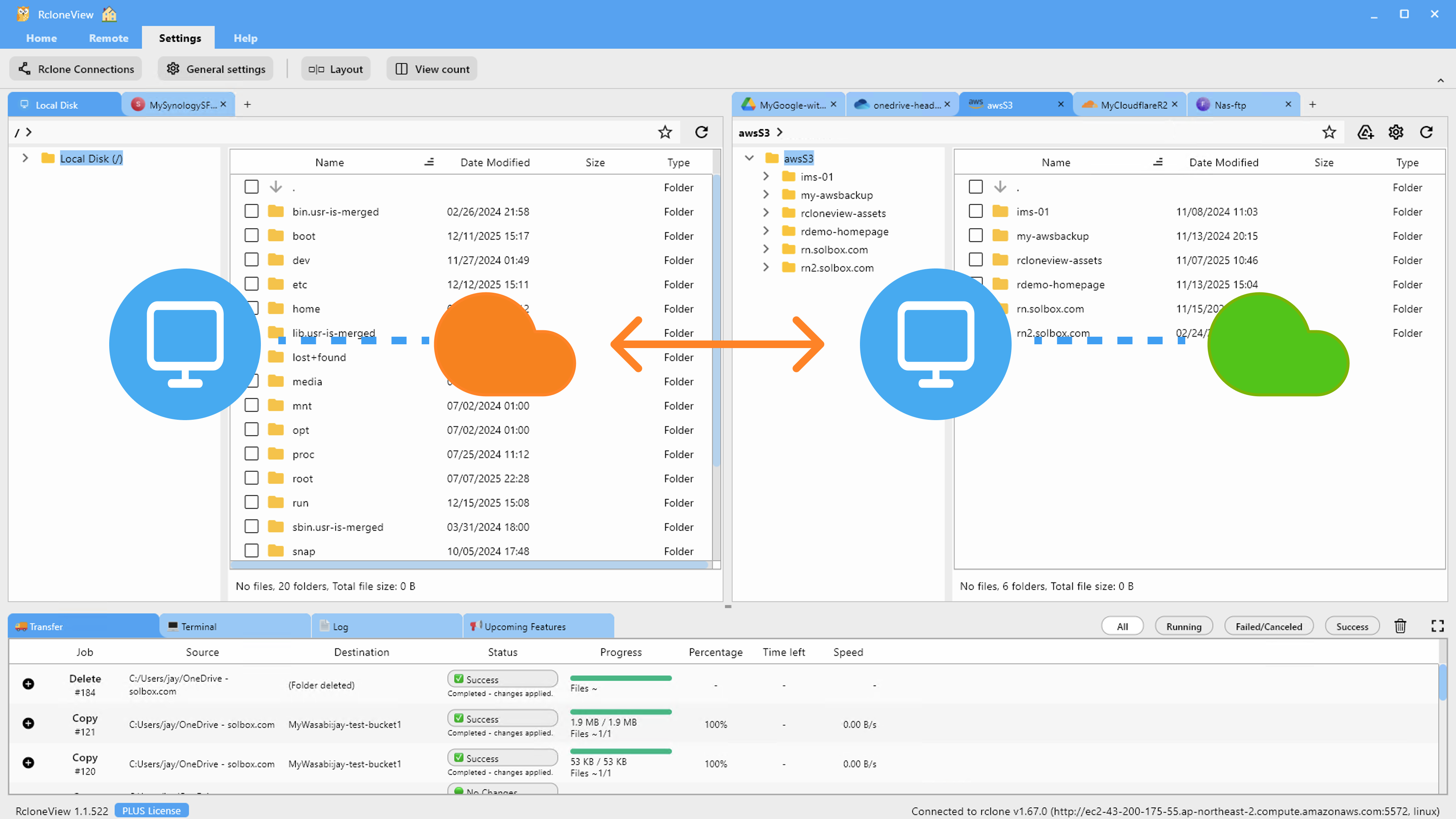

Running the Migration

With both remotes configured, open MEGA in one Explorer pane and S3 in the other. You now have a visual overview of source and destination side by side.

Step 1: Start with a Test Transfer

Before migrating everything, test with a small folder:

- Select a folder in the MEGA pane that contains a mix of file types and sizes.

- Drag it to the S3 pane, targeting your desired destination path.

- Monitor the transfer in RcloneView's progress panel.

- Verify the files appear correctly in S3 with expected sizes.

This confirms that both remotes are configured correctly and that transfers flow as expected.

Step 2: Create a Migration Job

For the full migration, create a formal job:

- Set up a Copy job from MEGA (source) to S3 (destination).

- Configure the source path (root `/` for everything, or specific folders).

- Configure the destination path on S3.

- Run a Dry Run first to see what will be transferred without actually moving data.
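Because RcloneView is a GUI for rclone, a Copy job with Dry Run enabled corresponds roughly to an `rclone copy` invocation with the `--dry-run` flag. The sketch below only builds and prints such a command; the remote names match the examples above, and the bucket name and destination path are placeholders.

```python
import shlex


def build_copy_command(source: str, destination: str, dry_run: bool = True) -> list[str]:
    """Build the argument list for an rclone copy, optionally as a dry run."""
    cmd = ["rclone", "copy", source, destination, "--progress"]
    if dry_run:
        # --dry-run reports what would be transferred without moving any data.
        cmd.append("--dry-run")
    return cmd


# Placeholder paths: the whole MEGA remote into a "mega" folder in the bucket.
preview = build_copy_command("MyMEGA:", "MyS3:example-migration-bucket/mega")
print(shlex.join(preview))
```

Defaulting `dry_run` to `True` is a deliberate choice in this sketch: the real transfer has to be requested explicitly.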

Step 3: Execute in Stages

Due to MEGA's bandwidth limits, you may need to run the migration in stages:

- Start the copy job. RcloneView will begin transferring files.

- When MEGA's bandwidth limit is reached, the transfer will slow down or pause. You will see errors or slowdowns in the monitoring panel.

- Wait for the quota to reset (typically a few hours for free accounts; the exact window depends on your plan).

- Re-run the same copy job. RcloneView will skip files that were already transferred successfully and resume with the remaining files.

- Repeat until all files are migrated.

This incremental approach is one of the biggest advantages of using RcloneView for MEGA migrations. Each run picks up where the last one left off, so you never re-transfer data unnecessarily.
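The staged behavior can be illustrated with a small simulation. This is a simplified model, not rclone's actual logic: it treats a file as already transferred when the destination holds the same path with the same size, whereas rclone also considers modification times or checksums. File names, sizes, and the quota are made up.

```python
def run_copy_stage(source: dict[str, int], dest: dict[str, int], quota: int) -> int:
    """Copy files missing from dest until the quota is used up; return units moved."""
    used = 0
    for path in sorted(source):
        size = source[path]
        if dest.get(path) == size:
            continue  # already transferred in an earlier run, so skip it
        if used + size > quota:
            break  # quota exhausted: stop and wait for the next window
        dest[path] = size
        used += size
    return used


def migrate_in_stages(source: dict[str, int], quota: int) -> tuple[int, dict[str, int]]:
    """Re-run the same copy stage until the destination matches the source."""
    dest: dict[str, int] = {}
    runs = 0
    while dest != source:
        moved = run_copy_stage(source, dest, quota)
        runs += 1
        if moved == 0:
            # A single file larger than the quota can never complete in this model.
            raise RuntimeError("a remaining file does not fit in one quota window")
    return runs, dest


files = {"a.bin": 40, "b.bin": 30, "c.bin": 50, "d.bin": 20}
runs, dest = migrate_in_stages(files, quota=60)
print(runs, dest == files)  # 4 True
```

The guard for a run that moves nothing mirrors a real risk: a file too large for one quota window will not finish no matter how often the job is repeated.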

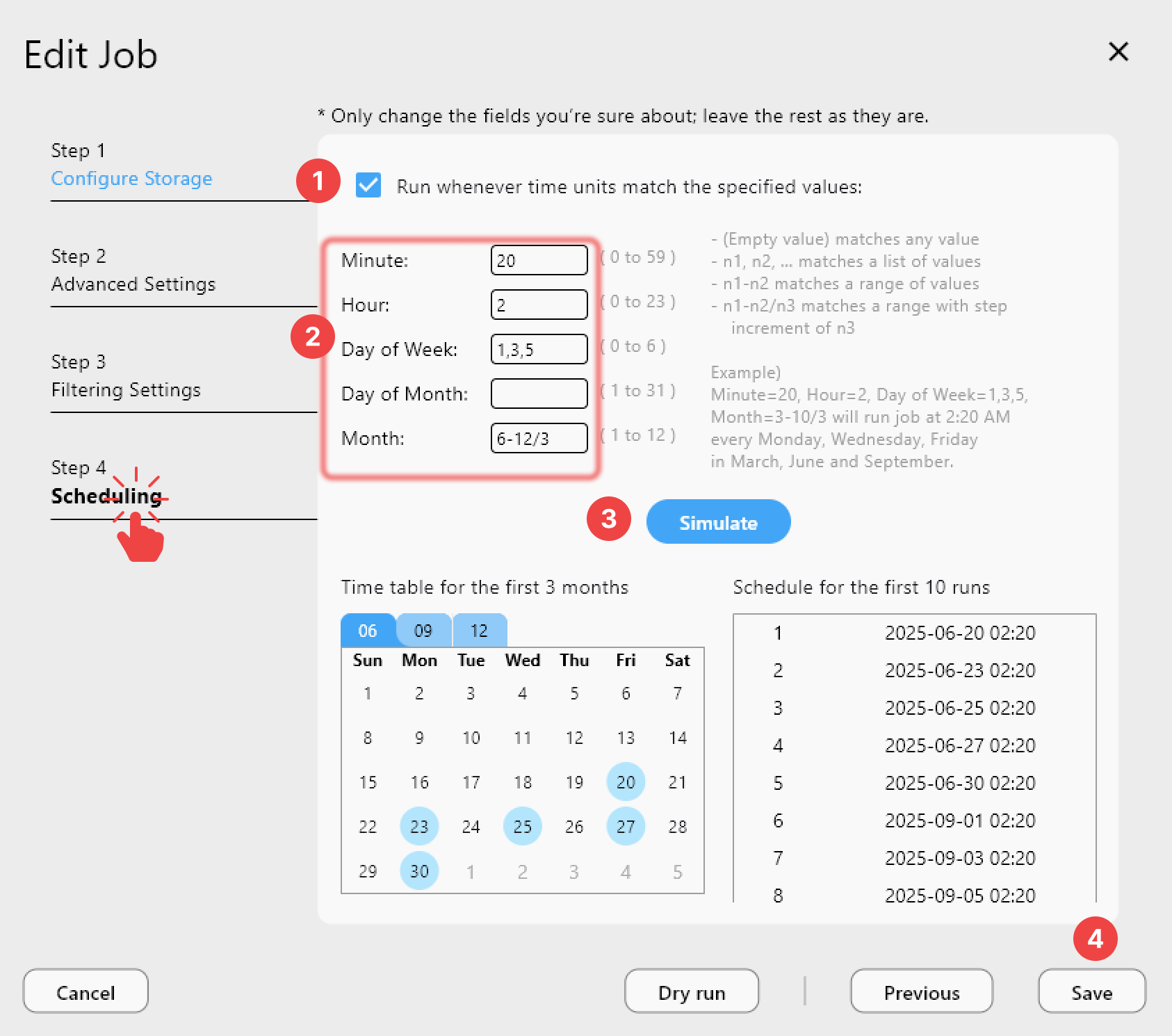

Scheduling the Migration Over Time

If your MEGA library is large, manually re-running the job every few hours becomes tedious. Use RcloneView's job scheduling to automate it:

- Create the Copy job as described above.

- Open the Job Scheduling panel.

- Set the job to run every 6-8 hours (or whatever interval aligns with your MEGA quota reset cycle).

- Enable the schedule.

RcloneView will automatically attempt the transfer at each interval. Files already on S3 are skipped, so each run only processes the remaining data. Over several days, your entire MEGA library will arrive on S3.

Verifying Migration Integrity

After all files have been transferred, verify that nothing was missed or corrupted:

Folder Comparison

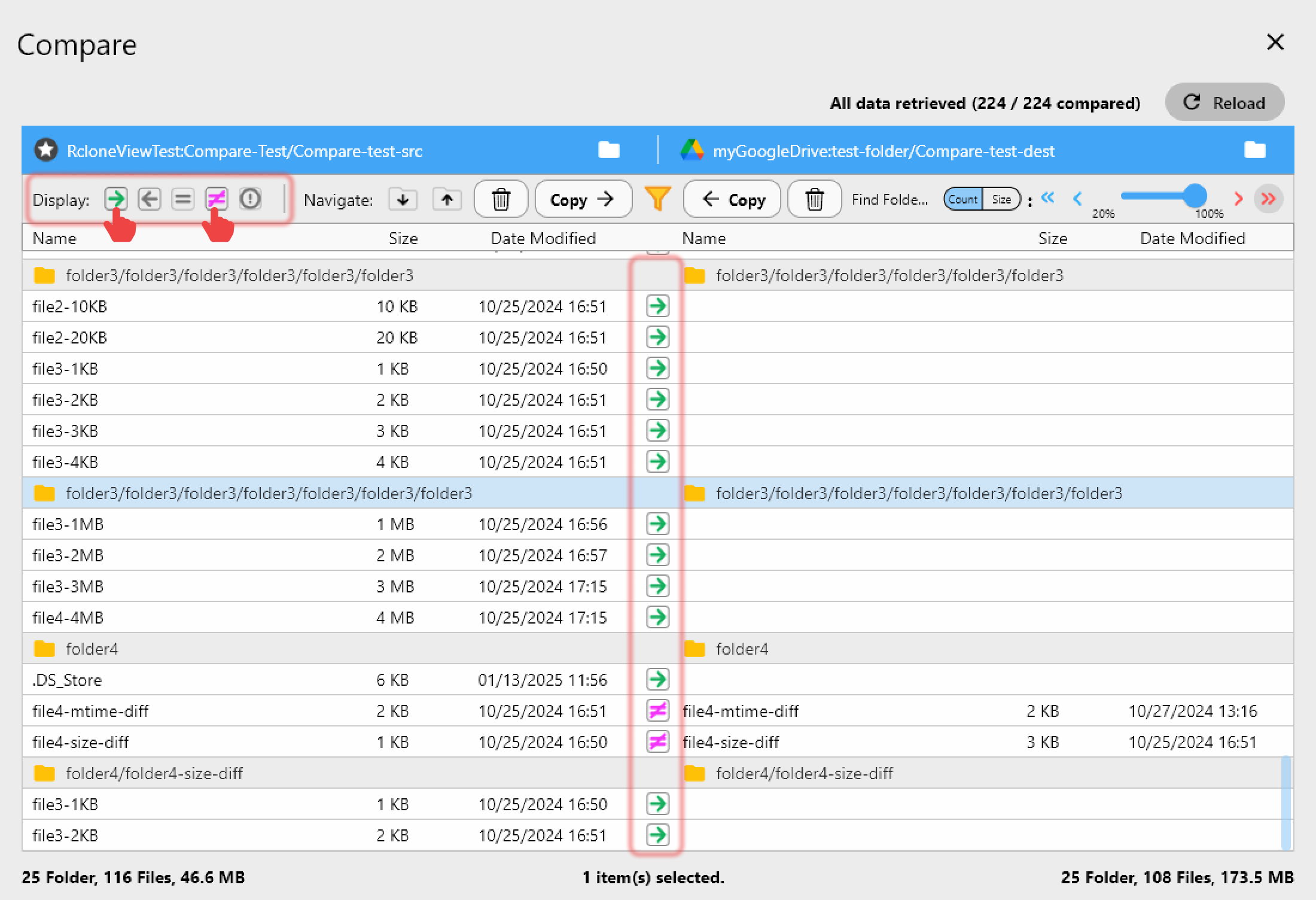

Use RcloneView's Compare feature to check MEGA against S3:

- Open MEGA in one pane and S3 in the other.

- Navigate to matching directories.

- Run a folder comparison.

- Review the results — look for files that exist on MEGA but not on S3 (missed transfers) or files with different sizes (potential corruption).
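The logic behind such a comparison can be sketched as follows. The listings here are plain dictionaries of path to size, a hypothetical stand-in for whatever each side reports; this is not how RcloneView's Compare feature is implemented, and it checks sizes only, not checksums.

```python
def compare_listings(source: dict[str, int], dest: dict[str, int]) -> dict[str, list[str]]:
    """Classify paths as missing on the destination, size-mismatched, or destination-only."""
    return {
        "missing_on_dest": sorted(p for p in source if p not in dest),
        "size_mismatch": sorted(p for p in source if p in dest and source[p] != dest[p]),
        "only_on_dest": sorted(p for p in dest if p not in source),
    }


# Made-up listings for illustration.
mega = {"docs/a.pdf": 1200, "docs/b.pdf": 3400, "img/c.jpg": 560}
s3 = {"docs/a.pdf": 1200, "img/c.jpg": 512, "tmp/old.txt": 10}

report = compare_listings(mega, s3)
print(report)
```

Here `docs/b.pdf` would show up as a missed transfer and `img/c.jpg` as a size mismatch worth re-copying.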

Check File Counts and Sizes

Spot-check several directories to confirm:

- The number of files on S3 matches MEGA.

- File sizes are consistent. Because MEGA encrypts stored files, sizes shown in some metadata views may differ slightly from what S3 reports, but the actual content should match.

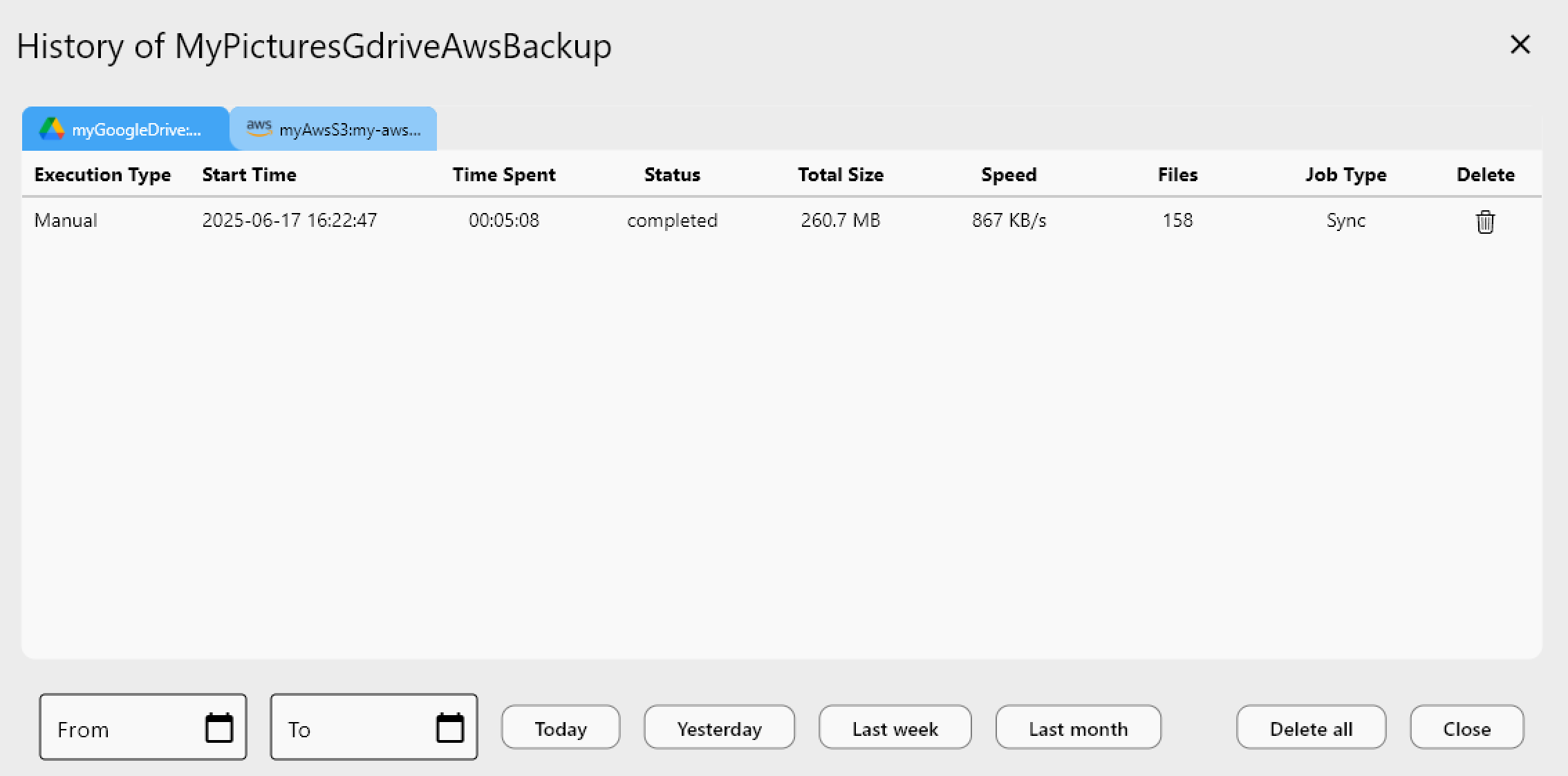

Review Job History

Check the Job History panel in RcloneView. Look for:

- Any runs that reported errors.

- Runs where the transferred file count was zero (indicating the migration may be complete).

- Any skipped files that need attention.
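The same checks can be written down as a simple rule. The `JobRun` record below is a hypothetical structure invented for this sketch, not RcloneView's actual history format; you would fill it in from whatever the Job History panel shows.

```python
from dataclasses import dataclass


@dataclass
class JobRun:
    transferred: int  # files transferred in this run
    errors: int       # errors reported in this run


def runs_with_errors(history: list[JobRun]) -> list[int]:
    """Return the positions (0-based) of runs that reported any error."""
    return [i for i, run in enumerate(history) if run.errors > 0]


def migration_looks_complete(history: list[JobRun]) -> bool:
    """True when the most recent run moved nothing and reported no errors."""
    if not history:
        return False
    last = history[-1]
    return last.transferred == 0 and last.errors == 0


# Made-up history: two productive runs, one quota failure, then a clean empty run.
history = [JobRun(120, 0), JobRun(95, 3), JobRun(40, 0), JobRun(0, 0)]
print(runs_with_errors(history), migration_looks_complete(history))  # [1] True
```

A zero-transfer run only suggests completion; confirm it with a folder comparison before deleting anything from MEGA.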

Handling Common Issues

MEGA Bandwidth Exhausted

If you see transfer errors related to bandwidth or quota, this is MEGA's download limit in action. Wait for the quota to reset and re-run the job. RcloneView will resume from where it stopped.

Large Files Timing Out

Very large files (multiple gigabytes) may fail if they cannot complete within MEGA's quota window. Solutions:

- Upgrade your MEGA plan temporarily for higher bandwidth.

- Transfer large files individually right after a quota reset, when you have the most quota available.

S3 Permission Errors

If files fail to upload to S3, verify your IAM user has the correct permissions. At minimum you need s3:PutObject on the target bucket and prefix.

Duplicate File Names

MEGA allows file names that may conflict with S3 naming conventions. Watch for special characters, very long paths (S3 object keys are limited to 1,024 bytes), and names that differ only by case. S3 keys are case-sensitive, so such names become separate objects, which can confuse case-insensitive tools later.
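A quick pre-flight scan of your file paths can surface these problems before the migration starts. The sketch below works on a plain list of paths (how you obtain that list is up to you) and flags three things: names that differ only by case, exact duplicate paths (rclone's MEGA documentation notes that MEGA can hold two files with the same name and path), and keys longer than S3's 1,024-byte limit.

```python
from collections import Counter, defaultdict

MAX_KEY_BYTES = 1024  # S3 object keys may be at most 1,024 bytes of UTF-8


def find_key_problems(paths: list[str]) -> dict[str, list]:
    """Flag case-only collisions, exact duplicates, and over-long keys."""
    by_folded: dict[str, set[str]] = defaultdict(set)
    for path in paths:
        by_folded[path.casefold()].add(path)

    counts = Counter(paths)
    return {
        # Groups of distinct paths that are identical once case is ignored.
        "case_collisions": sorted(sorted(g) for g in by_folded.values() if len(g) > 1),
        # Paths that appear more than once with exactly the same spelling.
        "exact_duplicates": sorted(p for p, n in counts.items() if n > 1),
        # Paths whose UTF-8 encoding exceeds the S3 key length limit.
        "too_long": sorted(p for p in counts if len(p.encode("utf-8")) > MAX_KEY_BYTES),
    }


# Made-up paths for illustration.
paths = ["Docs/Report.pdf", "docs/report.pdf", "photos/a.jpg", "photos/a.jpg", "x" * 1025]
problems = find_key_problems(paths)
print(problems["case_collisions"], problems["exact_duplicates"], len(problems["too_long"]))
```

Length is measured in encoded bytes rather than characters, since non-ASCII names take more than one byte per character.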

Getting Started

- Download RcloneView from rcloneview.com.

- Connect MEGA using your account credentials in the New Remote wizard.

- Connect Amazon S3 with your AWS access keys and bucket details.

- Plan, execute, and verify — migrate at MEGA's pace, monitored and managed visually.

MEGA got you started. S3 takes you further. RcloneView bridges the gap.
