Fix S3 Access Denied and Permission Errors with RcloneView

"Access Denied" from an S3-compatible storage provider almost always means a permissions misconfiguration — not a bug. This guide walks through every common cause and its fix, from IAM policies to bucket ACLs to credential typos.

S3 permission errors are frustrating because they're often opaque: the API returns 403 Access Denied without explaining which specific permission is missing. The problem could be IAM policy, bucket policy, bucket ACL, object ACL, encryption settings, or simply wrong credentials. RcloneView surfaces these errors clearly in job history — this guide helps you trace them to their source.

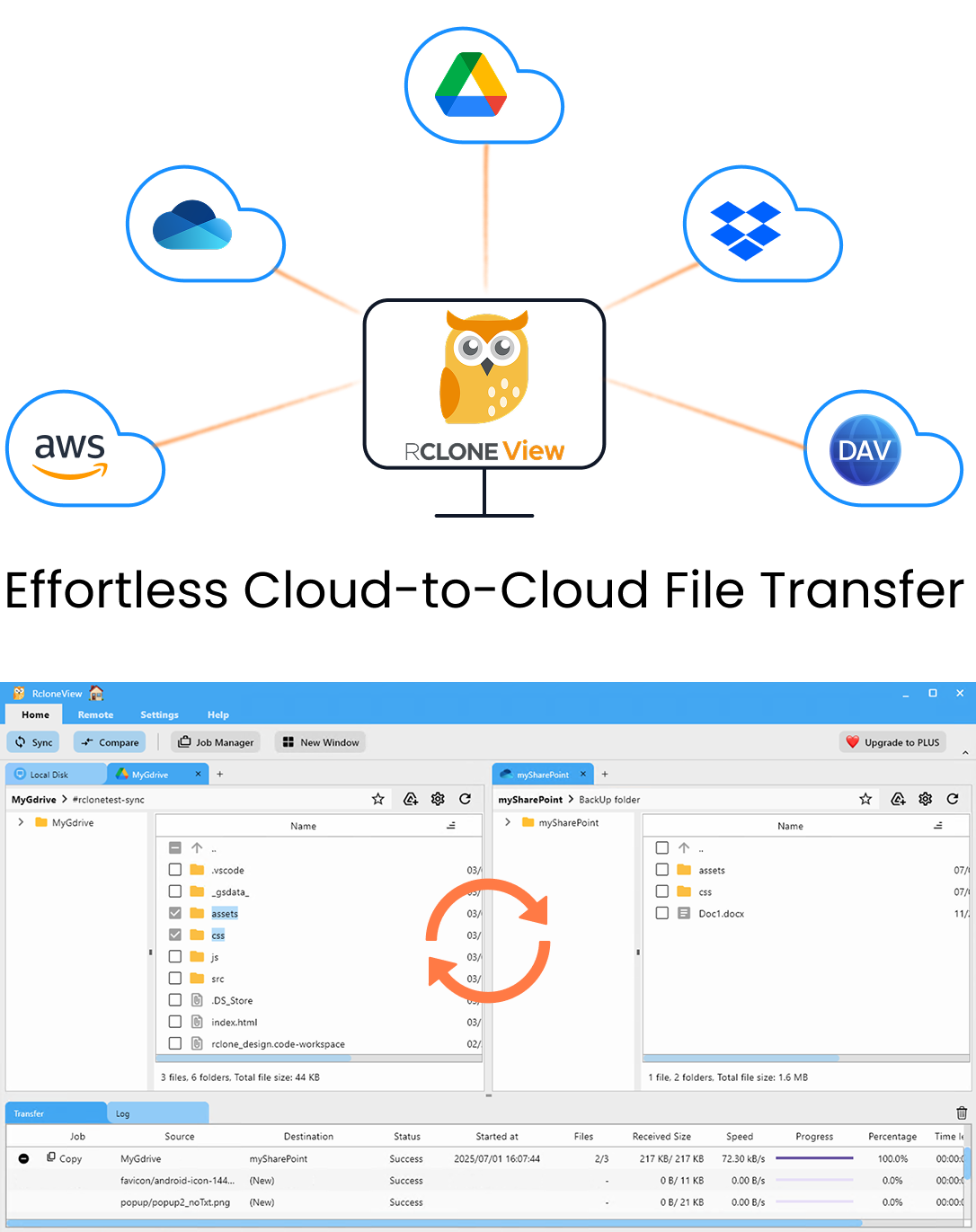

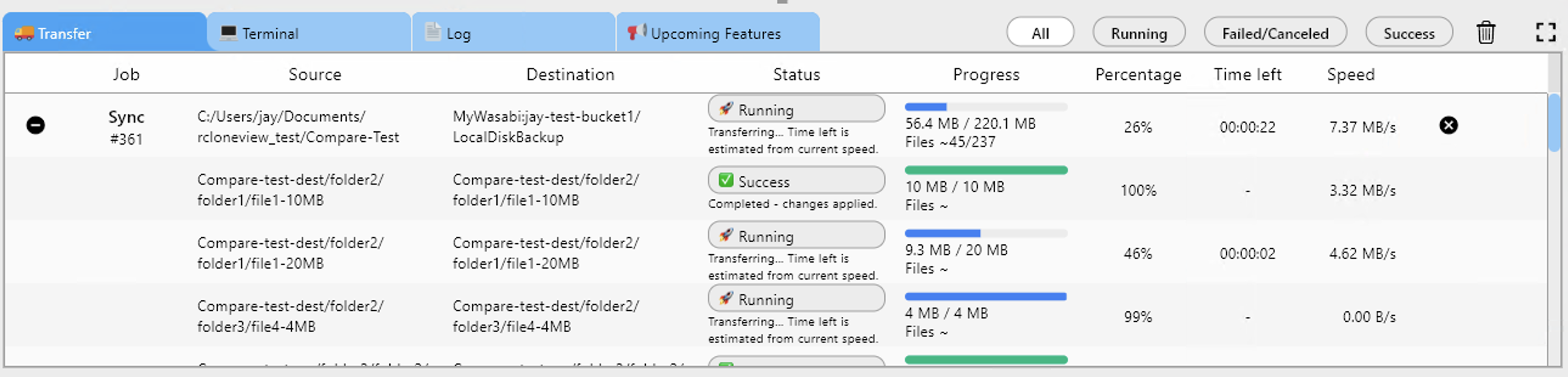

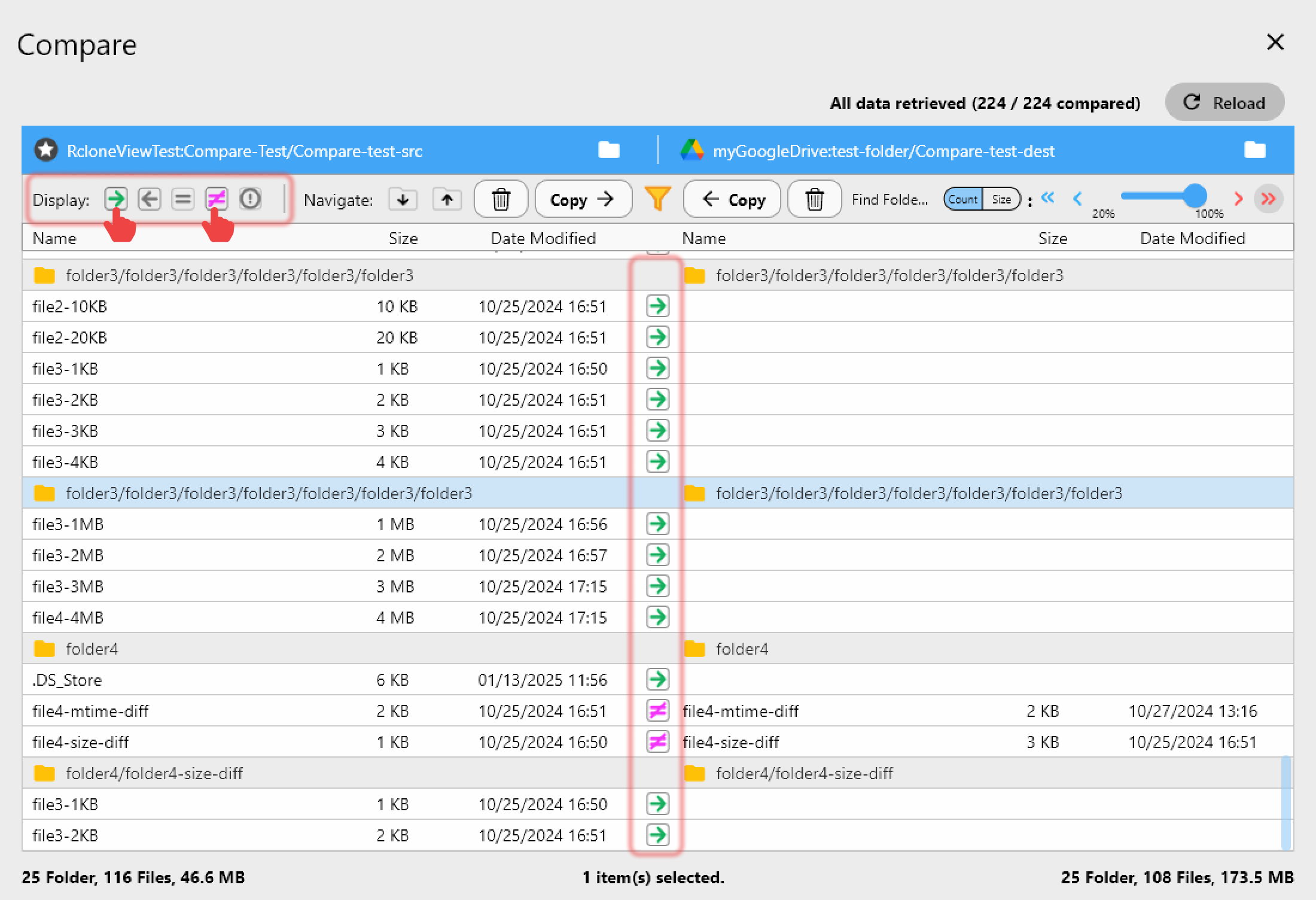

Manage & Sync All Clouds in One Place

RcloneView is a cross-platform GUI for rclone. Compare folders, transfer or sync files, and automate multi-cloud workflows with a clean, visual interface.

- One-click jobs: Copy · Sync · Compare

- Schedulers & history for reliable automation

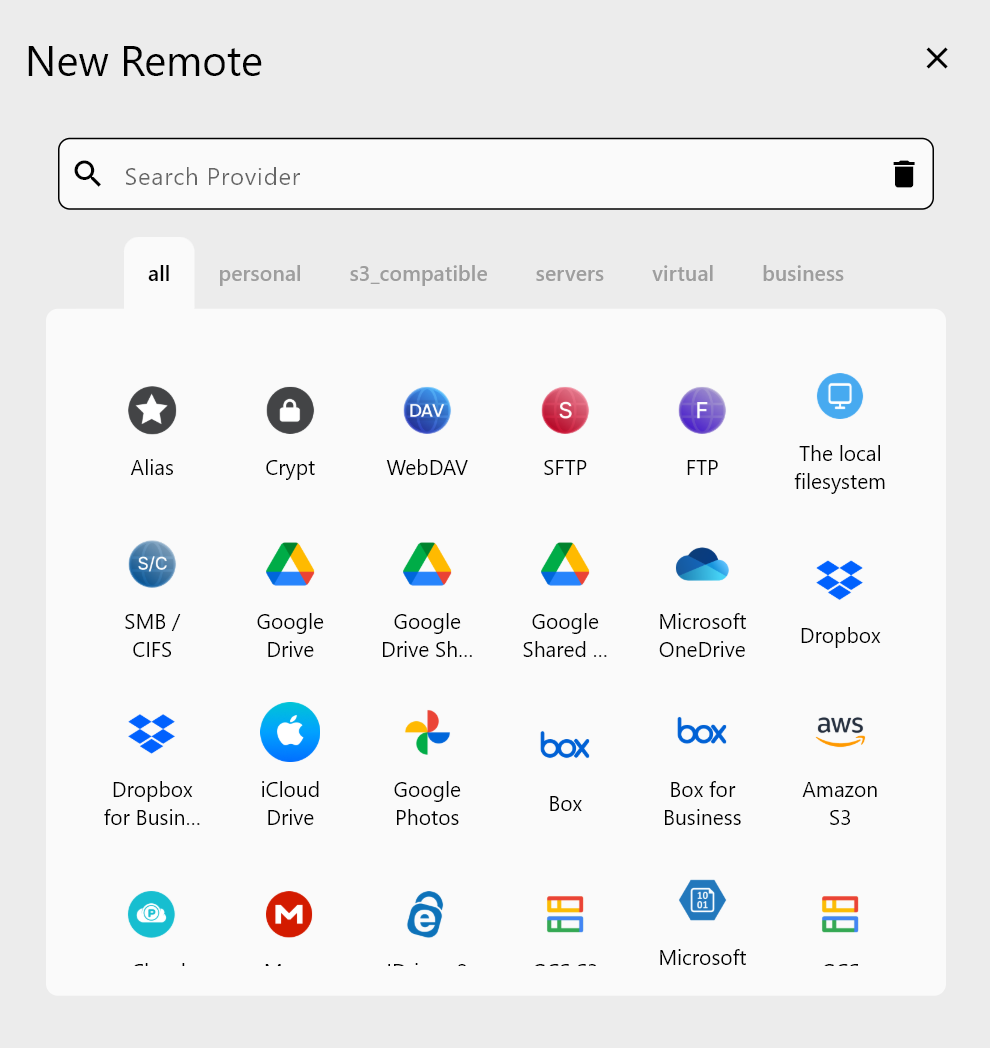

- Works with Google Drive, OneDrive, Dropbox, S3, WebDAV, SFTP and more

Free core features. Plus automations available.

Diagnosing the Error

The first step is to read the exact error message in RcloneView's job history or in the terminal output.

Common error patterns and what they indicate:

| Error Message | Likely Cause |
|---|---|
| AccessDenied: Access Denied | IAM/bucket policy; wrong credentials |
| 403 Forbidden | Bucket policy or ACL block |
| NoCredentialProviders: no valid credentials | Credentials not configured |
| InvalidAccessKeyId | Wrong access key or typo |
| SignatureDoesNotMatch | Wrong secret key |
| AllAccessDisabled: All access to this object has been disabled | Access disabled by the provider (contact support) |
| AccountProblem | AWS account issue (billing, suspension) |
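If you want to triage many failed jobs at once, part of the table can be turned into a simple lookup. The sketch below is a hypothetical Python helper (likely_cause and ERROR_CAUSES are illustrative names, not part of RcloneView or rclone) that does a case-sensitive substring match on a copied log line and returns the first matching cause. It checks specific error codes before the generic 403 so that a line containing both is attributed to the more specific code.

```python
# Hypothetical lookup built from the error table above (not an RcloneView or rclone API).
# Specific error codes come first; the generic "403 Forbidden" is checked last.
ERROR_CAUSES = [
    ("InvalidAccessKeyId", "Wrong access key or typo"),
    ("SignatureDoesNotMatch", "Wrong secret key"),
    ("NoCredentialProviders", "Credentials not configured"),
    ("AccountProblem", "AWS account issue (billing, suspension)"),
    ("AccessDenied", "IAM/bucket policy; wrong credentials"),
    ("403 Forbidden", "Bucket policy or ACL block"),
]


def likely_cause(log_line: str) -> str:
    """Return the likely cause for the first known error code found in a log line."""
    for code, cause in ERROR_CAUSES:
        if code in log_line:  # plain, case-sensitive substring match
            return cause
    return "Unknown: check the full error message"


print(likely_cause("AccessDenied: Access Denied status code: 403"))
# prints: IAM/bucket policy; wrong credentials
```

Extend the list with any provider-specific codes you see in Job History.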

Fix 1: Wrong or Missing Credentials

The most common cause of AccessDenied is simply wrong credentials in the RcloneView remote configuration.

Check your credentials:

- Open Remotes in RcloneView.

- Select the S3 remote and click Edit.

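- Verify that the Access Key ID matches an active key in your AWS IAM console (or your provider's equivalent) and that the Secret Access Key is the one issued with it. AWS shows the secret only once, at creation; if it's lost, create a new key pair.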

- Re-paste the keys if in doubt; invisible leading or trailing whitespace is a common source of errors.

For Wasabi, IDrive e2, and other S3-compatible providers, also verify that the Endpoint URL matches the provider's current endpoint for your region.
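Pasted keys often carry invisible characters that are hard to spot in a form field. The following is a minimal, hypothetical Python check (check_remote_fields is an illustrative name) that flags empty values, leading or trailing whitespace, and embedded whitespace or non-printable characters such as zero-width spaces in the two keys. It also does a basic shape check on an endpoint, assuming that an endpoint without a scheme is meant to be HTTPS, and warns about plain http:// because a bucket policy that enforces HTTPS would deny those requests. It cannot tell you whether the endpoint is the right one for your region; compare that against your provider's documentation.

```python
from urllib.parse import urlparse


def check_remote_fields(access_key: str, secret_key: str, endpoint: str = "") -> list[str]:
    """Return likely problems with pasted S3 remote settings (hypothetical helper)."""
    problems = []
    for name, value in (("access key", access_key), ("secret key", secret_key)):
        if not value:
            problems.append(f"{name} is empty")
        elif value != value.strip():
            problems.append(f"{name} has leading or trailing whitespace")
        elif any(ch.isspace() or not ch.isprintable() for ch in value):
            # catches tabs, newlines, and format characters such as zero-width spaces
            problems.append(f"{name} contains whitespace or non-printable characters")
    if endpoint:
        # assumption: an endpoint given without a scheme is meant to be https
        parsed = urlparse(endpoint if "://" in endpoint else "https://" + endpoint)
        if not parsed.hostname:
            problems.append("endpoint has no host name")
        elif parsed.scheme != "https":
            problems.append("endpoint does not use https")
    return problems


print(check_remote_fields(" AKIAEXAMPLE", "secret", "s3.wasabisys.com"))
```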

Fix 2: Insufficient IAM Permissions

If credentials are correct, the IAM user or role likely lacks the necessary S3 permissions.

Minimum permissions for RcloneView to function:

```json
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "s3:ListBucket",
        "s3:GetBucketLocation",
        "s3:GetObject",
        "s3:PutObject",
        "s3:DeleteObject",
        "s3:GetObjectAcl",
        "s3:PutObjectAcl"
      ],
      "Resource": [
        "arn:aws:s3:::your-bucket-name",
        "arn:aws:s3:::your-bucket-name/*"
      ]
    }
  ]
}
```

Attach this policy to the IAM user or role that RcloneView uses. To let RcloneView list all buckets in the account, also add a separate statement that grants s3:ListAllMyBuckets on "Resource": "*".
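If you manage policies as code, the same policy can be generated per bucket. This Python sketch (build_rclone_policy is a hypothetical helper) reproduces the statement above and, when asked, adds s3:ListAllMyBuckets as its own statement, since that action is not scoped to a bucket and needs "Resource": "*".

```python
import json


def build_rclone_policy(bucket: str, list_all_buckets: bool = False) -> dict:
    """Build the bucket-scoped IAM policy shown above (hypothetical helper)."""
    statements = [
        {
            "Effect": "Allow",
            "Action": [
                "s3:ListBucket",
                "s3:GetBucketLocation",
                "s3:GetObject",
                "s3:PutObject",
                "s3:DeleteObject",
                "s3:GetObjectAcl",
                "s3:PutObjectAcl",
            ],
            # Bucket-level actions match the bucket ARN; object-level actions match bucket/*.
            "Resource": [f"arn:aws:s3:::{bucket}", f"arn:aws:s3:::{bucket}/*"],
        }
    ]
    if list_all_buckets:
        # ListAllMyBuckets is not scoped to a bucket, so it gets a separate statement.
        statements.append({"Effect": "Allow", "Action": "s3:ListAllMyBuckets", "Resource": "*"})
    return {"Version": "2012-10-17", "Statement": statements}


print(json.dumps(build_rclone_policy("your-bucket-name", list_all_buckets=True), indent=2))
```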

Fix 3: Bucket Policy Blocking Access

An explicit Deny in a bucket policy overrides any Allow granted by IAM. Check the bucket policy in the AWS console:

- Navigate to S3 → Your Bucket → Permissions → Bucket Policy.

- Look for any Deny statements that might apply to your IAM user.

- Also check the Block Public Access settings: if you're trying to set public ACLs on objects, these settings will block it.

A catch-all Deny statement with "Principal": "*" can look as if it blocks your IAM user, for example:

```json
{
  "Effect": "Deny",
  "Principal": "*",
  "Action": "s3:*",
  "Resource": [
    "arn:aws:s3:::your-bucket-name",
    "arn:aws:s3:::your-bucket-name/*"
  ],
  "Condition": {
    "Bool": { "aws:SecureTransport": "false" }
  }
}
```

This particular statement is actually a valid HTTPS-enforcement policy: it only denies requests sent over plain HTTP. rclone uses HTTPS by default, so it shouldn't cause issues unless your remote's endpoint explicitly uses http://.
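When a bucket policy has many statements, a quick scan for Deny statements narrows the search. The Python sketch below (find_deny_statements is a hypothetical helper) takes a bucket policy already parsed into a dict, for example with json.load, and labels each Deny statement. It is a rough heuristic: it recognizes the aws:SecureTransport pattern above but does not evaluate principals, resources, or other conditions the way AWS does, so treat its notes as pointers rather than verdicts.

```python
def find_deny_statements(policy: dict) -> list[tuple[dict, str]]:
    """Return (statement, note) pairs for every Deny statement (rough heuristic only)."""
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a policy may hold a single statement object
        statements = [statements]
    results = []
    for stmt in statements:
        if stmt.get("Effect") != "Deny":
            continue
        condition = stmt.get("Condition", {})
        secure = condition.get("Bool", {}).get("aws:SecureTransport")
        if str(secure).lower() == "false":  # accepts "false" or a JSON false
            note = "HTTPS enforcement: only blocks plain-HTTP requests"
        elif condition:
            note = "conditional Deny: check whether the condition matches your requests"
        else:
            note = "unconditional Deny: blocks every matching principal and action"
        results.append((stmt, note))
    return results
```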

Fix 4: Object-Level ACL Issues

Some S3 configurations require uploaded objects to carry a specific ACL (typically bucket-owner-full-control in cross-account setups). If you're uploading to someone else's bucket and the bucket owner gets Access Denied when reading your uploads:

Add --s3-acl bucket-owner-full-control in RcloneView's Custom flags field for the job.
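The --s3-acl value must be a canned ACL name, and a typo there will not give the bucket owner the access you intended. This small Python sketch (acl_flags and CANNED_ACLS are hypothetical names) checks the value against common S3 canned ACL names, an assumed and not necessarily exhaustive list, before producing the flag pair to paste into the Custom flags field.

```python
# Common S3 canned ACL names for objects (assumed subset; not an exhaustive list).
CANNED_ACLS = {
    "private",
    "public-read",
    "public-read-write",
    "authenticated-read",
    "bucket-owner-read",
    "bucket-owner-full-control",
}


def acl_flags(acl: str = "bucket-owner-full-control") -> list[str]:
    """Return ["--s3-acl", acl] after checking that acl is a known canned ACL name."""
    if acl not in CANNED_ACLS:
        raise ValueError(f"not a known S3 canned ACL: {acl!r}")
    return ["--s3-acl", acl]


print(" ".join(acl_flags()))  # prints: --s3-acl bucket-owner-full-control
```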

Fix 5: Server-Side Encryption (SSE) Requirements

Some buckets require that objects be uploaded with a specific encryption key (SSE-KMS). Uploading without the key results in Access Denied.

Add the following to the job's Custom flags field in RcloneView:

--s3-server-side-encryption aws:kms --s3-sse-kms-key-id arn:aws:kms:us-east-1:123456789012:key/your-key-id
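To catch a malformed key ARN before a long job fails, the flags can be assembled with a basic format check. The Python sketch below (sse_kms_flags and KMS_KEY_ARN are hypothetical names) emits rclone's S3 backend options --s3-server-side-encryption and --s3-sse-kms-key-id. The regular expression only checks the general shape of a key ARN (partition, region, 12-digit account ID, key/ prefix); it deliberately rejects alias ARNs and cannot confirm that the key exists or that your IAM user is allowed to use it.

```python
import re

# Rough shape of a KMS key ARN: arn:<partition>:kms:<region>:<12-digit account>:key/<key id>
# (assumption: alias ARNs such as .../alias/name are not accepted here).
KMS_KEY_ARN = re.compile(r"arn:aws[a-z-]*:kms:[a-z0-9-]+:\d{12}:key/[A-Za-z0-9-]+")


def sse_kms_flags(key_arn: str) -> list[str]:
    """Return rclone SSE-KMS flags after a basic format check on the key ARN."""
    if not KMS_KEY_ARN.fullmatch(key_arn):
        raise ValueError(f"does not look like a KMS key ARN: {key_arn!r}")
    return ["--s3-server-side-encryption", "aws:kms", "--s3-sse-kms-key-id", key_arn]


print(" ".join(sse_kms_flags("arn:aws:kms:us-east-1:123456789012:key/your-key-id")))
```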

Fix 6: MFA Delete or Object Lock

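If Object Lock or MFA Delete is enabled on the bucket, some operations are restricted: Object Lock protects locked object versions from being deleted or overwritten during their retention period, and MFA Delete requires multi-factor authentication to permanently delete object versions. Jobs that never delete files (Copy rather than Sync) avoid these restrictions. For Sync jobs that need to delete orphaned files, you'll need either: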

- A user with elevated permissions and MFA, or

- A job mode that doesn't delete (Copy instead of Sync).

Fix 7: Region Mismatch

Connecting to an S3 bucket in us-west-2 via the us-east-1 endpoint sometimes returns Access Denied. Make sure your remote's endpoint or region matches the bucket's actual region.

In RcloneView, edit the remote and set the Region to the correct value (e.g., us-west-2).
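RcloneView's Edit dialog is the usual way to change the region. If you also manage an rclone configuration file directly, for example from scripts, a minimal Python sketch using configparser could look like this (set_remote_region is a hypothetical helper, and the remote name mys3 is a placeholder). It assumes an unencrypted config file; note that configparser does not preserve comments when it writes the text back. If the remote also sets an endpoint, check that it matches the new region too.

```python
import configparser
import io


def set_remote_region(config_text: str, remote: str, region: str) -> str:
    """Return rclone config text with `region` set for one remote (unencrypted config assumed)."""
    parser = configparser.ConfigParser(interpolation=None)  # secrets may contain '%'
    parser.optionxform = str  # keep option names exactly as written
    parser.read_string(config_text)
    if not parser.has_section(remote):
        raise KeyError(f"remote {remote!r} not found in config")
    parser[remote]["region"] = region
    out = io.StringIO()
    parser.write(out)  # comments from the original text are not preserved
    return out.getvalue()


example = "[mys3]\ntype = s3\nprovider = AWS\nregion = us-east-1\n"
print(set_remote_region(example, "mys3", "us-west-2"))
```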

Checklist Summary

Work through this checklist in order:

- ✅ Credentials (access key and secret key) are copied correctly with no typos

- ✅ IAM user/role has ListBucket, GetObject, PutObject permissions on the bucket

- ✅ No Deny statements in the bucket policy affect this user

- ✅ Block Public Access settings are not preventing intended operations

- ✅ Region/endpoint matches the bucket's actual region

- ✅ Encryption requirements (SSE-KMS) are met if the bucket requires them

- ✅ ACL requirements are met for cross-account uploads

Getting Started

- Download RcloneView from rcloneview.com.

- Check Job History for the exact error message.

- Match the error to the fix above.

- Update credentials or IAM policies and re-run the job.

S3 permission errors are almost always configuration issues, not bugs. Methodical diagnosis eliminates them quickly.
