Download Files from URLs Directly to Cloud Storage with RcloneView

Why download a file to your local disk just to upload it again? Rclone's copyurl command streams files from any URL straight to your cloud storage.

There are many situations where you need to get a file from the web into cloud storage: public datasets, software releases, exported archives, media files, or backup downloads from a SaaS service. The traditional approach -- download locally, then upload -- wastes time and disk space. Rclone's copyurl command skips the intermediate file by streaming the download directly to a cloud destination. RcloneView gives you access to this through its terminal and job interface.

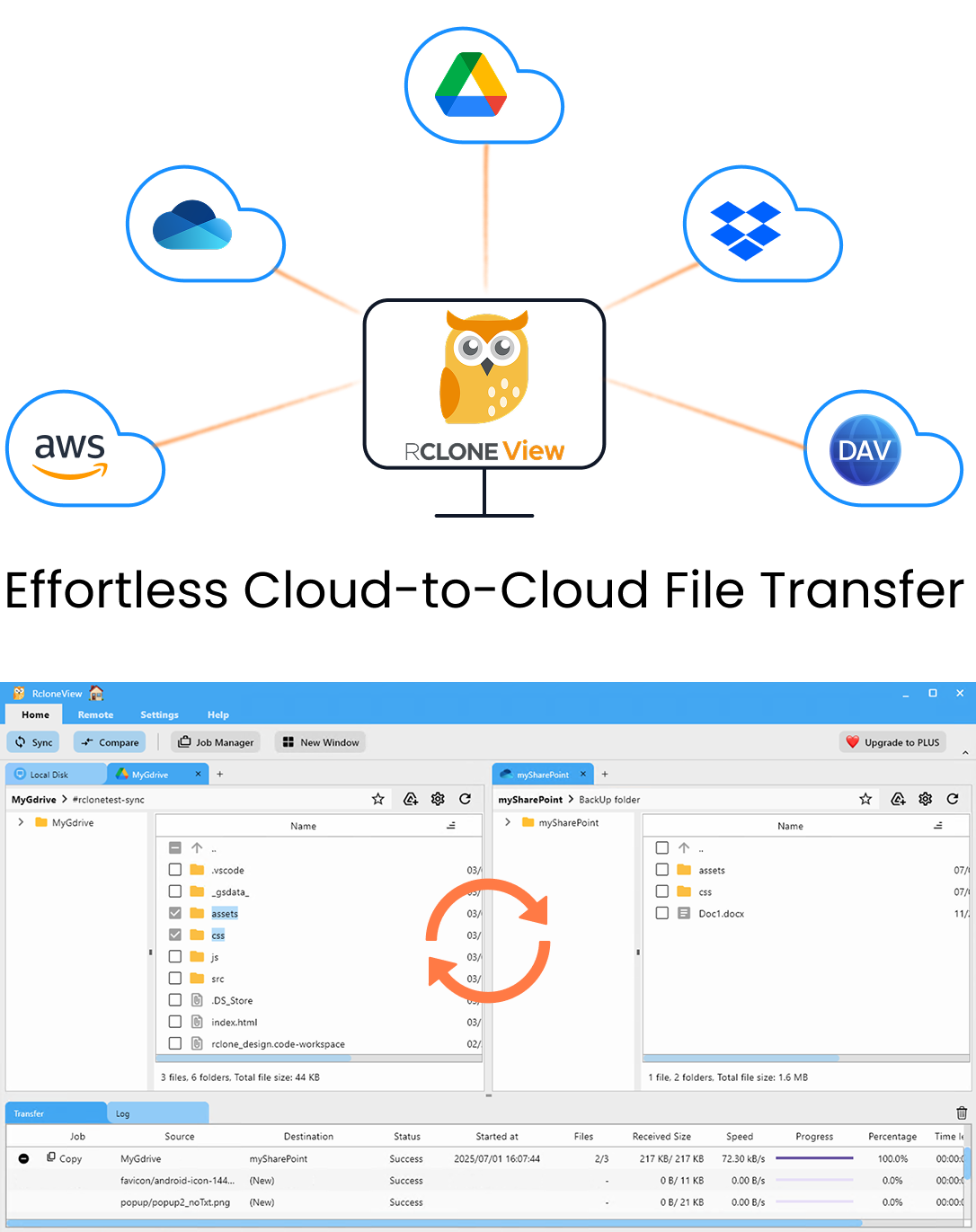

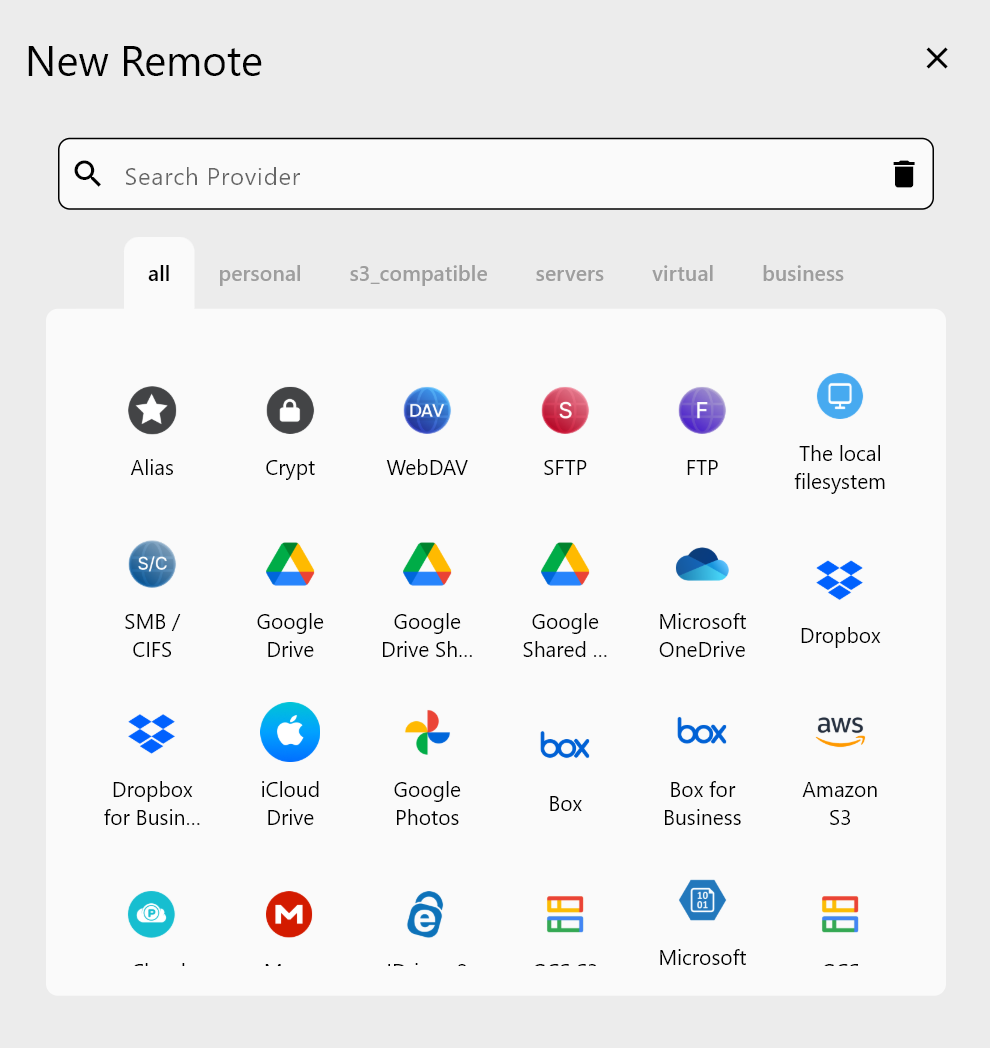


How Copyurl Works

The rclone copyurl command takes a source URL and a destination path on any configured remote:

rclone copyurl https://example.com/dataset.zip gdrive:/Downloads/dataset.zip

Rclone fetches the file from the URL and streams it directly to the destination. The file never touches your local disk. If you add the --auto-filename flag, rclone derives the filename from the URL and treats the destination as a folder rather than a full file path.

Key characteristics:

- No local disk required -- the data streams through memory to the cloud provider's API.

- Works with any HTTP/HTTPS URL -- public download links, CDN URLs, pre-signed S3 URLs, direct file links.

- Supports any rclone destination -- Google Drive, OneDrive, S3, Backblaze B2, SFTP, or any other configured remote.
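To make the streaming idea concrete, the sketch below pipes a download straight into rclone rcat, which uploads whatever arrives on standard input. It is only an analogy under stated assumptions: the function name stream_to_remote is hypothetical, curl is assumed to be installed, and this is not a description of how copyurl is implemented internally.

```shell
# Hypothetical helper: stream a URL to a remote path without a temp file.
# Illustrates the idea behind copyurl; copyurl itself does not need curl.
stream_to_remote() {
    # curl writes the response body to stdout; rclone rcat reads stdin
    # and uploads it to the given remote path.
    curl -fsSL "$1" | rclone rcat "$2"
}

# Usage (not run here):
#   stream_to_remote "https://example.com/dataset.zip" gdrive:/Downloads/dataset.zip
```

In a plain pipe like this, the exit status is the one from rclone rcat, so a failed download can go unnoticed. A single copyurl command avoids that pitfall and is the simpler choice in practice.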

Basic Usage in RcloneView

Open the Terminal panel in RcloneView and run:

rclone copyurl "https://example.com/file.tar.gz" remote:/path/file.tar.gz

If you want rclone to automatically derive the filename from the URL:

rclone copyurl "https://releases.example.com/v2.1/app-linux-x64.tar.gz" remote:/downloads/ --auto-filename

This saves the file as app-linux-x64.tar.gz in the /downloads/ folder on your remote.
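As a rough illustration of how a name can be derived from a URL, the sketch below drops any fragment or query string and keeps the last path segment. The function name url_basename is hypothetical, and this only approximates the behavior of --auto-filename; rclone's own logic may differ in edge cases such as redirects or URL-encoded characters.

```shell
# Hypothetical helper: approximate the filename --auto-filename would pick.
url_basename() (
    url=$1
    url=${url%%'#'*}   # drop a trailing #fragment, if any
    url=${url%%\?*}    # drop the ?query string, if any
    printf '%s\n' "${url##*/}"   # keep everything after the last slash
)

url_basename "https://releases.example.com/v2.1/app-linux-x64.tar.gz"
# prints: app-linux-x64.tar.gz
```

Checking the derived name this way before a transfer can be useful for URLs that carry tokens or other query parameters.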

Use Case 1: Public Datasets

Researchers and data engineers frequently need to move large public datasets into cloud storage for processing. Instead of downloading a 50 GB dataset to a laptop and then uploading it:

rclone copyurl "https://data.gov/datasets/census-2025.csv.gz" s3-remote:data-lake/census/census-2025.csv.gz

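The file streams through the machine running rclone into your S3 bucket without ever being written to disk. This is especially valuable when that machine has limited disk space. Because the data still passes through its network connection, very large transfers benefit from running rclone on a well-connected host.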

Use Case 2: Software Archives and Releases

DevOps teams often need to store specific software versions in cloud storage for deployment or compliance:

rclone copyurl "https://github.com/rclone/rclone/releases/download/v1.68.0/rclone-v1.68.0-linux-amd64.zip" b2-remote:software-archive/rclone/rclone-v1.68.0-linux-amd64.zip

Maintaining a versioned archive of dependencies and tools in your own storage ensures availability even if upstream sources go offline.

Use Case 3: SaaS Export Downloads

Many SaaS platforms generate export files (database dumps, analytics reports, audit logs) as downloadable URLs. These URLs are often temporary. Stream them to permanent cloud storage immediately:

rclone copyurl "https://app.example.com/exports/db-backup-2026-04.sql.gz?token=abc123" wasabi:backups/saas/db-backup-2026-04.sql.gz

Use Case 4: Media and Content Files

Content teams and media producers can pull assets directly from CDNs or content delivery URLs to their cloud archive:

rclone copyurl "https://cdn.example.com/videos/webinar-recording.mp4" gdrive:/Media/Webinars/webinar-recording.mp4

This avoids filling up a local drive with large media files that are only needed in cloud storage.

Useful Flags for Copyurl

| Flag | Purpose |
|---|---|
| --auto-filename | Derive the destination filename from the URL |
| --no-clobber | Skip the download if a file with the same name already exists at the destination |
| --no-check-certificate | Skip TLS certificate verification (use with caution) |
| -P / --progress | Show real-time transfer progress |
| --header "Authorization: Bearer TOKEN" | Add custom HTTP headers for authenticated downloads |

Example with progress and auto-filename:

rclone copyurl "https://releases.example.com/data.tar.gz" remote:/archive/ --auto-filename -P

Bulk URL Downloads

For downloading multiple files from different URLs, create a simple script or run multiple commands in sequence through RcloneView's terminal:

rclone copyurl "https://example.com/file1.zip" remote:/bulk/file1.zip

rclone copyurl "https://example.com/file2.zip" remote:/bulk/file2.zip

rclone copyurl "https://example.com/file3.zip" remote:/bulk/file3.zip

For larger batches, write the commands to a shell script and execute it from the terminal panel.
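One way such a script might look is sketched below. It assumes a plain text file with one URL per line; the function name bulk_copyurl and the file layout are hypothetical choices for illustration, not an rclone or RcloneView feature.

```shell
# Hypothetical helper: copy every URL listed in a text file (one per line)
# into a single destination folder on a remote.
bulk_copyurl() (
    list=$1   # path to the URL list
    dest=$2   # destination folder, e.g. remote:/bulk/
    failed=0
    while IFS= read -r url || [ -n "$url" ]; do
        # Skip blank lines and lines starting with '#'.
        case $url in
            '' | '#'*) continue ;;
        esac
        # </dev/null keeps rclone from consuming the rest of the list.
        rclone copyurl "$url" "$dest" --auto-filename --no-clobber </dev/null ||
            failed=$((failed + 1))
    done <"$list"
    echo "$failed download(s) failed" >&2
    [ "$failed" -eq 0 ]
)

# Usage (not run here):
#   bulk_copyurl urls.txt remote:/bulk/
```

The loop keeps going after a failed URL and reports the failure count at the end, so one dead link does not block the rest of the batch. Because filenames come from the URLs, two links ending in the same name would collide, and --no-clobber would skip the second one.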

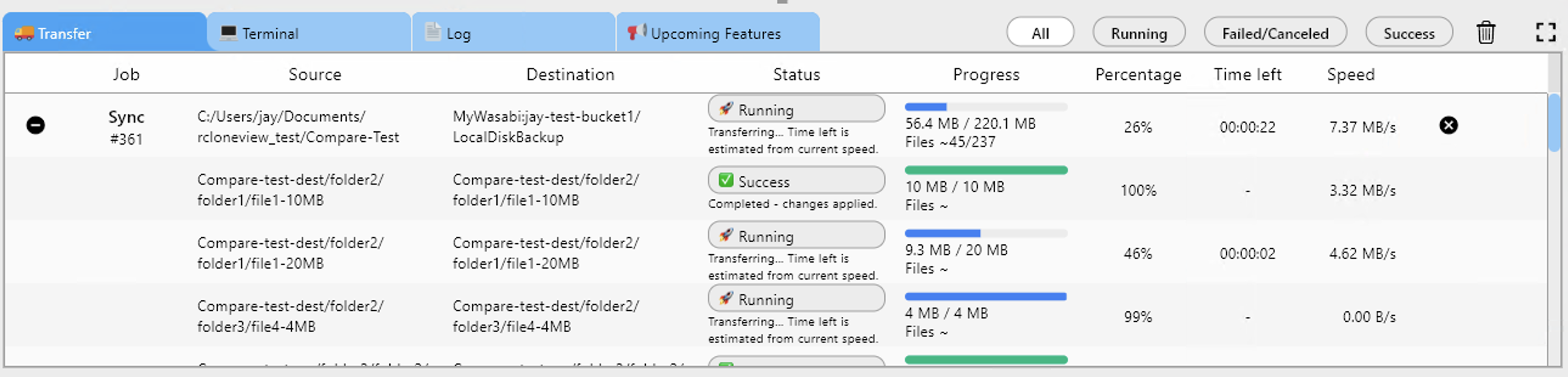

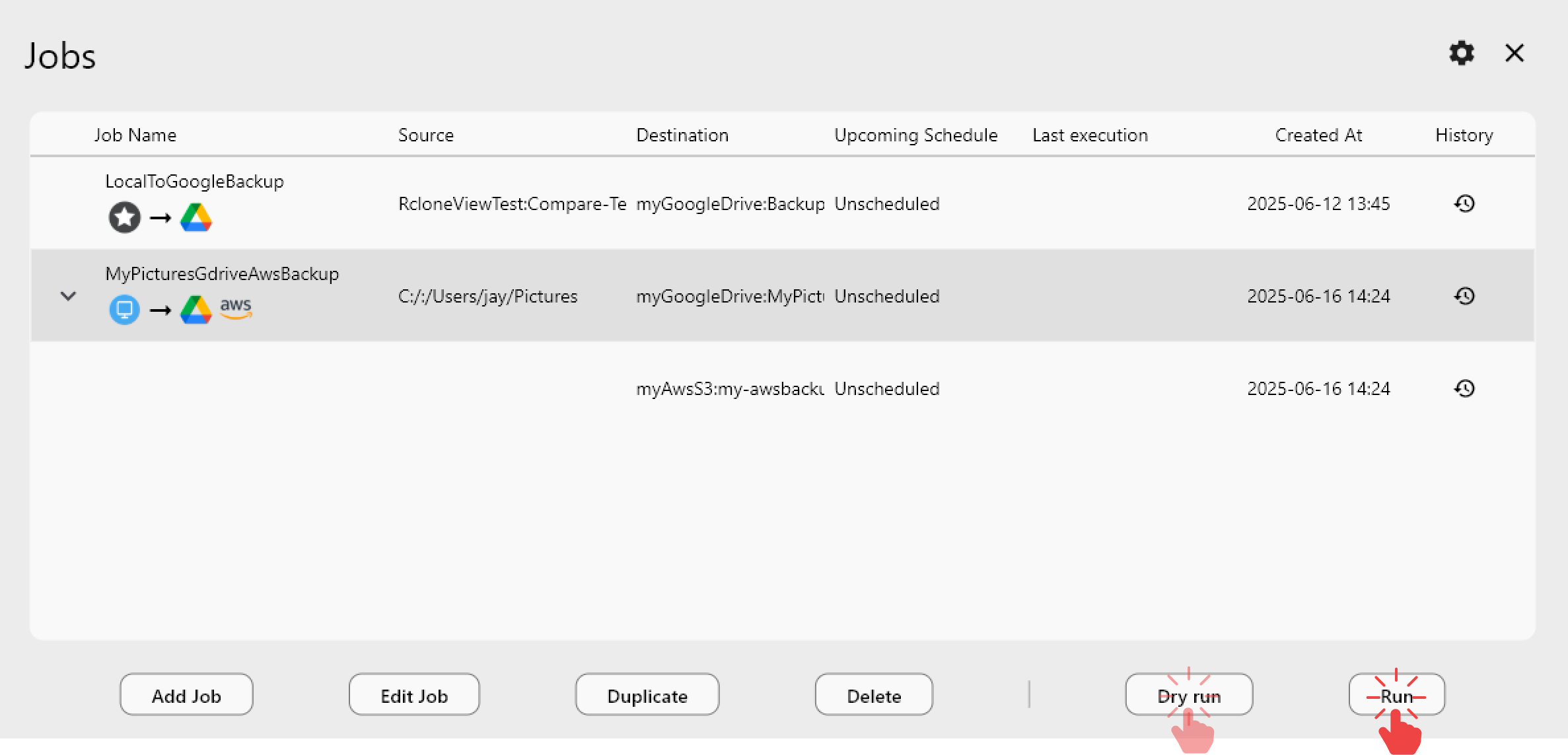

Creating Reusable Download Jobs

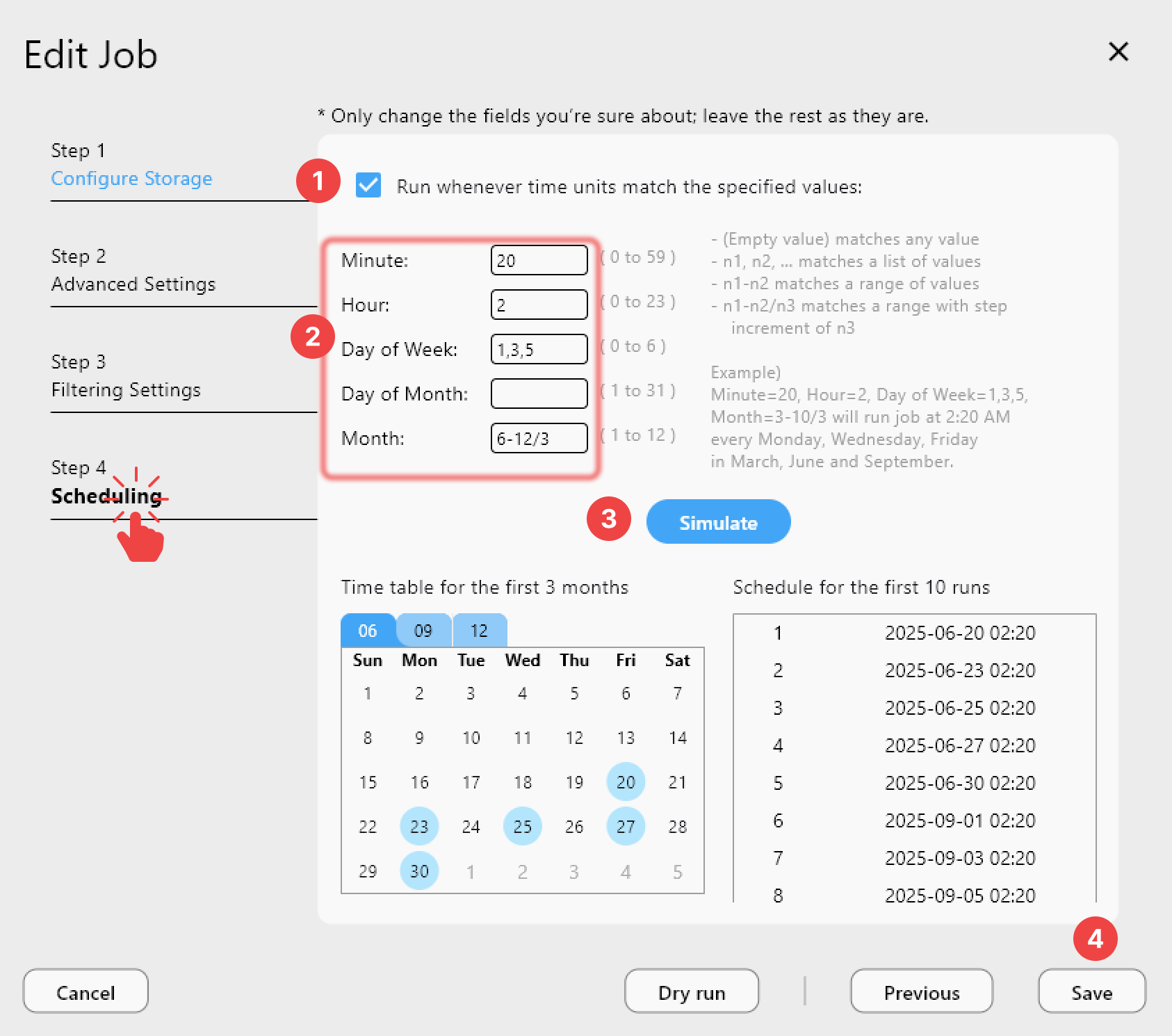

If you regularly download from the same source (e.g., nightly database exports), create a saved job in RcloneView:

- Open the Job Manager in RcloneView.

- Create a new job with the copyurl command.

- Add a schedule if the download needs to happen on a recurring basis.

- Review Job History to confirm each download completed successfully.

Limitations to Know

- Single file only -- copyurl downloads one URL at a time. It does not crawl pages or follow links.

- No resume -- if the download is interrupted, it starts over. For very large files over unreliable connections, consider downloading locally first.

- URL must be directly downloadable -- it must point to a file, not a web page. Dynamic download links (JavaScript-triggered) will not work.

- Authentication -- for URLs behind login walls, you need to supply credentials via headers or use pre-authenticated/pre-signed URLs.
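Since an interrupted transfer has to start over, a small retry wrapper can at least automate the restart. The sketch below is a generic shell helper, not an rclone feature; the name retry and its argument order are hypothetical, and every attempt re-downloads the file from the beginning.

```shell
# Hypothetical helper: run a command up to N times, pausing between attempts.
# Usage: retry <attempts> <delay-seconds> <command> [args...]
retry() (
    attempts=$1
    delay=$2
    shift 2
    n=1
    while :; do
        "$@" && exit 0                      # success: stop retrying
        [ "$n" -ge "$attempts" ] && exit 1  # out of attempts: give up
        echo "attempt $n failed; retrying in ${delay}s" >&2
        sleep "$delay"
        n=$((n + 1))
    done
)

# Usage (not run here):
#   retry 3 30 rclone copyurl "https://example.com/file.tar.gz" remote:/path/file.tar.gz
```

For short-lived URLs, keep the total retry time below the link's expiry, otherwise later attempts fail for a different reason than the first.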

Best Practices

- Verify file integrity after download using rclone check or rclone hashsum if the source provides checksums.

- Use --no-clobber to avoid re-downloading files that already exist at the destination.

- Monitor large transfers with the -P flag or through RcloneView's transfer monitoring.

- Use pre-signed URLs for authenticated sources rather than embedding credentials in commands.
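The integrity check can be scripted when the source publishes a SHA-256 checksum. The sketch below compares that value with the output of rclone hashsum; the function name verify_sha256 is hypothetical, and it assumes the destination remote can report SHA-256 hashes.

```shell
# Hypothetical helper: compare a published SHA-256 with the hash rclone reports.
# Usage: verify_sha256 <remote:path/to/file> <expected-hex-digest>
verify_sha256() (
    target=$1
    # Normalize the expected digest to lowercase hex.
    expected=$(printf '%s' "$2" | tr 'A-F' 'a-f')
    # rclone hashsum prints "<hash>  <name>"; keep the hash of the first line.
    actual=$(rclone hashsum sha256 "$target" | awk 'NR == 1 { print tolower($1) }')
    if [ -n "$actual" ] && [ "$actual" = "$expected" ]; then
        echo "OK: $target"
    else
        echo "MISMATCH: $target (expected $expected, got ${actual:-nothing})" >&2
        exit 1
    fi
)

# Usage (not run here):
#   verify_sha256 remote:/archive/data.tar.gz "<checksum published by the source>"
```

Not every backend stores SHA-256. In that case rclone hashsum offers a --download flag that hashes the file by reading it back, at the cost of a second full transfer.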
