Why Are My Cloud Uploads So Slow? Speed Optimization Tips with RcloneView

You start a cloud upload expecting 30 minutes. Two hours later, it's at 40%. Before you blame your internet, the problem might be your tool — not your connection.

Slow cloud transfers are frustrating, but they're rarely caused by a single issue. It's usually a combination of default settings that aren't optimized for your situation, provider-specific throttling, and inefficient transfer methods. RcloneView gives you the controls to fix all of these.

Manage & Sync All Clouds in One Place

RcloneView is a cross-platform GUI for rclone. Compare folders, transfer or sync files, and automate multi-cloud workflows with a clean, visual interface.

- One-click jobs: Copy · Sync · Compare

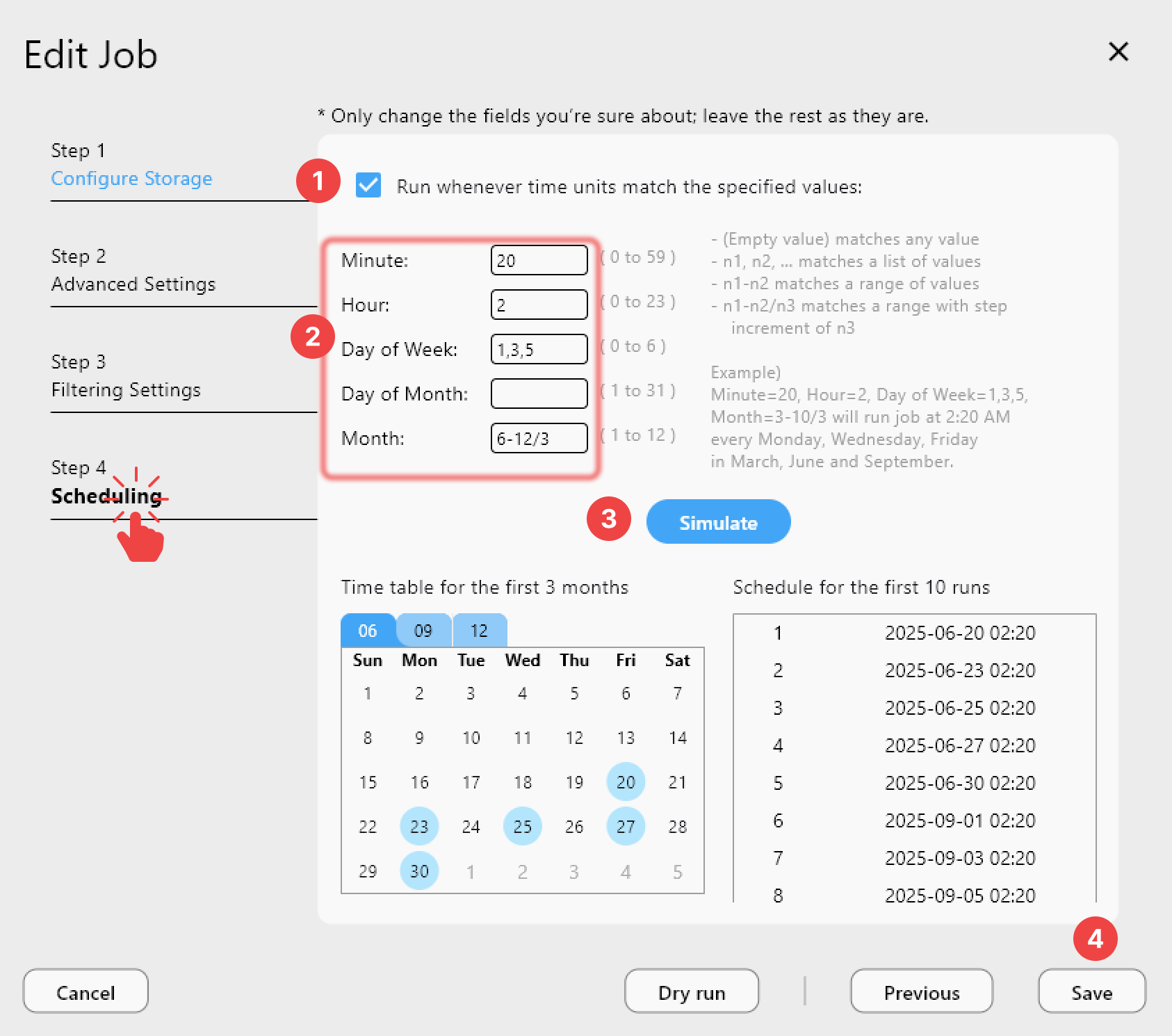

- Schedulers & history for reliable automation

- Works with Google Drive, OneDrive, Dropbox, S3, WebDAV, SFTP and more

Free core features. Plus automations available.

Why Cloud Transfers Are Slow

1) Single-threaded transfers

Most cloud sync tools upload one file at a time. If you have 10,000 small files, each file requires a separate HTTP connection — setup, transfer, verification. The overhead per file can exceed the actual transfer time.

Fix: Increase parallel transfers. Rclone defaults to 4, but many connections can handle 8–16 or more.

2) Small chunk sizes

Large files are uploaded in chunks. If the chunk size is too small, each chunk requires its own HTTP request, adding overhead. If it's too large, a failed chunk means re-uploading more data.

Fix: For stable connections, increase chunk size. For Google Drive, try 64M or 128M. For S3, try 16M–64M.

3) Provider rate limits

Cloud providers throttle uploads to prevent abuse:

- Google Drive: ~750 GB/day upload limit.

- OneDrive: Throttling after sustained high throughput.

- Dropbox: Progressive throttling under heavy load.

- S3: 3,500 PUT requests/second per prefix.

Fix: Respect rate limits by adjusting transfer speeds. Use bandwidth limiting to stay under thresholds.

4) No server-side copy

When transferring between two clouds (e.g., S3 to S3), some tools download to your machine then re-upload. Rclone supports server-side copy for compatible providers — data moves directly between clouds without touching your machine.

Fix: RcloneView uses server-side copy automatically when available.

5) Checking every file

Before transferring, rclone checks if each file already exists at the destination. With large file counts, this checking phase can take longer than the actual transfer.

Fix: Use --no-check-dest for initial bulk transfers. Use normal checking for incremental syncs.

Speed Optimization Settings

Parallel transfers

Increase the number of simultaneous file transfers:

| Scenario | Recommended Setting |

|---|---|

| Default | 4 transfers |

| Fast internet (100+ Mbps) | 8–16 transfers |

| Many small files | 16–32 transfers |

| Large files only | 4–8 transfers |

More parallel transfers help with many small files. For a few large files, chunk size matters more.

Chunk size by provider

| Provider | Default Chunk | Recommended |

|---|---|---|

| Google Drive | 8 MB | 64–128 MB |

| OneDrive | 10 MB | 64 MB |

| S3 | 5 MB | 16–64 MB |

| Dropbox | 48 MB | 48–150 MB |

Larger chunks mean fewer HTTP requests and less overhead.

Buffer size

Increase the in-memory buffer for faster reading:

- Default: 16 MB

- Recommended: 64–256 MB (if you have the RAM)

This helps when reading from slow sources (NAS, spinning disks).

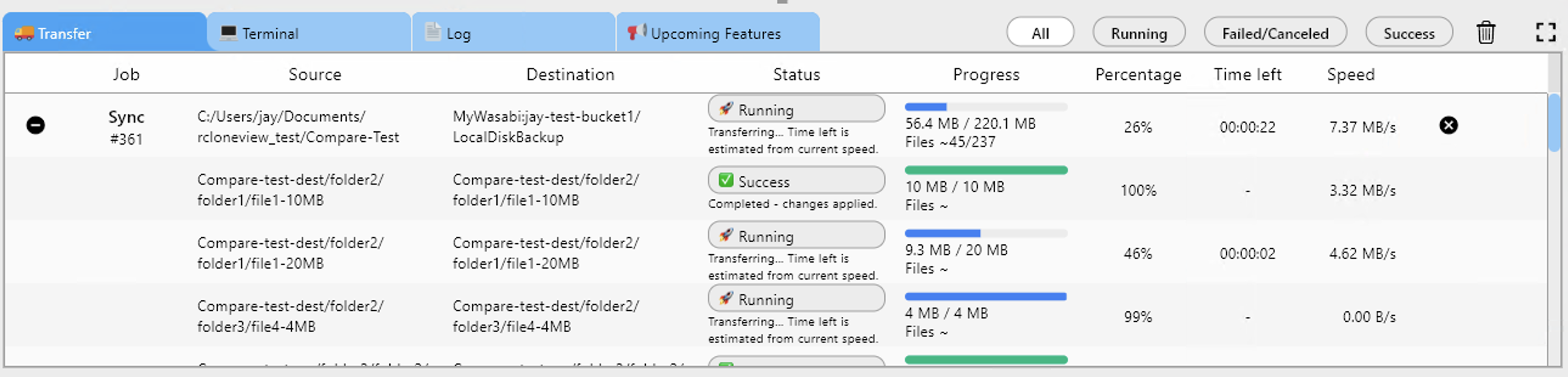

Monitor and Measure

Track transfer speed in real time to see the effect of your changes:

What to watch

- Transfer speed — Should match a reasonable percentage of your internet upload speed.

- Active transfers — Should match your parallel transfer setting.

- Errors — Rate limit errors (429, 403) mean you need to slow down.

- Checks vs transfers — If checking takes too long, consider skipping for initial uploads.

Provider-Specific Tips

Google Drive

- Set chunk size to 64M or higher.

- Keep parallel transfers at 4–8 (Google throttles aggressively above this).

- If you hit the 750 GB daily limit, schedule transfers across multiple days.

OneDrive / SharePoint

- Use 4–8 parallel transfers.

- Chunk size 64 MB works well.

- OneDrive throttles based on total volume — spread large transfers over time.

AWS S3

- S3 handles high parallelism well — try 16–32 transfers.

- Use multipart upload for files over 100 MB.

- Choose an S3 region close to your location for lower latency.

Backblaze B2

- Supports high parallelism — 16+ transfers work well.

- Chunk size doesn't apply (B2 uses its own large file API).

- No daily transfer limits.

Batch Jobs for Optimized Workflows

With v1.3 Batch Jobs, chain an optimized transfer sequence:

- Bulk transfer with aggressive settings.

- Verification comparison.

- Notification when complete.

Quick Checklist

- Increase parallel transfers — especially for many small files.

- Increase chunk size — especially for large files.

- Check your internet speed —

speedtest-clito baseline your connection. - Monitor rate limits — watch for 429/403 errors in transfer logs.

- Use server-side copy — when transferring between compatible clouds.

- Schedule large transfers — off-hours to avoid impacting your network.

Getting Started

- Download RcloneView from rcloneview.com.

- Tune your transfer settings in the job configuration.

- Monitor speed in real time.

- Adjust and iterate — small changes can dramatically improve throughput.

The default settings work for most situations. But when you're moving terabytes, optimization pays off quickly.

Related Guides: