Verify Cloud File Integrity with RcloneView's Check and Compare Features

Copying files to the cloud is only half the job. Verifying that every byte arrived intact is what separates a reliable workflow from a hopeful one.

Moving terabytes across providers, running nightly backups, or archiving important datasets all share a common risk: silent corruption. A file can appear present in the destination yet differ from the source due to interrupted transfers, provider-side bugs, or plain bit rot over time. Rclone provides a dedicated check command that compares source and destination file by file, and RcloneView makes that process visual and accessible. This guide explains when and how to verify your cloud files.

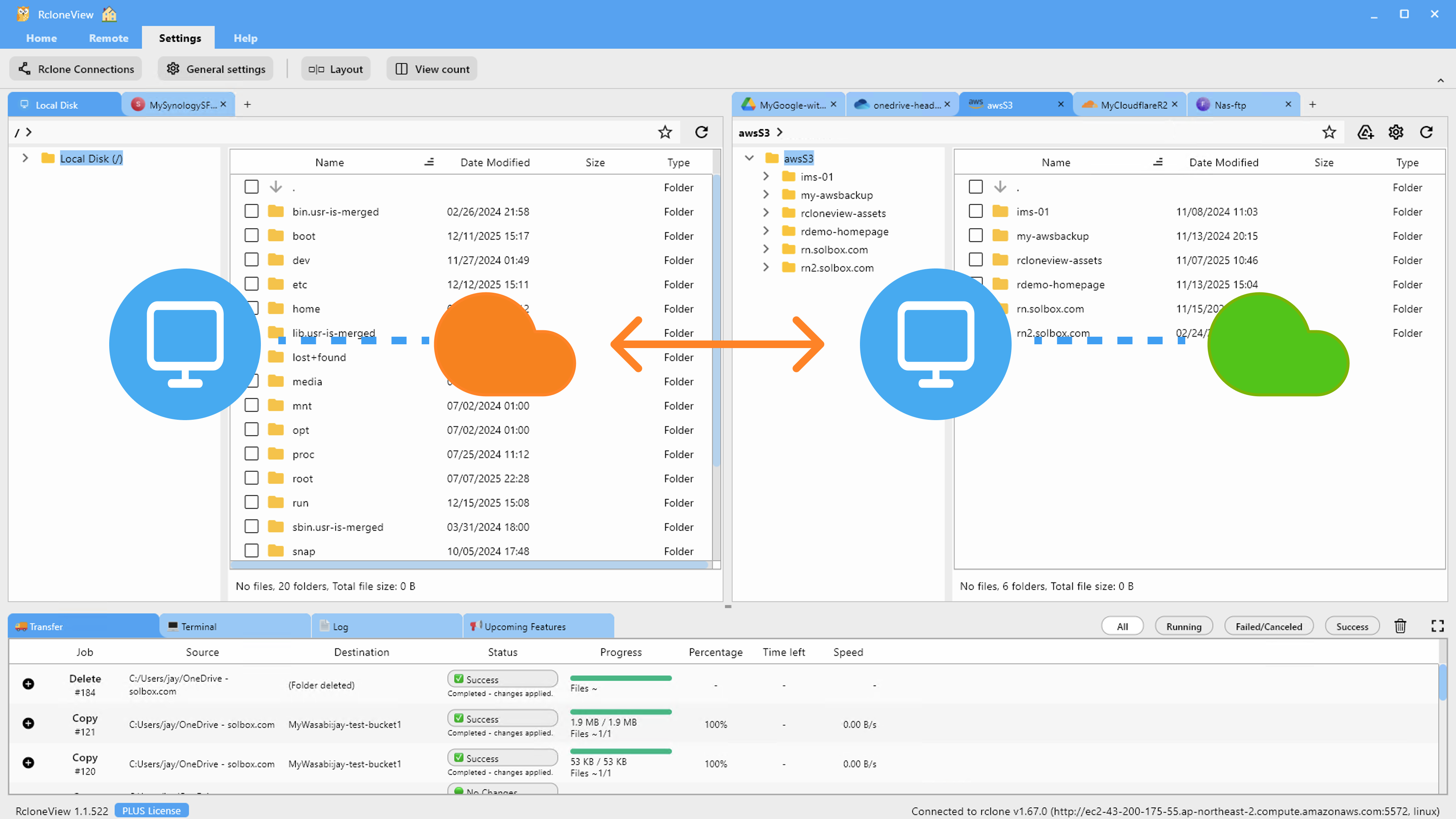

Why File Integrity Verification Matters

Cloud providers replicate data internally, but no system is immune to errors. Here are the most common scenarios where verification catches real problems:

- Interrupted transfers -- a network drop during a large copy can leave partial files on the destination that look complete by name alone.

- Bit rot -- storage media can degrade over months or years, flipping bits in rarely accessed files.

- Provider bugs -- occasional API errors can result in zero-byte uploads or truncated writes that pass without raising an error.

- Migration validation -- after moving hundreds of thousands of files between providers, you need proof that nothing was lost or altered.

Without a verification step, these issues go undetected until you actually need the file.

How Rclone Check Works

The rclone check command compares a source and destination path and reports files that differ. Depending on the providers involved, it uses one of these methods:

| Method | How It Works | When Used |
|---|---|---|
| Hash check | Compares checksums (MD5, SHA1, etc.) reported by both providers | Both providers support a common hash |
| Size check | Compares file sizes only | No common hash available |
| Download check | Downloads both files and compares byte by byte | Forced with --download flag |

Hash-based checking is the fastest reliable method when both providers support a common hash type, because no file data has to be downloaded. Google Drive, OneDrive, S3-compatible providers, and Backblaze B2 all report file hashes, though not always the same type.
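To make the hash check concrete, here is a minimal sketch of the comparison it performs. It assumes you have saved two listings in the md5sum-style `hash  path` format that `rclone hashsum MD5 remote:path` prints. The function name `compare_hash_lists` and the listing files are hypothetical, and this illustrates the idea only; it is not how rclone itself is implemented.

```shell
# compare_hash_lists SRC_LIST DST_LIST
# Each list holds lines of the form "<hash>  <path>" (md5sum-style).
# Prints "differ <path>" when the two hashes disagree and
# "missing <path>" when a source path has no entry in the destination list.
compare_hash_lists() {
  awk '
    {
      hash = $1
      path = $0
      sub(/^[^ ]+  /, "", path)   # drop the hash column, keep the full path
    }
    # The destination list is read first and stored in a lookup table.
    FILENAME == ARGV[1] { dst[path] = hash; next }
    # Every remaining line belongs to the source list.
    !(path in dst)      { print "missing " path; next }
    dst[path] != hash   { print "differ " path }
  ' "$2" "$1"
}
```

With two saved listings, `compare_hash_lists src.md5 dst.md5` prints one line per problem file and nothing at all when every source hash matches.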

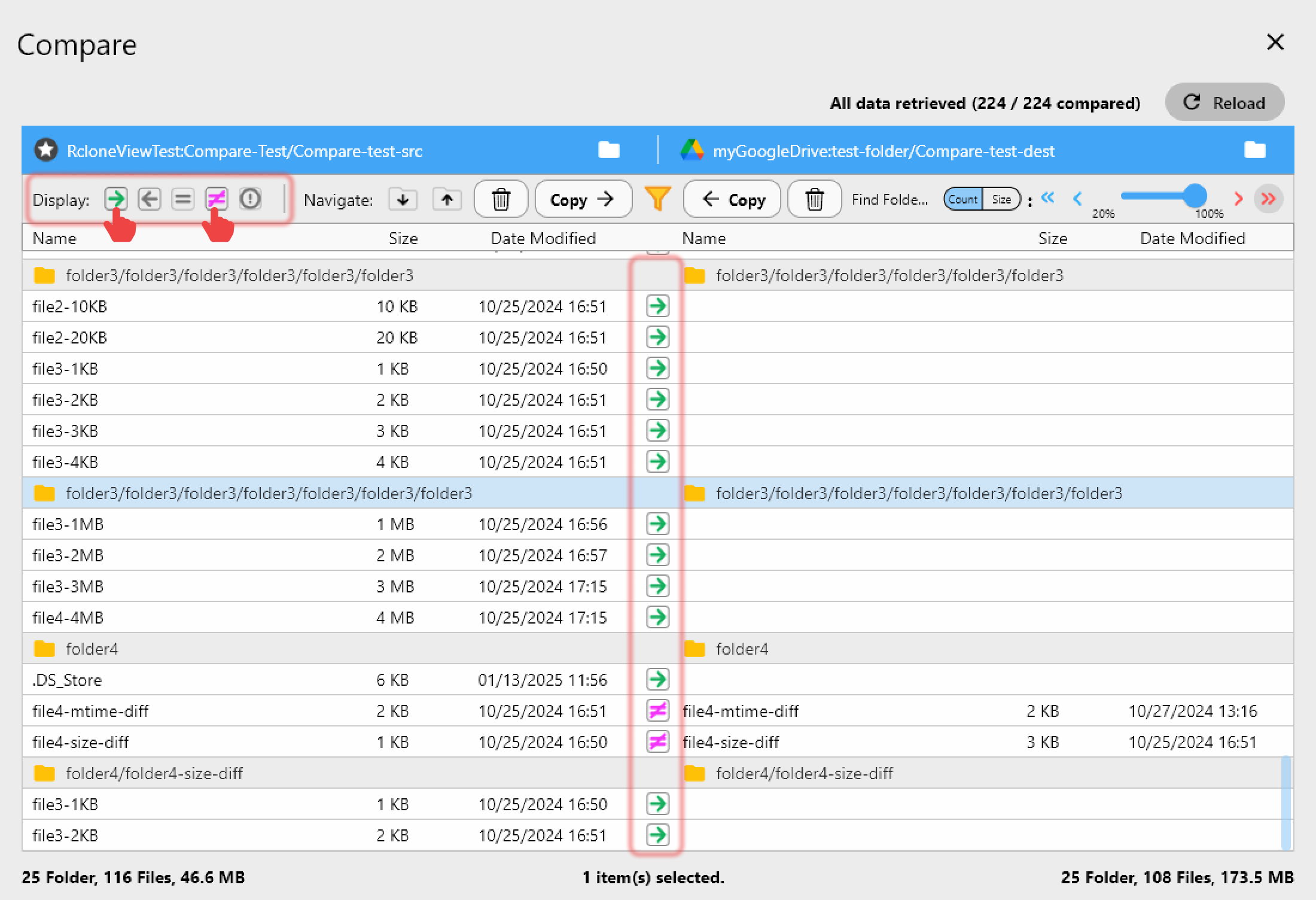

Using Compare in RcloneView

RcloneView's built-in Compare feature gives you a visual side-by-side view of source and destination folders:

- Open the Explorer pane and select your source remote on one side and destination on the other.

- Navigate to the folders you want to compare.

- Click Compare to run the analysis.

- Review the results -- files are color-coded by status: matching, source-only, destination-only, or different.

This visual approach is ideal for spot-checking specific folders or reviewing post-migration results without memorizing command-line output.

Running Rclone Check via the Terminal

For a full integrity scan across an entire remote, open the Terminal in RcloneView and run:

rclone check source:path dest:path

Useful flags to know:

| Flag | Purpose |
|---|---|
| --size-only | Compare by size only, skip hash check |
| --download | Force byte-by-byte comparison by downloading both copies |
| --one-way | Only check that source files exist in destination (not vice versa) |
| --combined report.txt | Write a combined report of matches and mismatches to a file |
| --missing-on-src missing-on-src.txt | Log files present in destination but missing from source |
| --missing-on-dst missing-on-dst.txt | Log files present in source but missing from destination |
| --error errors.txt | Log files that failed the check |

Example for a thorough post-migration check:

rclone check gdrive:/Archive s3-backup:archive-bucket --combined /tmp/check-report.txt
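A combined report can run to many thousands of lines, so a quick tally helps. In the report each line starts with a status symbol followed by a space and the path: `=` identical, `*` different, `+` missing on the destination, `-` missing on the source, and `!` an error reading or hashing the file. The sketch below counts lines per symbol; the function name `summarize_check_report` is hypothetical.

```shell
# summarize_check_report REPORT
# Counts the lines of a --combined report by their leading status symbol.
summarize_check_report() {
  awk '
    { count[substr($0, 1, 1)]++ }
    END {
      printf "identical=%d\n",      count["="]
      printf "differ=%d\n",         count["*"]
      printf "missing_on_dst=%d\n", count["+"]
      printf "missing_on_src=%d\n", count["-"]
      printf "errors=%d\n",         count["!"]
    }
  ' "$1"
}
```

Running `summarize_check_report /tmp/check-report.txt` after the command above gives a five-line summary; anything other than zero in the last four lines deserves a closer look.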

Post-Migration Verification Workflow

After migrating data between providers, follow this workflow to confirm success:

- Run a one-way check from source to destination to confirm all source files arrived:

rclone check source:path dest:path --one-way

- Review mismatches -- any reported differences indicate files that need re-copying.

- Re-transfer failed files using RcloneView's copy or sync with the --ignore-existing flag removed, so that files which exist but differ are overwritten.

- Re-run the check to confirm all differences are resolved.

- Save the report for audit purposes using the --combined flag.
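The workflow above can also be scripted. The following is a sketch under stated assumptions, not a definitive implementation: the function names, the working directory, and the file names are all hypothetical. It relies on the rclone check flags --missing-on-dst and --differ to collect problem paths, and on rclone copy with --files-from and --checksum to re-send only those paths. Where the two providers share no hash, --checksum can only compare sizes.

```shell
# build_retry_list MISSING_FILE DIFFER_FILE
# Merges the two path lists written by rclone check into one sorted,
# de-duplicated list on stdout; blank lines are dropped.
build_retry_list() {
  cat "$1" "$2" | grep -v '^$' | sort -u
}

# verify_and_retry SRC DST WORKDIR   (hypothetical wrapper; calls rclone)
verify_and_retry() {
  src=$1 dst=$2 work=$3
  # Step 1: one-way check. rclone check exits non-zero when it finds
  # differences, so a zero exit status means there is nothing to fix.
  rclone check "$src" "$dst" --one-way \
    --missing-on-dst "$work/missing.txt" \
    --differ "$work/differ.txt" && return 0
  # Step 2: re-copy only the affected paths, comparing by checksum.
  build_retry_list "$work/missing.txt" "$work/differ.txt" > "$work/retry.txt"
  rclone copy "$src" "$dst" --files-from "$work/retry.txt" --checksum
  # Step 3: re-run the check and keep a combined report for the audit trail.
  rclone check "$src" "$dst" --one-way --combined "$work/final-report.txt"
}
```

A call such as `verify_and_retry source:path dest:path /tmp/verify` returns success only if the final check is clean, which makes it easy to chain into a larger migration script.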

Detecting Bit Rot Over Time

For long-term archives, schedule periodic integrity checks:

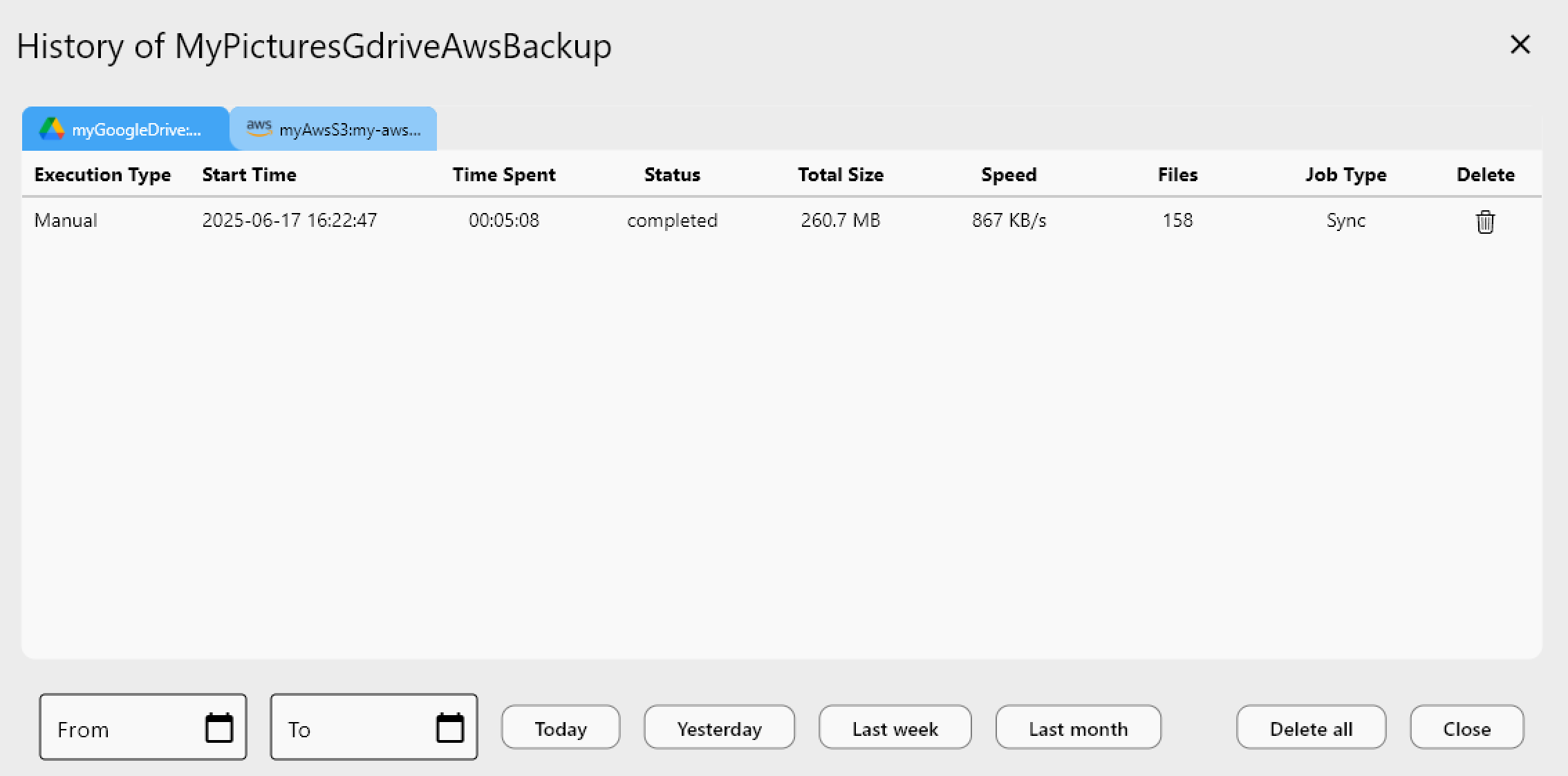

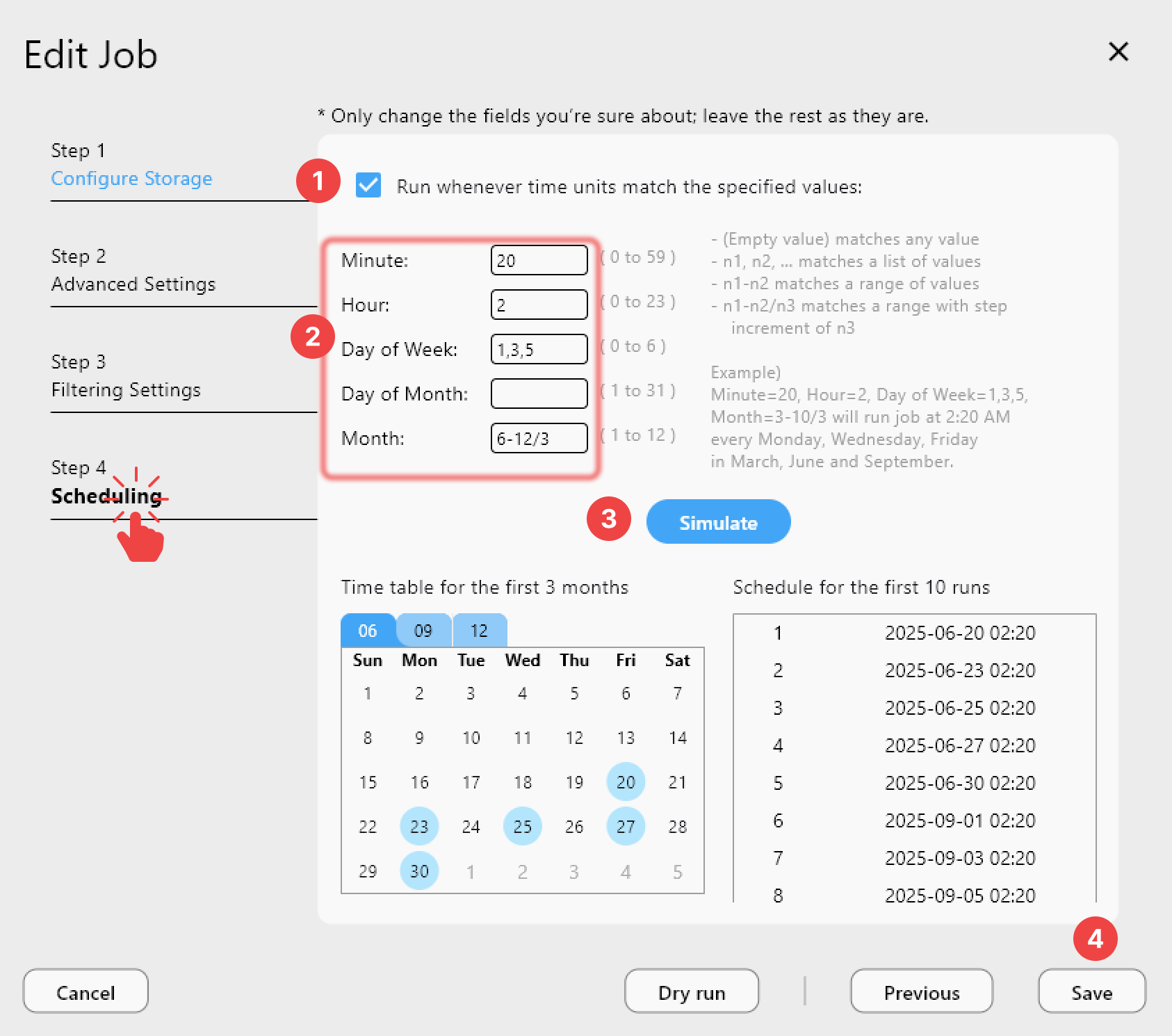

- Create a Job in RcloneView that runs rclone check against your archive.

- Schedule it weekly or monthly using the Job Scheduler.

- Review the job history after each run to catch any newly corrupted files.

This is especially important for cold storage tiers (S3 Glacier, Backblaze B2 archives) where files are written once and read rarely.
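If each scheduled run also writes a --combined report, comparing the latest report with the previous one isolates files that used to match and no longer do, which is the signature of bit rot rather than an old, known mismatch. The sketch below assumes two such report files exist; the function name `newly_damaged` and the report file names are hypothetical.

```shell
# newly_damaged PREVIOUS_REPORT CURRENT_REPORT
# Prints paths that were identical ("=") in the previous --combined report
# but are flagged as different ("*") or unreadable ("!") in the current one.
newly_damaged() {
  awk '
    { status = substr($0, 1, 1); path = substr($0, 3) }
    # First file: remember every path that verified cleanly last time.
    FILENAME == ARGV[1] { if (status == "=") was_ok[path] = 1; next }
    # Second file: report paths that were fine before and are not now.
    (status == "*" || status == "!") && (path in was_ok) { print path }
  ' "$1" "$2"
}
```

For example, `newly_damaged report-last-month.txt report-this-month.txt` prints nothing when the archive is stable and one path per line when a previously good file has changed.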

Checksum Compatibility Between Providers

Not every provider supports the same hash algorithm. Here is a quick reference:

| Provider | MD5 | SHA1 | Other |
|---|---|---|---|
| Google Drive | Yes | Yes (recent rclone versions) | SHA256 (recent rclone versions) |
| OneDrive | No | No | QuickXorHash |
| Amazon S3 | Yes (ETag for single-part) | No | -- |
| Backblaze B2 | No | Yes | SHA1 native |
| Dropbox | No | No | Dropbox content hash |
| SFTP/Local | Yes | Yes | Multiple |

When two providers share no common hash, rclone falls back to size-only comparison. Use --download for byte-level certainty in those cases.
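The fallback rule can be modelled in a few lines. This is a rough illustration of the decision described above, not rclone's actual code: the function name `pick_check_method` is hypothetical, and the hash names are plain labels that you would fill in from the table for your own pair of providers.

```shell
# pick_check_method "SRC_HASHES" "DST_HASHES"
# Each argument is a space-separated list of hash names, e.g. "md5 sha1".
# Prints the first hash both sides support, or "size-only" if none match.
pick_check_method() {
  for h in $1; do
    case " $2 " in
      *" $h "*) echo "$h"; return 0 ;;
    esac
  done
  echo "size-only"
}
```

Calling `pick_check_method "md5" "md5"` for a Google Drive to S3 pair prints md5, while `pick_check_method "sha1" "quickxor"` for Backblaze B2 to OneDrive prints size-only, which is the case where --download is worth its extra bandwidth.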

Best Practices

- Always verify after large migrations -- a successful copy command does not guarantee every file is intact.

- Use --combined reports to create an auditable record of what matched and what did not.

- Schedule periodic checks for archival data that sits untouched for months.

- Prefer hash checks over size-only when possible -- same-size corruption is rare but real.

- Run a sync with --dry-run after a check to preview which files would be re-copied, then run the real sync to fix the mismatches. A dry run on its own changes nothing.
