Cloud Storage for Pharmaceutical and Life Sciences Teams with RcloneView

Pharma and biotech teams handle some of the most sensitive and voluminous data in any industry. Managing clinical trial records, genomics datasets, and lab results across multiple cloud providers requires tools that meet strict regulatory standards while handling massive file transfers efficiently.

Pharmaceutical companies, biotech startups, and life sciences research labs generate enormous amounts of data — from high-throughput sequencing runs producing terabytes of FASTQ files to clinical trial case report forms that must be retained for decades. This data often spans multiple cloud providers: AWS S3 for compute-intensive genomics pipelines, Google Cloud for AI/ML workloads, Azure for enterprise applications, and provider-specific solutions for archival storage. Managing it all while maintaining compliance with FDA regulations, GxP guidelines, and data integrity principles is a significant challenge. RcloneView provides a unified interface for transferring, syncing, and organizing this data across any combination of cloud and local storage.

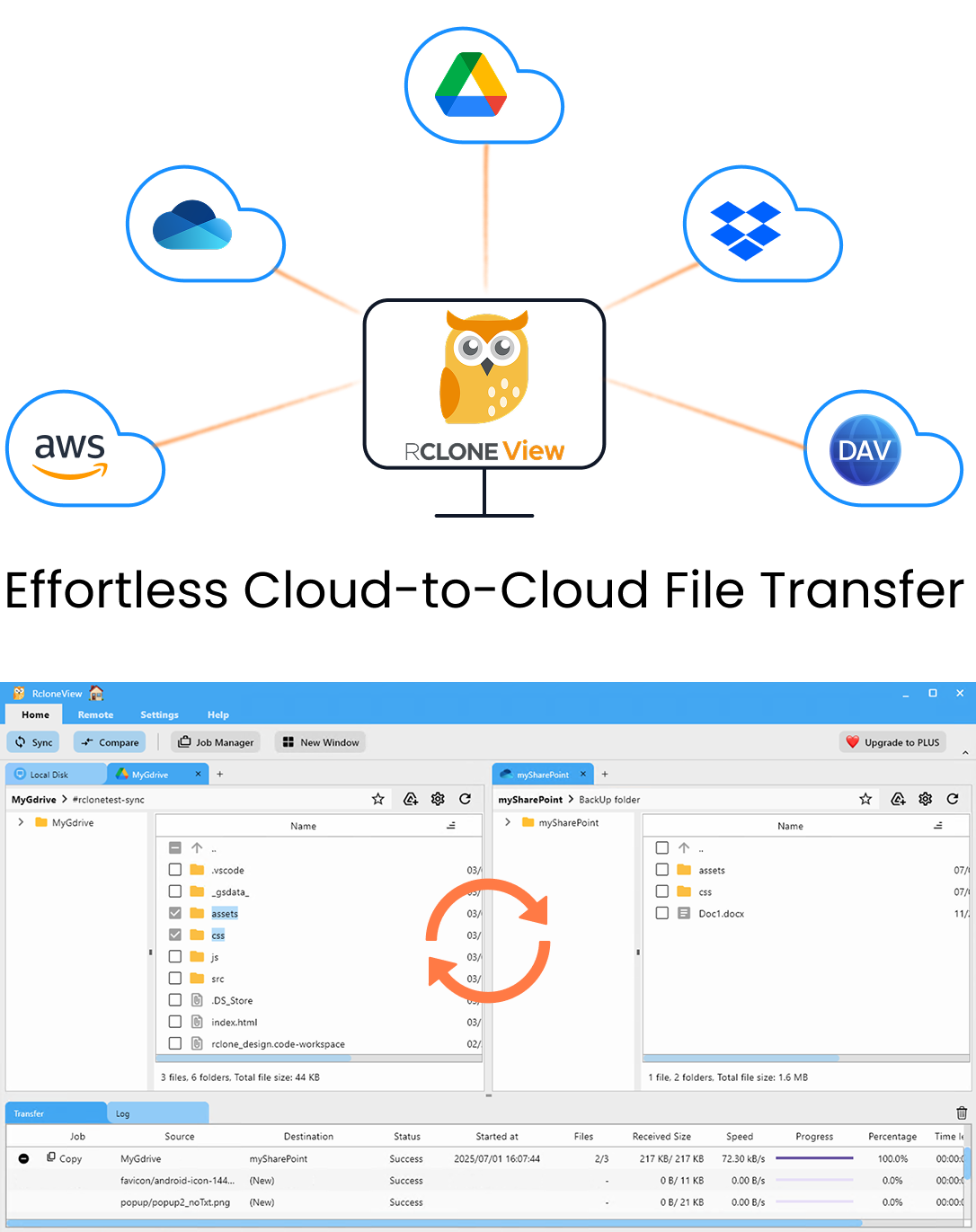

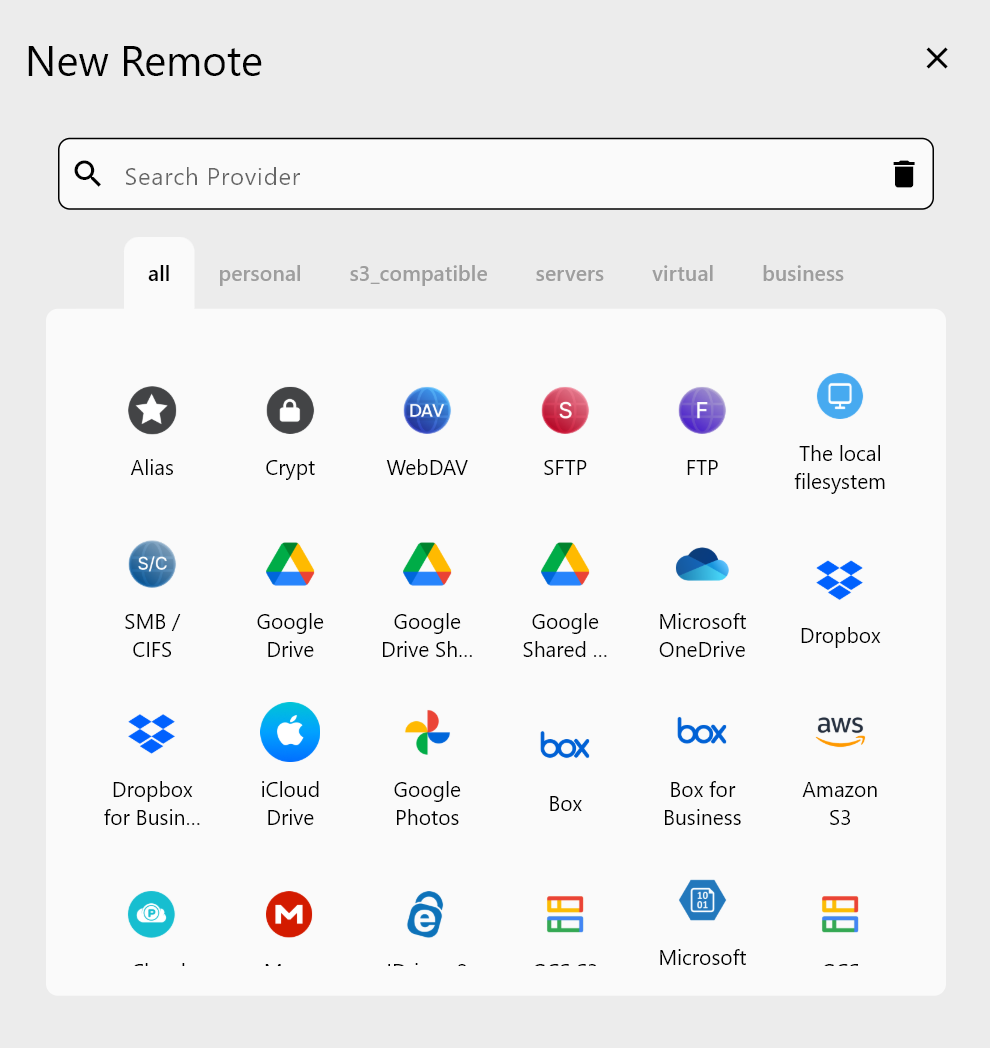

Manage & Sync All Clouds in One Place

RcloneView is a cross-platform GUI for rclone. Compare folders, transfer or sync files, and automate multi-cloud workflows with a clean, visual interface.

- One-click jobs: Copy · Sync · Compare

- Schedulers & history for reliable automation

- Works with Google Drive, OneDrive, Dropbox, S3, WebDAV, SFTP and more

Free core features. Plus automations available.

Regulatory Landscape: FDA 21 CFR Part 11 and GxP

Any cloud storage system used in a regulated pharmaceutical environment must be evaluated against FDA 21 CFR Part 11, which governs electronic records and electronic signatures. The regulation requires:

- Audit trails — systems must record who created, modified, or deleted a record, and when. Changes must not obscure previously recorded information.

- Access controls — only authorized individuals should be able to access, create, modify, or delete records. Systems must use unique user IDs and passwords.

- Data integrity — records must be accurate, complete, and reliable throughout their lifecycle. The ALCOA+ principles (Attributable, Legible, Contemporaneous, Original, Accurate, plus Complete, Consistent, Enduring, Available) apply.

- Validation — systems must be validated to ensure they perform as intended. This includes installation qualification (IQ), operational qualification (OQ), and performance qualification (PQ).

- Electronic signatures — when electronic signatures are used, they must be linked to their respective records and include the signer's name, date/time, and the meaning of the signature.

GxP (Good Practice) guidelines — including GLP (Good Laboratory Practice), GMP (Good Manufacturing Practice), and GCP (Good Clinical Practice) — add further requirements around documentation, traceability, and quality management.

RcloneView itself is a file management tool, not a validated electronic records system. However, it plays a critical role in the data management layer by ensuring files are transferred accurately, verified with checksums, and organized consistently across storage locations. When used as part of a validated workflow, RcloneView helps teams maintain data integrity during transfers and migrations.

Data Integrity During Transfers

Data integrity is the cornerstone of pharmaceutical data management. A single corrupted file in a clinical trial dataset can invalidate results and trigger regulatory action. RcloneView supports several mechanisms to ensure files arrive at their destination exactly as they left the source.

Checksum Verification

Rclone computes and compares checksums (MD5, SHA-1, or provider-specific hashes) during and after transfers. Running a sync with the --checksum flag ensures that every file is verified byte-for-byte:

rclone sync source: dest: --checksum

In RcloneView, enable checksum verification in the sync job configuration. After the transfer completes, the job log shows the verification status for each file.

Transfer Logging

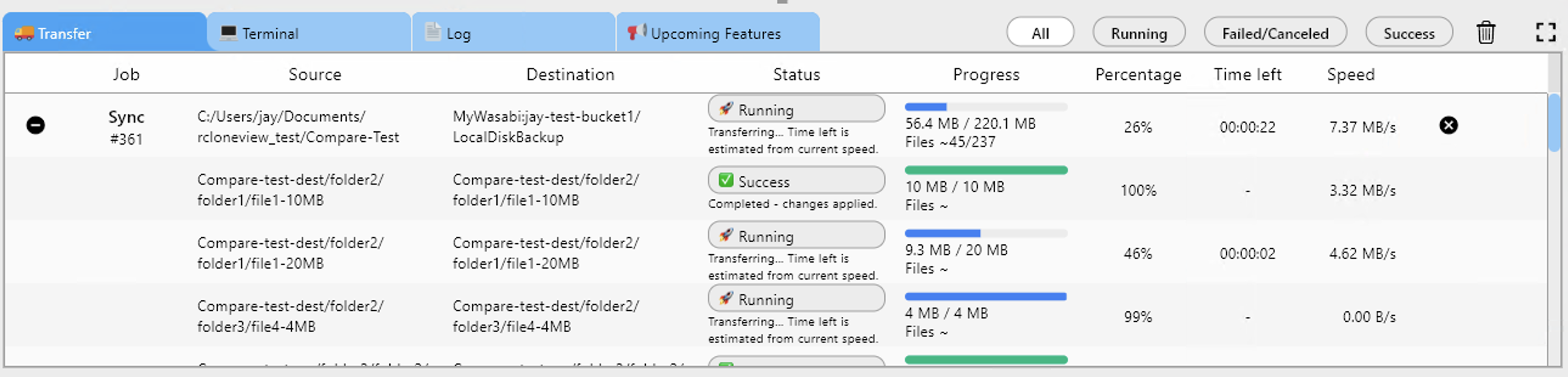

Every transfer operation in RcloneView is logged with timestamps, file paths, sizes, and transfer status. These logs serve as part of the audit trail for data movements. Export logs from the Job History view for inclusion in your quality documentation.

Dry-Run Validation

Before executing a production transfer, use the dry-run feature to preview exactly which files will be copied, updated, or deleted. This serves as a pre-execution check that can be reviewed and approved before any data moves.

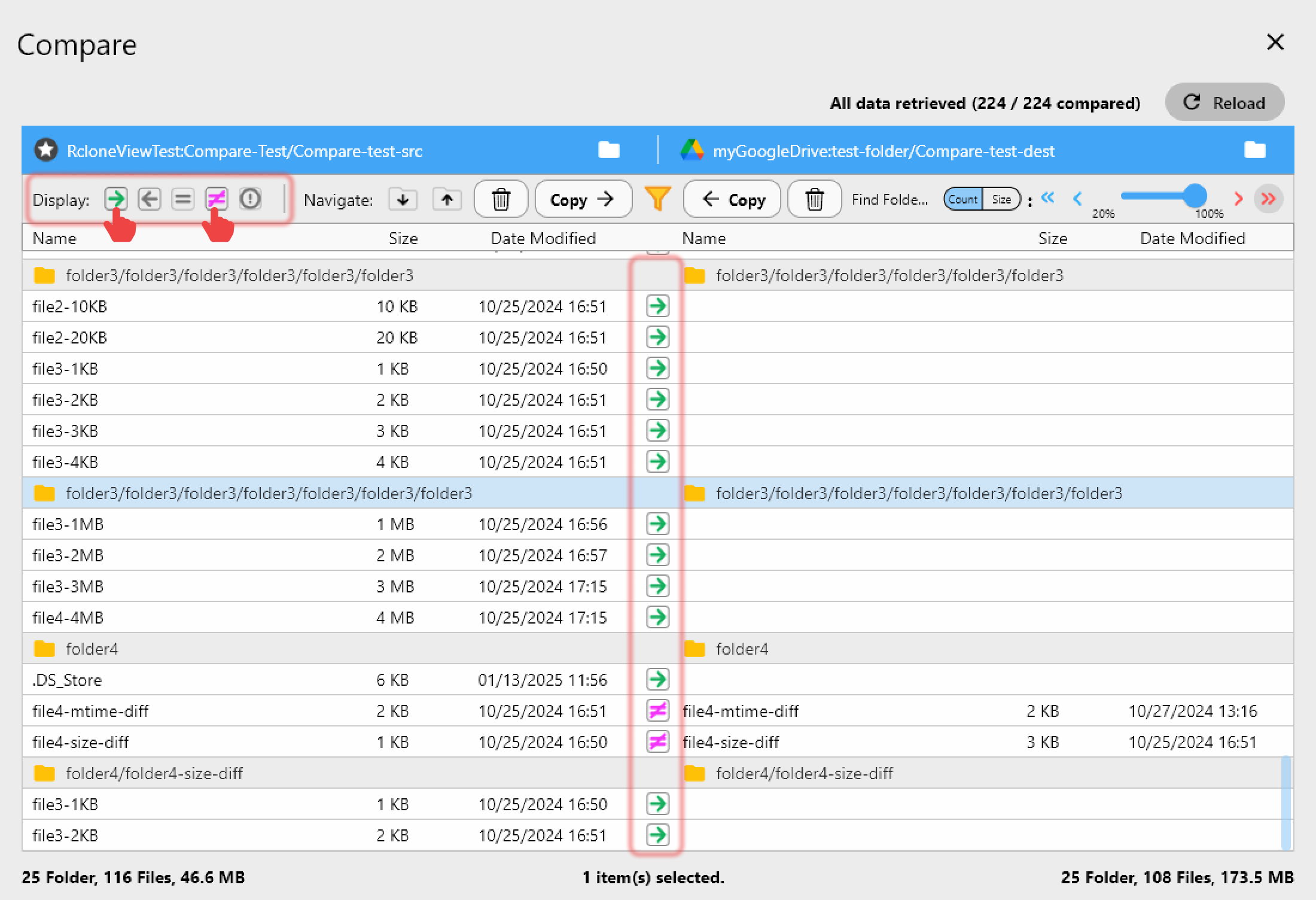

Compare Before and After

RcloneView's folder comparison feature lets you compare source and destination directories side by side. Use this after a transfer to confirm that all files are present and match. The comparison shows differences in file count, size, and modification time — a quick visual check that the transfer is complete.

Managing Genomics and Imaging Datasets

Life sciences teams routinely work with files that are orders of magnitude larger than typical business documents:

- Whole genome sequencing produces 100-300 GB of raw data per sample.

- Cryo-EM imaging sessions generate terabytes of micrograph data.

- High-content screening can produce hundreds of gigabytes of cell images per experiment.

- Mass spectrometry raw data files range from hundreds of megabytes to tens of gigabytes.

These datasets must be moved between instruments (often on-premises), analysis clusters (often cloud-based), and archival storage (often a different cloud provider or cold storage tier).

Optimizing Large Transfers

RcloneView supports several strategies for handling massive datasets efficiently:

- Multi-threaded transfers — use

--transfersto run multiple file transfers in parallel and--multi-thread-streamsto split individual large files across multiple connections. - Bandwidth scheduling — limit transfer speeds during business hours to avoid saturating the network, then run at full speed overnight. Use

--bwlimit "08:00,10M 18:00,off"to set time-based limits. - Resumable transfers — if a transfer is interrupted (network drop, system restart), rclone resumes from where it left off rather than starting over.

- Chunked uploads — large files are automatically split into chunks for upload, with configurable chunk sizes to match your network conditions.

In RcloneView, configure these options per job. A genomics data pipeline might use aggressive parallelism (--transfers 16 --multi-thread-streams 8), while a clinical document sync might use conservative settings to prioritize reliability.

Encryption for Data at Rest and in Transit

Pharmaceutical data often includes personally identifiable information (PII) from clinical trial participants, proprietary research data, and trade secrets. Encryption is not optional — it is a regulatory and business requirement.

Encryption in Transit

All cloud provider connections in rclone use TLS/HTTPS by default. Data moving between your system and the cloud is encrypted during transit without any additional configuration.

Encryption at Rest with Crypt Remotes

Rclone's crypt remote adds client-side encryption before data leaves your machine. Files are encrypted with AES-256 and file names can be optionally encrypted or obfuscated. The encryption keys never leave your control — the cloud provider cannot read your data.

To set up an encrypted remote in RcloneView:

- Create a standard remote pointing to your cloud provider (e.g., an S3 bucket).

- Create a crypt remote that wraps the standard remote.

- All files transferred through the crypt remote are automatically encrypted before upload and decrypted on download.

This is especially important for clinical trial data stored on third-party cloud infrastructure, where regulatory requirements may mandate that the cloud provider cannot access the data content.

Key Management

Encryption keys must be managed carefully:

- Store the rclone crypt password and salt in a secure secrets manager (e.g., AWS Secrets Manager, HashiCorp Vault).

- Document the key recovery procedure as part of your disaster recovery plan.

- Never store encryption keys in the same location as the encrypted data.

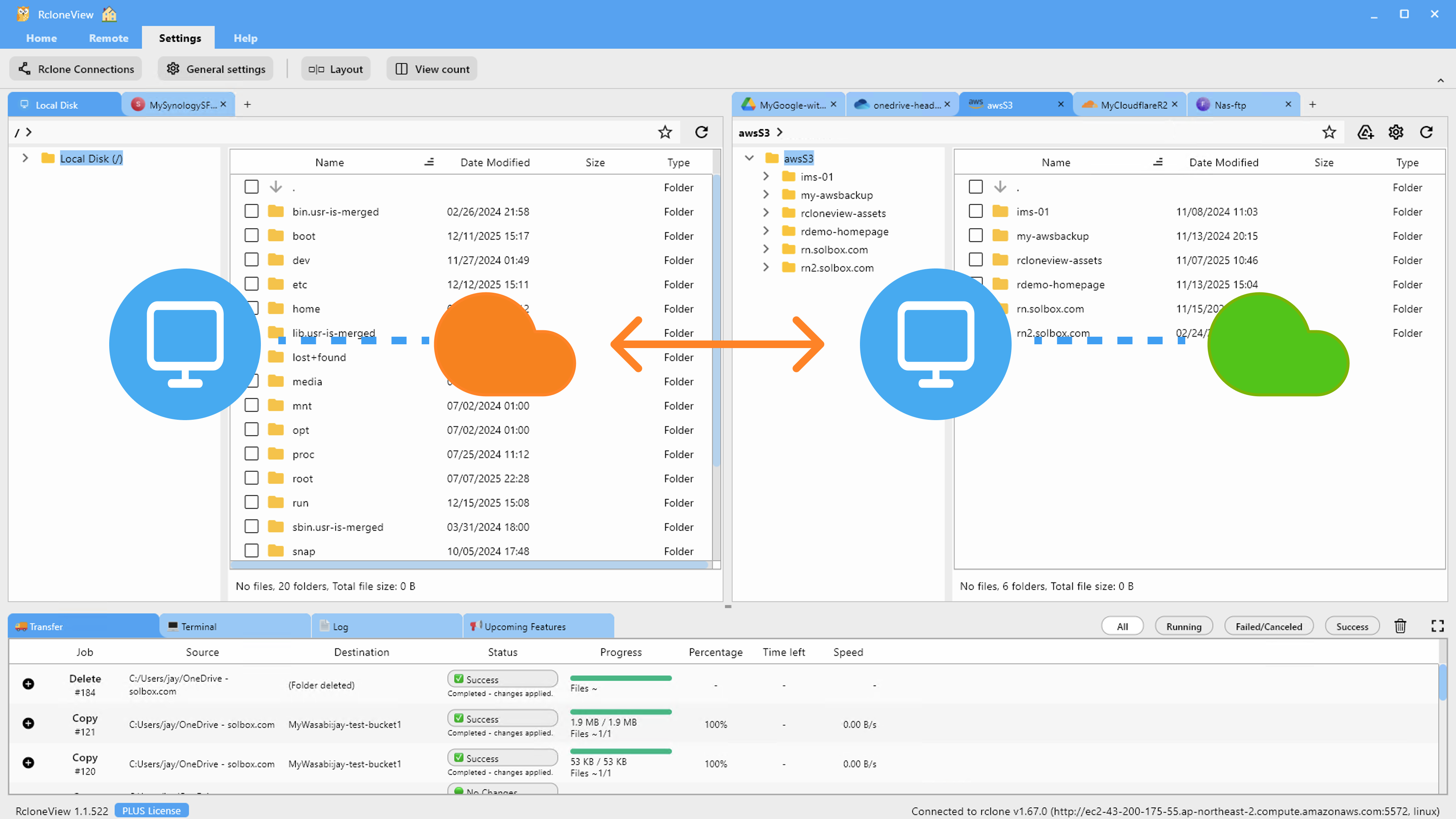

Multi-Cloud Architecture for Pharma

Pharmaceutical organizations commonly use multiple cloud providers for different purposes:

| Use Case | Typical Provider | Reason |

|---|---|---|

| Genomics pipelines | AWS S3 | EC2 compute, Batch, established bioinformatics tooling |

| AI/ML drug discovery | Google Cloud | Vertex AI, TPU access, BigQuery for structured data |

| Enterprise apps (ERP, QMS) | Azure | Microsoft 365 integration, Active Directory |

| Long-term archival | Wasabi / Backblaze B2 / S3 Glacier | Low-cost, immutable storage for retention requirements |

| Collaboration (docs, reports) | Google Drive / OneDrive | Familiar interfaces for non-technical staff |

RcloneView connects to all of these from a single interface. Set up each provider as a remote, then use the two-pane explorer to browse, compare, and transfer between any combination. A researcher can drag genomics output from an S3 analysis bucket to a Wasabi archival bucket, then copy the summary report to a Google Drive shared folder — all within the same RcloneView session.

Validated Environments and Qualification

Using RcloneView in a GxP-validated environment requires treating it like any other computerized system:

Installation Qualification (IQ)

Document the installation of RcloneView and rclone, including:

- Software version numbers.

- Operating system and hardware specifications.

- FUSE driver versions (for mount functionality).

- Network configuration and proxy settings.

Operational Qualification (OQ)

Test that RcloneView performs as expected:

- Transfer a known set of files and verify checksums match.

- Confirm that sync operations correctly detect and resolve differences.

- Test error handling — interrupt a transfer and verify it resumes correctly.

- Verify that job logs capture all required information.

Performance Qualification (PQ)

Validate that the system performs reliably under production conditions:

- Run transfers with production-scale datasets.

- Monitor performance over an extended period.

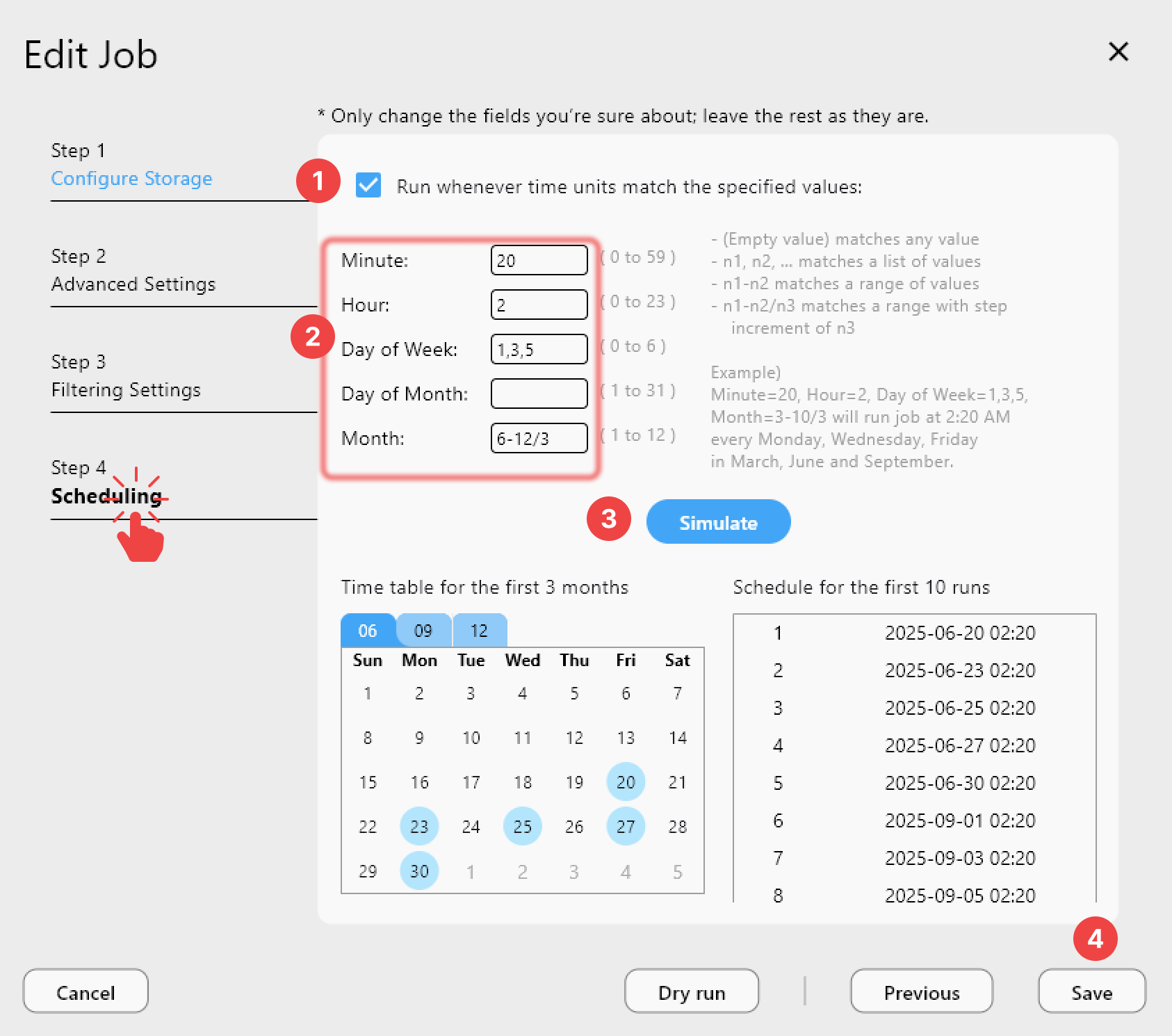

- Verify that scheduled jobs execute as configured.

- Confirm that all logs and audit trails are complete and accurate.

Document all test results and retain them as part of your validation package. RcloneView's Job History and transfer logs provide much of the evidence needed for qualification.

Automating Compliant Workflows

Manual file management introduces risk — someone might copy to the wrong location, forget to verify checksums, or skip a step. Automation reduces this risk.

Scheduled Sync Jobs

Create sync jobs in RcloneView that run on a defined schedule:

- Daily instrument data backup — sync new sequencing data from the instrument server to S3 every night at 2 AM.

- Weekly archival — move data older than 90 days from active S3 buckets to Glacier or Wasabi.

- Real-time clinical document sync — keep OneDrive and SharePoint folders in sync with an S3 compliance archive.

Job Monitoring and Alerts

RcloneView's Job History tracks every execution, including start time, duration, files transferred, errors, and completion status. Review these logs regularly as part of your quality management process.

For critical transfers, integrate with notification systems (Slack, email) to alert the team immediately if a job fails.

Getting Started

- Download RcloneView from rcloneview.com.

- Add your cloud remotes — S3 buckets, Google Cloud Storage, Azure Blob, OneDrive, or any other provider your team uses.

- Set up encrypted remotes for any storage containing PII or proprietary research data.

- Create sync jobs with checksum verification enabled for critical data transfers.

- Schedule automated backups and archive jobs to maintain compliance without manual effort.

Managing pharmaceutical data across multiple clouds does not have to mean compromising on compliance or efficiency. With RcloneView, life sciences teams get a single tool that handles everything from terabyte genomics transfers to routine document syncs, with the verification and logging capabilities that regulated environments demand.

Related Guides: